Every high-profile breach of the last decade traces back to a decision — or a non-decision — made somewhere in the software development lifecycle. The credential that was hardcoded in the repo. The input that was never validated. The dependency that stayed unpatched. The log line that never fired. A secure sdlc is not a different process from the one your engineering organization already runs; it is the same process with security treated as a first-class concern in each phase, not a gate bolted onto the end. This guide walks the full shape of a secure software development lifecycle in 2026: the six phases, the frameworks worth knowing (Microsoft SDL, NIST SSDF, OWASP SAMM, BSIMM), the activities that matter in each phase, the tooling that has matured and the tooling that has not, and the rollout pattern that actually takes hold in engineering organizations without triggering the usual immune response.

What Is a Secure SDLC (Security SDLC)?

A software development lifecycle is the set of practices an engineering organization uses to move an idea from concept to shipped code to operated service. A security sdlc — synonymous in modern usage with "secure SDLC" — is the same lifecycle with security controls, reviews, and feedback loops integrated into every phase. The distinction from a traditional SDLC is not that security exists (traditional SDLCs often had security at the end, as a review gate) but that security is present from the first requirements conversation to the post-incident retrospective, and each phase produces security artifacts that feed into the next.

The historical framing is worth understanding because it shapes how engineering leaders react to the phrase. Through the 1990s and early 2000s, security was typically a late-stage review — a team of specialists descended on the project before production release, found problems, filed bugs, and either the release shipped late or the bugs rolled to the next release. Microsoft's response to the Code Red and Nimda incidents in 2001-2002 produced the Security Development Lifecycle (SDL), which was the first widely adopted model proposing security activities throughout the lifecycle rather than at the end. Twenty-plus years later, the terminology has broadened — NIST SSDF, OWASP SAMM, BSIMM, various DevSecOps frameworks — but the core idea is the same: security is not a gate, it is a property of the process. The role that operationalizes the secure SDLC inside a modern engineering organization is increasingly named explicitly: DevSecOps engineering, with its own toolchain, on-call patterns, and career path.

The practical payoff is measurable. Industry data from the past decade consistently shows that a vulnerability found in the requirements phase costs a fraction of what the same vulnerability costs to fix post-production. IBM's classic study put the ratio at roughly 100:1 between design-phase and production-phase remediation cost; more recent work from Ponemon and the Consortium for IT Software Quality broadly confirms the same order of magnitude. A secure SDLC is, economically, a cost-avoidance program. It is also, in the current compliance landscape, an expectation — frameworks from PCI DSS to SOC 2 to the EU Cyber Resilience Act all contain language that presumes a secure software development process exists and produces evidence.

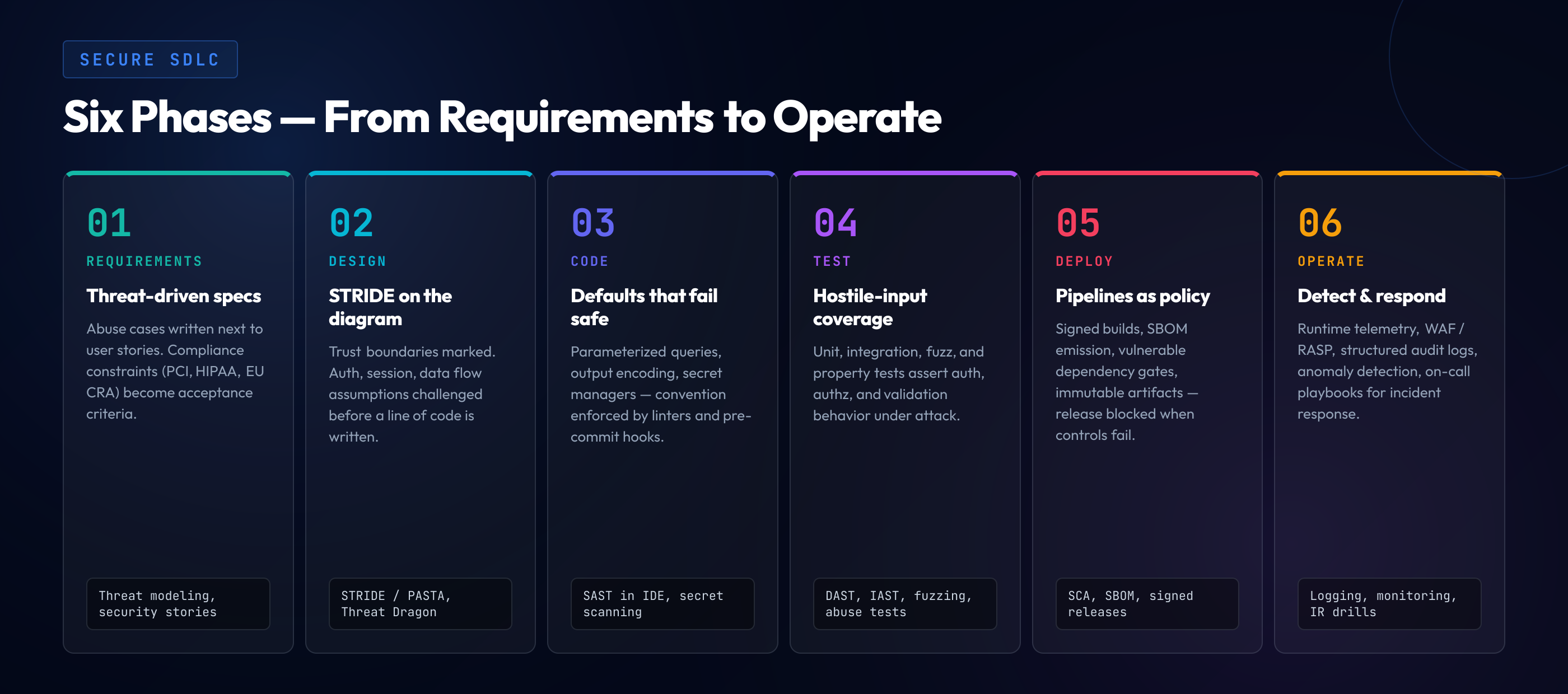

The Six Phases of a Secure SDLC

Different frameworks draw the phase boundaries differently, but the substance converges on six phases. Calling them by these names is less important than ensuring each set of activities actually happens.

| Phase | Core activity | Primary artifact |

|---|---|---|

| Requirements | Identify security requirements, risk context, regulatory scope | Security requirements document |

| Design | Threat modeling, architectural security review, trust-boundary analysis | Threat model and design review |

| Implementation | Secure coding, code review, developer enablement | Code with SAST-clean status and reviewed PRs |

| Verification | SAST, DAST, IAST, SCA, penetration testing | Tool scan results + pentest report |

| Release | Secure deployment, configuration review, release gates | Release attestation + runtime config |

| Response | Monitoring, incident response, post-incident feedback | Incident records + curriculum updates |

The six phases are not a waterfall. A modern engineering organization running sprints or continuous deployment moves through all six phases on an ongoing basis — requirements for the next feature happen in parallel with verification of the last feature and response activities on the service in production. The "phases" are perspectives on the same flow of work, not sequential stages. What the secure SDLC discipline requires is that each perspective receives the attention it needs, which usually means dedicated activities and artifacts rather than the assumption that general engineering rigor covers security.

Secure SDLC Frameworks Compared

Four frameworks dominate the conversation in 2026, and the differences among them are large enough to drive the choice of which one an organization actually adopts. The frameworks are not mutually exclusive — most mature programs reference multiple — but a primary frame of reference is worth picking.

Microsoft Security Development Lifecycle (SDL). The microsoft security development lifecycle — or microsoft sdl as it is commonly abbreviated — is the grandparent of all modern secure SDLC frameworks. Published externally starting in 2004, the SDL prescribes specific practices for each phase: security training, security requirements, threat modeling, secure coding guidelines, static analysis, dynamic analysis, attack surface review, penetration testing, incident response planning. The framework is prescriptive; Microsoft published the specific activities, the required artifacts, and the criteria for each. That prescriptiveness is both its strength (the activities are concrete) and its weakness (the framework reflects Microsoft's organizational context and does not always translate directly to other environments). For an organization that wants a step-by-step pattern to follow, the SDL remains the most detailed public framework.

NIST Secure Software Development Framework (SSDF, SP 800-218). The most recent of the major frameworks, published in its current form in 2022 with updates in 2023 and 2025. The SSDF is deliberately less prescriptive than the SDL — it defines a set of practices organized into four groups (Prepare the Organization, Protect the Software, Produce Well-Secured Software, Respond to Vulnerabilities) and leaves implementation detail to the organization. The framing that distinguishes the SSDF is its emphasis on producing software that is resistant to tampering along the supply chain, which reflects the post-SolarWinds landscape and the 2021 executive order on supply chain security. In 2026, the SSDF is the framework most likely to be cited by federal contracting language and is increasingly referenced in private-sector procurement as well.

OWASP SAMM (Software Assurance Maturity Model). An open-source framework structured as a maturity model rather than a practice list. SAMM defines five business functions (Governance, Design, Implementation, Verification, Operations) with three security practices each, each practice having three maturity levels. An organization assesses its current level in each practice, sets a target level, and plans specific activities to close the gap. The maturity-model framing makes SAMM particularly useful for organizations that want to benchmark themselves and set incremental improvement goals without committing to the full prescription of the SDL. It pairs well with BSIMM as the measurement framework against a broader industry benchmark.

BSIMM (Building Security In Maturity Model). A descriptive framework — not prescriptive — assembled from observed practices at dozens of participating organizations and published annually by Synopsys. BSIMM does not tell an organization what to do; it tells the organization what peer organizations are doing, organized into four domains and twelve practices. The value of BSIMM is comparative: an organization can see where it falls relative to its industry's practices and identify areas where it is behind or ahead of peers. It is most useful as a planning tool and a conversation starter with leadership rather than as an execution framework.

The practical pattern in 2026 is usually to pick SAMM or NIST SSDF as the primary frame, reference the SDL for the specific activity prescriptions in each phase, and use BSIMM annually to benchmark. Organizations that adopt one framework in isolation tend to hit its limits; organizations that reference multiple tend to produce programs that match their operational reality rather than any single framework's implicit assumptions.

Phase 1: Security Requirements Gathering

The first phase of a secure SDLC is also the cheapest place to prevent vulnerabilities that would otherwise become expensive later. Security requirements are the counterpart to functional requirements: for every feature, there are implicit or explicit security expectations, and writing them down early turns them from assumed-knowledge disputes into designable properties.

A complete security requirements set covers three layers. The first is the regulatory layer — PCI DSS if the system processes cards, HIPAA for health information, GDPR or CCPA for personal data, the EU Cyber Resilience Act for connected products sold in the EU, sector-specific rules where applicable. The second is the organizational layer — the security policies the company itself has committed to, including internal standards for authentication, cryptography, logging, and data handling. The third is the feature-specific layer — the security behavior this particular feature is supposed to exhibit, which is usually expressed as abuse cases and negative requirements (the things the system must not do).

Abuse cases. For each user story, write at least one abuse case — a short description of what an attacker would try. The payment form has a "user enters card and submits" user story; the corresponding abuse case is "attacker submits malformed inputs attempting injection, replay, or CSRF" and the requirement is that the server rejects or handles these patterns safely. Abuse cases are not threat models — they are much shorter — but they bridge from feature requirements into security-conscious thinking during the requirements phase itself.

Data classification. What data will this feature handle, and what is the sensitivity class of each? Public, internal, confidential, restricted. The classification drives the requirements for encryption at rest and in transit, logging behavior, access control, and retention. A feature that handles restricted data (payment card data, health records, credentials) has a much longer requirements list than one handling public data, and the difference has to be recognized upstream.

Trust boundary identification. Where does data cross from one trust zone to another? Client to server, internet-facing service to internal service, authenticated user to privileged operation. Each boundary is a location where security requirements concentrate — authentication, authorization, input validation, output encoding, rate limiting. The boundaries identified in the requirements phase become the focus of the threat modeling in the next phase.

Phase 2: Threat Modeling and Secure Design

Threat modeling is the activity that distinguishes a serious secure SDLC from a checklist-driven one. The goal is to analyze the design before it is implemented, identify the ways it can go wrong, and adjust the design to make those ways harder. When done well, threat modeling prevents entire classes of vulnerability from being introduced in the first place — which is orders of magnitude cheaper than finding and fixing them later. The frameworks and tooling below are introduced here as orientation; for a deeper walkthrough of each framework, session mechanics, and automation patterns, see our threat modeling guide for developers.

STRIDE. Microsoft's framework for categorizing threats: Spoofing identity, Tampering with data, Repudiation, Information disclosure, Denial of service, Elevation of privilege. For each component of the design, walk each category and ask what could go wrong. STRIDE is the most widely adopted framework for developer-driven threat modeling because it maps cleanly onto the kinds of questions a developer can answer about their own design.

PASTA (Process for Attack Simulation and Threat Analysis). A more rigorous, risk-centric framework with seven stages. PASTA is heavier than STRIDE and typically applied to systems with material risk exposure (payment systems, authentication infrastructure, systems handling restricted data). The stages move from business objectives through application decomposition, threat analysis, vulnerability analysis, attack modeling, and risk assessment. For most engineering teams, PASTA is the framework the security team uses on high-stakes reviews rather than the one every team applies to every feature.

LINDDUN. The privacy-focused analogue to STRIDE: Linkability, Identifiability, Non-repudiation, Detectability, Disclosure, Unawareness, Non-compliance. Useful where privacy threats dominate the risk profile — systems handling personal data under GDPR, CCPA, or equivalent regimes.

The threat modeling session. A typical team-scale threat modeling session runs 60-90 minutes and produces a short document: system diagram, trust boundaries marked, STRIDE threats per component, mitigations for each. The session is most valuable when it happens during design — before implementation starts, while changing the design is cheap. Organizations that compress threat modeling into a post-implementation activity get less value because the design decisions are already made and the team's attention is on shipping, not redesigning.

Tooling. Microsoft Threat Modeling Tool is the free legacy option. OWASP Threat Dragon is the modern open-source equivalent. IriusRisk, SD Elements, and ThreatModeler are commercial options with automation and integration. For most teams in 2026, a whiteboard or a collaborative diagram and a team conversation produces more value than sophisticated tooling — the tool matters less than the cultural habit of doing the analysis.

Phase 3: Secure Coding and Code Review

The implementation phase is where the developer meets the security requirements and threat model in practice. Two activities dominate: writing code that implements security correctly, and reviewing code that others have written for security defects. Both are harder than they look, and both depend heavily on developer skill that has to be actively cultivated rather than assumed.

Secure coding standards. The organization publishes a set of language-specific standards that describe how security-relevant operations must be implemented. Parameterized queries for SQL, output encoding for HTML, framework-specific authentication patterns, cryptography library choices and configurations. Standards that exist only as documents get read once and forgotten; standards that exist as lint rules, SAST rules, and code-review templates shape the code that actually gets written.

Developer training. The humans writing the code need fluency in the vulnerability classes the code is exposed to — in the specific languages they use. Generic "watch out for injection" training is not sufficient in 2026; developer education has to be language-specific, hands-on, and continuously updated. For teams operating under PCI DSS, the training requirement is formalized in Requirement 6.2.2's secure coding training clause, which prescribes language-specific, role-relevant training with per-developer evidence. Teams outside of PCI scope still benefit from the same discipline; the difference is whether it is a compliance obligation or a productivity investment.

Code review. Every change that touches security-relevant code gets reviewed by at least one other engineer, with an explicit security lens. The review is not a second implementation; it is a structured question-answer process — does this input validate correctly, does this authentication check handle the negative cases, does this cryptographic call use the current recommended algorithm. A checklist tied to the organization's standards keeps reviews consistent. The practice of secure code review is itself a skill that scales with deliberate cultivation, not one that appears automatically once the PR template has a checkbox.

AI-assisted development. In 2026, a meaningful fraction of code entering production began as an AI suggestion — from Copilot, Claude, Cursor, or another coding assistant. The secure SDLC has to account for this: the developer accepting an AI suggestion is responsible for its security, and review practices need to specifically address the vulnerability patterns common in AI output. A code review checklist tuned to AI-generated code turns what would otherwise be a new source of risk into a structured review activity.

The OWASP Top 10 as a curriculum anchor. The OWASP Top 10 is the single most effective way to structure a developer-facing secure coding curriculum because it reflects the vulnerability classes that actually appear in production code at the highest frequency. A developer fluent in the current OWASP Top 10 and its evolution has the mental model to recognize most of the patterns that threat models will flag and code reviews will catch. The Top 10 is not exhaustive, but it is the highest-value starting point.

Phase 4: Security Testing

Verification is the phase where tools do the repetitive work humans cannot do at scale. A mature secure SDLC runs multiple tool classes against every change, integrates the results into the engineer's workflow, and uses the results to tune the upstream practices in requirements, design, and implementation.

Static Application Security Testing (SAST). Tools that analyze source code without executing it, looking for known vulnerability patterns. Modern SAST tools (Semgrep, CodeQL, SonarQube, Checkmarx, Snyk Code, Veracode Static Analysis) have largely solved the false-positive problem that plagued the category a decade ago, and the best tools now produce per-language findings with enough context that developers can act on them directly. SAST is the tool that belongs in every pull request — fast, shift-left, language-specific.

Dynamic Application Security Testing (DAST). Tools that test running applications by probing endpoints, sending malformed inputs, and observing responses. DAST finds categories that SAST misses — configuration issues, runtime authentication flaws, certain injection patterns — and is complementary rather than redundant. Modern DAST tools (OWASP ZAP, Burp Suite Enterprise, Invicti, StackHawk, Bright Security) integrate into CI pipelines and run against staging environments on every build.

Interactive Application Security Testing (IAST). A hybrid category that instruments the running application and observes code paths triggered by test traffic. IAST tools produce higher-fidelity findings than either SAST or DAST alone because they see both the code and the runtime context, at the cost of requiring test traffic that actually exercises the security-relevant paths. Contrast Security and Checkmarx IAST are the named players; the category is less mature than SAST or DAST but is growing. For a deeper walkthrough of how SAST, DAST, and IAST differ in what they actually catch, where each fits in the developer loop, and the starter combination for teams building out an AppSec program, see our SAST vs DAST vs IAST comparison guide.

Software Composition Analysis (SCA). Tools that identify the open-source and third-party dependencies in a codebase and check them against known vulnerability databases. SCA is the category that has become essential post-Log4Shell and post-SolarWinds — the supply chain threat model means that a dependency without a known CVE today can have one tomorrow, and continuous monitoring is the response. Modern SCA tools (Snyk, Dependabot, Mend, Sonatype, GitHub's native dependency graph) produce both vulnerability findings and license findings and integrate into the PR workflow.

Penetration testing. Human-driven adversarial testing against the system, typically annually for in-scope systems and after material changes. Penetration testing finds what tools miss — business logic flaws, chained vulnerabilities, environmental issues. The report's value depends heavily on the tester's depth; a good pentest produces findings that the team learns from, a bad pentest produces a list of CVEs the SCA tool already flagged.

The point of running multiple tool classes is coverage, not redundancy. Each class finds categories the others miss, and the combined output, integrated into the engineer's day-to-day workflow, keeps the defect rate down without turning security into a separate workflow the engineer has to context-switch into.

Phase 5: Secure Deployment and Release Management

Code that is secure in the repository is not automatically secure in production. The deployment phase is where configuration meets code, where the attack surface becomes live, and where a surprising fraction of production vulnerabilities actually originate — not in the code but in how the code was deployed.

Configuration security. The production configuration has to match the security assumptions the code was written against. TLS enforcement, authentication defaults, logging configuration, secret management. A codebase that expects to be deployed behind TLS but is deployed on plain HTTP is a vulnerability created in deployment, not in code. Infrastructure-as-Code review — applying the same review discipline to Terraform, CloudFormation, or Kubernetes manifests that applies to application code — is the practice that catches these.

Secret management. Credentials, API keys, encryption keys, certificates. The practice is to hold these in a dedicated secret manager (HashiCorp Vault, AWS Secrets Manager, Azure Key Vault, Google Secret Manager, Doppler, Infisical) and inject them at runtime, never commit them to the repository, and rotate them on a documented cadence. Teams that manage secrets through environment variables loaded from config files tend to accumulate secret sprawl that becomes nearly impossible to audit.

Release gates. The deployment pipeline has checkpoints that block release if security criteria are not met. SAST findings above a threshold, SCA findings for critical CVEs, missing required approvals, unsigned artifacts. A release gate that blocks nothing in practice is a cultural artifact, not a control; a release gate that blocks frequently without clear remediation paths becomes the thing developers route around. The balance is gates tuned to material risk with defined exception processes.

Build provenance and supply chain integrity. Post-SolarWinds, post-XZ-Utils, the security community has invested heavily in build provenance — the ability to cryptographically verify that the artifact deployed to production came from the source code in the repository, built in the expected environment, without tampering. SLSA (Supply-chain Levels for Software Artifacts) is the emerging standard; Sigstore is the tooling ecosystem. In 2026, build provenance is moving from "leading practice" to "table stakes for systems with material risk exposure".

Deployment scanning. The deployment environment itself needs vulnerability scanning, separate from the application code. Container images scanned for vulnerable base images, Kubernetes configurations reviewed for policy compliance, cloud resources audited against CIS benchmarks. This is the infrastructure-side counterpart to application scanning and is often the gap in organizations that have mature application-side practices but treat infrastructure as plumbing.

Phase 6: Incident Response and Continuous Monitoring

A system in production is continuously exposed. The response phase is where the secure SDLC closes its loop: monitoring detects attacks and anomalies, incident response contains and remediates them, and the organization learns from each incident to harden the upstream phases. A secure SDLC that skips the response phase is a program that prevents the previous attack pattern but never improves against the next one.

Logging and telemetry. Every security-relevant event produces a log entry: authentication attempts (successful and failed), authorization decisions, privileged operations, configuration changes, data access patterns. The logs are aggregated in a system-of-record where they can be queried by the incident response team in real time and retained for the required periods. Under PCI DSS, logs are retained at least one year with the most recent three months immediately available; under HIPAA, six years; under GDPR, varies by data category. The retention policy is a deployment decision with compliance implications, not a nice-to-have.

Detection engineering. Logs become useful when they trigger alerts. Detection engineering is the practice of writing detection rules that surface attacks and anomalies without drowning the response team in false positives. The rules come from threat modeling (we identified this threat, so we alert on its indicators), from incidents (we missed this attack last time, so we alert on it now), and from upstream intelligence (this attack pattern is active in the wild, so we look for it). Detection engineering is now a specialized role at mature organizations; at smaller organizations, it is a shared responsibility between security and site reliability engineering.

Incident response. The process for what happens when a detection fires. Who is paged, who has authority to take containment actions (block an IP, revoke a credential, take a service offline), what the escalation path is, and what the communication plan is. A good incident response plan is practiced — tabletop exercises quarterly, full drill annually — because plans that have never been executed tend to fail on contact with real incidents.

Post-incident review. After every incident, a blameless post-mortem: what happened, what the timeline looked like, what detection fired (or did not), what upstream phase could have prevented this, what changes are required. The post-mortem's outputs feed back into requirements (new security requirement for future features of this type), design (threat model update), implementation (secure coding standard update), training (curriculum update), and detection (new rule). A secure SDLC that does not close this loop is a program that repeats its mistakes.

Vulnerability disclosure. Most mature organizations run a security.txt file (RFC 9116) indicating how external researchers can report vulnerabilities, and many run formal bug bounty programs. The disclosure channel is part of the SDLC's response phase: vulnerabilities reported externally have to flow into the same triage, remediation, and post-incident review process as internally discovered ones.

Secure SDLC and Compliance: The Framework Overlap

The secure SDLC is useful in its own right. It is also the process that produces the evidence most compliance frameworks require. Understanding the overlap saves the compliance team and the engineering team from running two parallel programs that cover substantially the same ground.

PCI DSS 4.0.1. Requirements 6.1 through 6.5 map almost exactly onto SDLC phases — 6.2.1 (documented development practices), 6.2.2 (developer training), 6.2.3 (code review), 6.2.4 (engineering techniques to prevent common attacks), 6.3 (vulnerability management), 6.5 (change management). An organization running a mature secure SDLC is substantially prepared for PCI 6.x; an organization without one is building the evidence from scratch for each assessment.

NIST SSDF. The SSDF is essentially a federal-government specification of a secure SDLC. Organizations that adopt the SSDF as their framework are, by definition, running a secure SDLC; the overlap is total rather than partial.

SOC 2 (CC7.x, CC8.x). The change management and vulnerability management criteria in SOC 2 are secure SDLC activities. The evidence a SOC 2 auditor wants — documented change procedures, testing evidence, deployment records — is the same evidence the SDLC produces as a byproduct of operation.

ISO 27001 Annex A.14. The security in development and support processes controls map directly to SDLC phases. A14.2 in particular reads as a compressed version of a secure SDLC standard.

EU Cyber Resilience Act. Annex I requires secure design and development, vulnerability handling, and supply chain integrity — the CRA's terminology for an SDLC. The regulation's language is broad, but the implementation reduces to the same secure SDLC practices covered above, applied to connected products.

OWASP ASVS 5.0. ASVS is not itself an SDLC framework — it is a verification standard. But in a mature secure SDLC, ASVS is the document the verification phase anchors to: it tells the development team what evidence the testing-and-verification stage must produce, at the level of individual requirements. The ASVS 5.0 developer guide walks the 14 chapters and the L1/L2/L3 levels in depth; what matters in SDLC context is that ASVS verification work fits cleanly into Phase 4 (security testing) and Phase 6 (continuous monitoring), and the evidence the verification produces satisfies PCI 6.3, SOC 2 CC7.x, ISO 27001 A.14.2, and the CRA Annex I requirements as a byproduct. Teams running both an SDLC framework (SAMM or SSDF) and ASVS verification get the broadest single-investment compliance coverage available; running either without the other typically leaves visible evidence gaps.

The practical pattern is to choose a secure SDLC framework (SAMM, SSDF) as the primary organizing frame, map compliance-specific requirements to SDLC phases rather than building parallel compliance structures, and produce the evidence once as SDLC artifacts that serve multiple compliance frameworks. Organizations that run the SDLC and the compliance program as separate streams burn operational time on redundant activities and create evidence inconsistencies that their auditors will find.

Rolling Out a Secure SDLC Without Triggering the Immune Response

The gap between "decide to adopt a secure SDLC" and "have one" is where most programs either succeed or quietly wind down. Engineering organizations have a strong immune response to new processes that feel imposed; programs that fail usually fail for cultural reasons, not technical ones. The rollout pattern that works consistently has five properties.

Start with visibility, not enforcement. Before any new gate blocks any release, the program produces visibility: dashboards showing how many PRs have been reviewed for security, how many services have threat models, how many dependencies have critical CVEs. Visibility creates awareness without creating friction. Teams that see their own data often self-correct before enforcement is needed.

Pair with a team that wants it. Find an engineering team that is already security-curious — the team that had an incident last quarter, the team working on a regulated feature, the team whose tech lead reads security blogs. Roll out the full SDLC pattern with that team first. Document what worked, what did not, what the team's experience was. When you expand to other teams, you have an internal reference story rather than an external pitch.

Integrate with existing tools, do not add new dashboards. Findings go to the existing ticketing system. Scan results go to the existing PR UI. Training assignments go through the existing LMS. Each new dashboard is a surface the engineer has to learn to check, and every surface added increases the probability of the dashboard becoming ignored. Integration into tools engineers already look at keeps the data in front of them without adding new habits.

Tune to the organization's actual vulnerabilities. A generic training curriculum, a generic scanning configuration, a generic code review checklist — all are weaker than versions tuned to the specific vulnerability classes the organization's code actually produces. Analyze the last year of SAST findings, pentest reports, and incidents. The SDLC practices that address those findings get the first investment; the generic best-practice items can follow once the highest-yield items are in place.

Measure what the CFO will ask about. The program needs metrics the business recognizes: defect-escape rate (vulnerabilities found in production as a percentage of total found), mean time to remediate, training completion percentage, compliance audit finding count over time. These are the metrics that survive leadership changes and budget cycles. Metrics that only the security team understands tend to disappear when the security leader changes.

The Common Failure Patterns

Programs that stall usually stall in one of a small number of recognizable ways. Identifying them early is the cheapest defense.

Shelfware frameworks. The organization adopts SAMM or SSDF, writes a policy document, and files it. No activities change in engineering. The framework is a compliance artifact rather than an operational one. The signal is a document that has not been updated since the year it was written.

Security team does everything. The security team writes the threat models, runs the scans, reviews the code, files the tickets. The engineering team is the recipient of security work, not the owner. The pattern does not scale — the security team is always a bottleneck — and it fails to build the engineering capability the program ultimately depends on.

Too many gates, too early. Every phase has a mandatory gate; every gate blocks releases. The engineering organization experiences the program as friction rather than capability, and begins routing around it: emergency releases, exceptions, shadow IT. The program ends up with lower security than the starting position because the bypasses are now institutionalized.

Tools without practice. The organization buys SAST, DAST, SCA, and IAST. The dashboards fill with findings. Nobody triages them, nobody remediates them, nobody tunes the rules. The tools become noise, then background noise, then muted noise. The budget was spent; the security posture did not improve.

Training without reinforcement. Annual developer training is completed, certificates are issued, and nothing in the day-to-day workflow reinforces the content. Six months later, the material is forgotten, and the code being written looks the same as it did before. Training without an ongoing loop — code review, pairing, microlearning, incident retrospectives that cite curriculum content — is an expensive one-time event rather than a capability-building program.

Each failure mode has a recovery path, but the recovery is harder than doing the rollout well the first time. The rollout principles above are specifically designed to avoid landing in these patterns.

The SDLC Works Only If Your Developers Can Do the Work

A secure SDLC on paper, without developers who can recognize vulnerabilities in their own language and fix them at PR velocity, produces the pattern every organization hits: tools that find findings nobody closes. SecureCodingHub builds the developer capability that turns the SDLC from a policy document into operational reality — language-specific, hands-on secure coding training for every phase of the implementation and verification work. If you are rolling out (or rebuilding) a secure SDLC in 2026 and want the developer side to land, we are happy to walk your team through how our program integrates.

See the PlatformThe Maturity Arc: Where Most Organizations Actually Are

If you benchmark an engineering organization against a SAMM or BSIMM framework in 2026, the distribution of maturity levels is remarkably consistent regardless of industry, size, or stated policy. The shape is worth understanding because it calibrates expectations.

The median organization is at SAMM level 1-2 in most practices: some activities present, not systematically applied, limited measurement. The high-performing 10% are at level 2-3: systematic practices, integrated tooling, measurable outcomes. The bottom 10% are at level 0-1: ad-hoc activities, minimal measurement, compliance-driven rather than risk-driven. The distribution does not change much year-over-year; what changes is the set of organizations in each cohort, as mature programs regress under leadership changes and immature programs advance when they find the right leader.

The arc for a specific organization from median to high-performing typically takes two to four years of sustained investment — not because the technical work is that large, but because the cultural shift is. Engineering organizations absorb change at a rate the surrounding business culture allows, and most businesses absorb process change in the five-to-fifteen-percent-per-quarter range. Organizations that attempt faster transitions tend to burn out the security leadership that drove them.

The practical implication for a program leader: pick a realistic three-year plan, staff the leadership for that duration, protect the plan from quarterly budget reviews that would otherwise compress it, and measure outcomes against the plan rather than against idealized targets. The SDLC frameworks are aspirational references; the actual trajectory is organizational.

Closing: Secure SDLC Is the Process That Produces Secure Software

There is no substitute for a working secure SDLC. Point tools do not substitute. Compliance documentation does not substitute. Annual training events do not substitute. External penetration tests do not substitute. The substitute that feels like the substitute — "we have a strong security team" — is the most dangerous, because a strong security team operating on a weak development process produces secure software through heroics, and heroics do not scale and do not survive leadership turnover.

What produces secure software consistently, at scale, through leadership changes and technology transitions, is a software development process in which security is a property of each phase rather than an activity appended to the end. That process — the secure SDLC — is not exotic in 2026. The frameworks are well-documented, the tools are mature, the practices are well-understood. The thing that is hard is the institutional discipline to adopt it, maintain it through the immune response, integrate it with the compliance obligations, and evolve it as the threat landscape evolves.

The organizations that do this well are not dramatically different from the organizations that do not. They have the same technologies, the same budgets, the same staffing challenges. What they have, that the others do not, is a set of institutional habits that treat security as part of building software rather than a separate activity. Those habits are built deliberately, one phase at a time, one team at a time, one release at a time. The framework you choose matters less than the habit you build. Pick the framework that makes the habit easier to sustain, and start with the phase where the next dollar invested produces the most risk reduction — which, for most organizations in 2026, is still the implementation phase's developer enablement work.