Most of the vulnerabilities that end up in bug bounty reports, penetration test findings, and post-incident reviews were visible at design time. Not visible in the code — visible in the whiteboard diagram before the code existed. Threat modeling is the engineering practice that catches those issues while they are still cheap: while the shape of the system is still a conversation, not a deployed service. This guide walks the frameworks engineering teams actually use in 2026 — threat model stride as the baseline, PASTA for risk-heavy systems, LINDDUN for privacy-dominated threat landscapes — the tooling (OWASP Threat Dragon, Microsoft Threat Modeling Tool, IriusRisk) and the cultural habits that separate teams that do threat modeling from teams that file it under "we should really start doing that."

What Is Threat Modeling?

Threat modeling is a structured analysis of a system's design to identify what can go wrong, who would make it go wrong, and what the system should do to prevent or detect it. The output is a short document — usually a diagram, a list of threats, and a list of mitigations — produced by the team that owns the design, ideally before implementation begins. The practice is old; the earliest recognizable form is Adam Shostack's work at Microsoft in the late 1990s, and the core mental model has not changed in the twenty-five years since: draw the system, mark the trust boundaries, walk each component and ask what an attacker could do to it.

What has changed is the level of organizational expectation. In 2026, threat modeling is referenced by name in NIST SSDF (PW.1.1), by OWASP SAMM as a distinct practice with maturity levels, by the Microsoft SDL as a phase activity, and implicitly by compliance frameworks that require secure design review. The EU Cyber Resilience Act requires a demonstrable design-phase security analysis for connected products sold in the EU market. PCI DSS expects documented design review for the cardholder data environment. The gap between organizations that do threat modeling and organizations that do not is no longer a matter of taste; it is increasingly a matter of audit exposure.

Where threat modeling sits in the lifecycle matters as much as whether it happens. It belongs in the design phase — after requirements are clear, before implementation starts, while the shape of the system is still being decided. Teams that try to threat-model after implementation get far less value because the design is already committed, the team's attention is on shipping, and the findings either get absorbed as technical debt or ignored outright. In the context of a secure software development lifecycle, threat modeling is the defining activity of Phase 2 (Design) and is what distinguishes a serious secure SDLC from a checklist-driven one.

STRIDE: The Developer-Friendly Framework

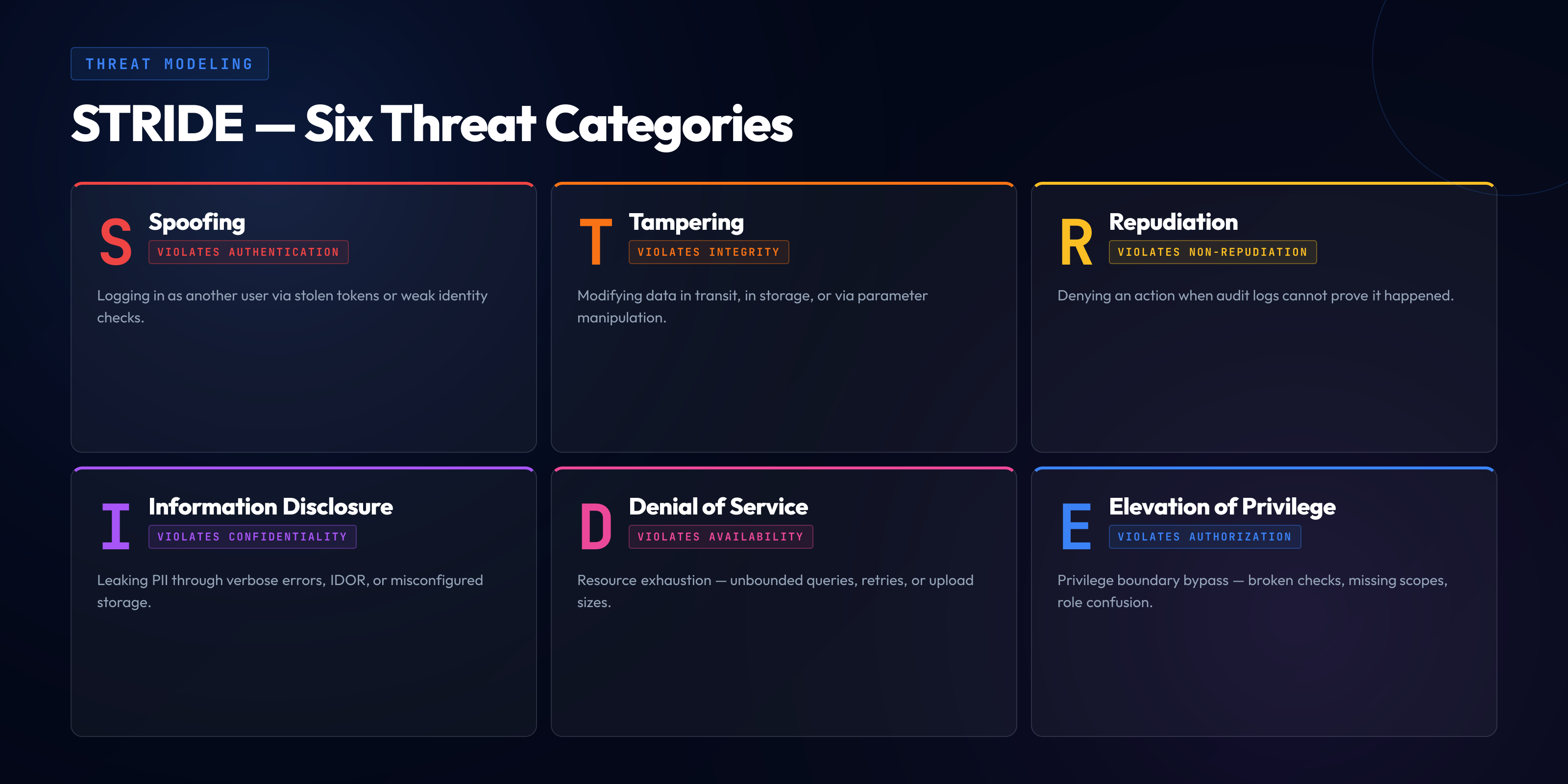

STRIDE threat modeling is the most widely adopted approach in 2026, and the reason is pragmatic rather than theoretical: STRIDE maps onto questions that developers can actually answer about their own designs. The acronym expands to six threat categories — Spoofing, Tampering, Repudiation, Information disclosure, Denial of service, Elevation of privilege — and each category corresponds to a question that is asked of each component in the design.

| Category | Attacker goal | Primary mitigation class |

|---|---|---|

| Spoofing | Pretend to be another user, service, or identity | Authentication |

| Tampering | Modify data in transit, in storage, or in memory | Integrity controls |

| Repudiation | Deny having performed an action | Logging and non-repudiation |

| Information disclosure | Read data the attacker is not authorized to see | Confidentiality / access control |

| Denial of service | Make the system unavailable to legitimate users | Availability controls |

| Elevation of privilege | Gain access or capability beyond what is authorized | Authorization |

The usefulness of the acronym is in the prompt it creates. Given a web service component, a developer who is not a security specialist may not spontaneously think "what are the attacks against this?" But when presented with STRIDE as a structured prompt — can this be spoofed, can this be tampered, can this be repudiated — the answers come more readily because each question is scoped. STRIDE's clean mapping from category to mitigation class is the second half of its value: identifying that a component has a spoofing threat almost always leads to an authentication question, and identifying an elevation-of-privilege threat leads to an authorization question. The framework teaches its own remediation pattern alongside its threat enumeration.

STRIDE is most often applied at the component or data-flow level rather than at the whole-system level. A data flow diagram shows the components (processes, data stores, external entities), the data flows between them, and the trust boundaries that separate zones of different privilege. STRIDE is then walked per-element — each process, each data store, each flow crossing a trust boundary gets the six-question treatment. This scales reasonably well because most components only have material threats in two or three of the six categories, and the walkthrough reveals which ones in a predictable way.

A STRIDE Walkthrough on a Sample App

The concrete mechanics of STRIDE are easier to convey on a worked example than in the abstract. Consider a modest e-commerce checkout service — a web frontend, an API gateway, a payment service, a database, and an external payment processor. The data flow crosses three trust boundaries: the internet boundary between the user's browser and the API gateway, the internal trust boundary between the API gateway and the payment service, and the external boundary between the payment service and the processor.

The API gateway — Spoofing. Can an attacker pretend to be a logged-in user? This is the authentication question. The mitigation class is session management: the gateway validates a session token on every request, the token is bound to a user identity, the token rotates on privilege change, and the token expires. If any of those are weak, spoofing is material. For a 2026 system, the mitigation typically is short-lived access tokens with refresh tokens bound to device fingerprints, and session invalidation on credential change.

The API gateway — Tampering. Can an attacker modify the request in flight or on the client side to change its effect? This is the integrity question, and it is where IDOR (insecure direct object reference) and parameter tampering vulnerabilities live. The mitigation class is server-side validation: every request identifier, every quantity, every price comes from the server's view of the session rather than from client-supplied parameters, and the server does not trust any client-controlled input to determine authorization. A checkout request that posts a price is a red flag; the server computes the price from the cart stored server-side.

The payment service — Information disclosure. Can an attacker who reaches the payment service read data they are not authorized to read? This is the classic confidentiality question. The mitigation class includes encryption at rest and in transit, access control on the data store, tokenization of cardholder data so that the primary account number is replaced with a token the payment service can use but the broader application cannot read, and logging discipline that never emits sensitive fields. For teams operating under PCI DSS, the tokenization requirement is explicit and the operational consequence is that scope reduction — fewer services that actually touch PAN — is a primary architectural driver.

The external boundary — Tampering and Information disclosure combined. Can an attacker on the network between the payment service and the processor intercept or modify the request? The mitigation is TLS, with current algorithm selections and pinned certificates where supported. In 2026, the default assumption is TLS 1.3 with modern cipher suites and HSTS preload; anything less is a design defect.

The database — Repudiation. If an internal user queries or modifies payment records, can the action later be denied? The mitigation class is audit logging: every privileged action logs actor, timestamp, target, and result to an append-only store that the actor cannot modify. For compliance purposes the log retention is typically a year minimum, and the log store is segregated from the primary database so that a compromise of one does not compromise the other.

The database — Denial of service. Can an attacker send queries that exhaust database resources? The mitigation class includes rate limiting at the gateway, query timeouts at the database, read replicas for expensive read paths, and connection pooling limits that prevent a bad actor from starving other users. In practice, denial-of-service threats at the application layer are underrated in threat models because they do not produce dramatic breach headlines; they produce slow degradation that costs the business revenue rather than headlines.

The payment service — Elevation of privilege. Can a caller with limited privileges invoke operations they should not reach? The mitigation class is authorization: every endpoint checks the caller's privileges against the required privileges for the operation, the privilege model is centralized rather than re-implemented per-endpoint, and the default behavior on ambiguous authorization decisions is denial. Broken access control is, in OWASP Top 10 rankings, the most frequently reported vulnerability class year after year; the elevation-of-privilege question in STRIDE is where it gets caught at design time.

The output of a 60-90 minute walkthrough on this sample is typically a one-to-two page document: the diagram, a numbered list of threats identified, the mitigation for each, and the owner for any mitigation that is not already in place. That document is the threat model. It is revisited when the design changes, revisited again after pentest or production incidents inform the list, and used as input to the code review checklist during implementation.

PASTA: The Risk-Centric Alternative

PASTA — Process for Attack Simulation and Threat Analysis — is a seven-stage framework that replaces STRIDE's per-component enumeration with a risk-oriented flow from business objectives through threat and vulnerability analysis to risk-weighted countermeasure selection. It is materially heavier than STRIDE and is applied in different circumstances: typically on systems with high risk exposure (payment platforms, authentication infrastructure, identity providers, systems handling restricted data) where a quantitative risk view is worth the effort.

The seven stages run, in order: Define the objectives (business context, compliance drivers, risk tolerance). Define the technical scope (the system boundaries, technologies, dependencies). Application decomposition (data flows, trust boundaries, components). Threat analysis (threat intelligence, likely attacker profiles, historical incidents). Vulnerability analysis (weakness identification, mapping to known CVE classes). Attack modeling (attack trees, attack chains, likelihood scoring). Risk and impact analysis (business impact in dollars or outage hours, risk prioritization, countermeasure selection).

The defining property of PASTA relative to STRIDE is the explicit treatment of business risk as the organizing principle, which puts PASTA closer in spirit to a system-up cybersecurity risk assessment than to a per-component threat enumeration. STRIDE treats all threats roughly equally and relies on the team's judgment to prioritize; PASTA forces an explicit estimation of business impact and attacker capability for each threat and drives countermeasure selection from that estimation. The tradeoff is cost — a PASTA engagement on a large system is measured in weeks, not hours, and typically involves threat intelligence research rather than just design review. The return is that the output is legible to business leadership in a way that STRIDE output is not, which matters when the threat model is feeding a capital investment decision rather than a sprint backlog.

Among threat modeling frameworks, the practical pattern in most 2026 engineering organizations is STRIDE as the default for sprint-scale work by feature teams, with PASTA reserved for the handful of systems where the risk exposure justifies the weight. A team that tries to apply PASTA to every feature will produce threat models too slowly to keep pace with shipping; a team that tries to apply STRIDE to a billion-dollar payment platform will undersell the risk exposure.

LINDDUN for Privacy-First Systems

LINDDUN is the threat modeling framework for systems where privacy threats dominate the risk profile — personal data processing under GDPR, CCPA, health records under HIPAA, or any context where unauthorized linkage or identification of individuals is the primary concern. The acronym expands to: Linkability, Identifiability, Non-repudiation (as a privacy negative, not the security positive), Detectability, Disclosure of information, Unawareness, Non-compliance.

The framing distinguishes LINDDUN from STRIDE in an important way. STRIDE's non-repudiation category treats "the attacker can deny having done something" as a threat; LINDDUN's non-repudiation category treats "the system forces the user into a permanent record they cannot repudiate" as a threat, because privacy regimes explicitly grant users rights to limit the persistence of their data. Linkability — whether two pieces of data can be connected to the same individual — has no direct STRIDE analogue because it is not a traditional security property, but it is a first-class privacy concern. Identifiability — whether anonymized data can be re-identified — has the same character.

LINDDUN is most useful when applied alongside STRIDE on systems that have both security and privacy dimensions. A health-records platform has STRIDE threats (attackers trying to read records they shouldn't) and LINDDUN threats (the system over-collecting data, allowing linkage across records, or preventing user data deletion). Applying either framework in isolation misses half the threat landscape. The 2024 LINDDUN update (LINDDUN GO) tightened the methodology and produced a lightweight card-based variant that makes it easier to integrate alongside STRIDE without doubling the time cost of the session.

How to Run a Threat Modeling Session

The threat modeling session is where the framework becomes an actual team activity. The mechanics that make it work are consistent across frameworks; the goal is to produce a useful threat model in one session rather than aspire to completeness over many.

Scope the session to a feature, not a product. A threat model for "the whole checkout system" will either take a week or be shallow. A threat model for "the new guest-checkout feature" is bounded and completable in 60-90 minutes. Scope the session to a feature or subsystem that is small enough to draw on one whiteboard and discuss in ninety minutes.

Start with the diagram. Draw a data flow diagram showing the components, the data flows between them, the trust boundaries (dashed lines), and the sources of input. The diagram is the shared reference for the rest of the session; a session without a diagram devolves into parallel conversations about different mental models of the system.

Walk the framework per element. For each component, walk STRIDE (or your chosen framework) and ask each question. Record the threats that are real for this component; record the mitigations that exist or are needed. Do not try to identify every possible threat; identify the material threats for this component given the context.

Capture mitigations as owned actions. Every threat that is not already mitigated becomes an owned action — a person, a ticket, a target sprint. Threat models that produce threat lists without owned mitigations decay into compliance artifacts; threat models that produce tracked actions drive real risk reduction.

Include the right people. The feature's engineering lead, at least one other engineer who worked on the design, a security engineer or champion, and — if feasible — a product manager or designer who can speak to abuse cases from the user perspective. Sessions without a security voice tend to miss threats outside the team's usual attention; sessions without engineering voice produce threat models that do not reflect the real design.

Treat the output as a living document. The threat model is revised when the design changes, when new threats emerge (new attack patterns in the wild, new vulnerabilities in dependencies), when incidents inform the threat landscape, or when a pentest produces findings the model missed. A threat model that is not revised is stale within a quarter of material system change.

Threat Modeling Tools Compared

The tooling landscape in 2026 ranges from free open-source drawing tools to enterprise-priced automation platforms. For most engineering teams, the right tool is the simplest one that produces a diagram the team can read and an artifact that can be version-controlled alongside the code. Complexity in tooling often correlates inversely with adoption.

OWASP Threat Dragon. The most adopted open-source threat modeling tool in 2026 and the one to evaluate first. It runs as a desktop app or in the browser, supports STRIDE, LINDDUN, and CIA-based modeling, stores threat models as JSON in a git repo, and produces diagrams that are legible both inside and outside the tool. The owasp threat dragon project is actively maintained and has reached a maturity level that makes it viable for production use across engineering teams. The file-based model-as-code approach plays well with modern engineering workflow — threat models ship with the code they describe, reviewed in pull requests like any other artifact.

Microsoft Threat Modeling Tool. The longest-standing free option, tightly integrated with STRIDE and the Microsoft SDL. Its strength is the built-in threat library — given a diagram, the tool generates a pre-filled list of STRIDE threats for each element based on its type. Its weakness is that it is Windows-only and its file format has not evolved toward the model-as-code conventions. In 2026, most teams that still use the Microsoft Threat Modeling Tool do so because they have existing threat models in that format; new adoption tends to go to Threat Dragon.

IriusRisk. A commercial platform that automates threat modeling by analyzing architecture descriptions, generating threat lists from pattern libraries, mapping them to compliance framework requirements, and integrating with the developer workflow through Jira and CI/CD connectors. The value proposition is scale — organizations with dozens of services and hundreds of features produce threat models too frequently for a manual tool to keep up, and the automation pays for itself. The weakness is the usual weakness of automated analysis: the tool produces a generic threat list that must still be curated against the actual design, and teams that treat the output as the threat model rather than the starting point produce low-quality models.

SD Elements and ThreatModeler. Two commercial alternatives in the same space as IriusRisk, with similar value propositions and similar limitations. The choice among them typically comes down to existing vendor relationships, integration with the organization's issue tracker, and the quality of the threat library for the organization's technology stack.

Automating Threat Modeling in CI/CD

The aspiration to automate threat modeling in the CI/CD pipeline is a recurring theme in secure SDLC conversations, and it is worth being honest about what automation can and cannot do in 2026. Fully automated threat modeling — in the sense of "the pipeline generates the threat model from the code and all the team does is review it" — does not exist at useful quality. What exists is pipeline integration of the outputs and inputs of manual threat modeling: the threat model file is versioned in the repo, changes to it are reviewed in pull requests, threats are tracked as tickets, and mitigations are verified by SAST/DAST/IAST tools in CI.

Model-as-code. The threat model lives in the repo as a JSON or YAML file (Threat Dragon, pytm, threagile all support this pattern). Changes to the design diagram and the threat list go through pull-request review like any other code change. Stale threat models are surfaced by a CI check that warns when the model has not been updated since a design-touching file was last changed.

Threat-to-test mapping. Each threat in the model references the tests that verify the mitigation. A threat "API endpoint X is vulnerable to IDOR" maps to a specific integration test that attempts the IDOR and asserts it is rejected. The CI pipeline runs the tests, and if a test linked to an open threat fails, the build fails. This is where automation actually changes the economics of threat modeling: the model becomes an executable contract rather than a document.

Threat library integration. When a new component type is added to the design (for example, a new NoSQL database, a new third-party auth provider), the threat library produces a starter threat list based on the component type. The team reviews and refines, but does not start from blank. Tools like IriusRisk and threagile support this pattern out of the box; teams using Threat Dragon often build a small internal library of starter templates.

Vulnerability feed integration. The threat model is re-evaluated when a CVE emerges in a dependency referenced in the model. This closes the loop between threat modeling and vulnerability management — the vulnerability scan finding in a dependency automatically surfaces in the threat model of the components that use that dependency, and the team is prompted to re-review.

These integrations are worth the effort because they turn threat modeling from a one-time activity into a property of the codebase. But they do not substitute for the design-phase session with the team. The automation carries the model forward; the humans produce the insight that makes the model useful in the first place.

Threat Models Are Only as Good as the Developers Who Build Them

The framework matters less than the team's fluency with the threats the framework enumerates. A developer who has never built an authentication system will struggle to identify spoofing threats in one; a developer who has never handled cardholder data will struggle to identify information-disclosure threats in a payment flow. SecureCodingHub builds the language-specific, threat-class-specific fluency that makes threat modeling sessions produce real findings rather than performative ones. If you are introducing threat modeling as a team practice and want the developer side to land, we would be glad to show you how our program wires into that work.

See the PlatformCommon Threat Modeling Anti-Patterns

The failure modes of threat modeling programs cluster into a small set of recognizable patterns. Each of them is preventable, but only if the team names the pattern and avoids it deliberately.

The checklist trap. The team runs through STRIDE mechanically, produces a list of threats that reads like a textbook extraction, and marks each one "mitigated by existing controls." The resulting model adds no value because no real analysis happened — the team went through the motions without engaging with the specifics of the design. The recovery: insist that every threat identified has a concrete instance — a specific request, a specific data flow, a specific component interaction — not a generic category label.

The security-team-only model. The threat model is produced by the security team on behalf of the engineering team, without the engineering team in the room. The model is accurate in the sense of correct threat identification but useless in the sense of changing engineering behavior — the engineering team does not internalize the threats, does not take ownership of the mitigations, and returns to the same design patterns that produced the threats. The recovery: the engineering team runs the session with security support, not the other way around.

The documentation artifact. The threat model is produced, filed in a compliance repository, and never referenced again. Six months later, the design has changed substantially and the threat model is stale. Nobody notices because nobody uses the model for anything. The recovery: wire the model into the code review checklist, the design review process, and the ticket backlog so that it is referenced in the work of shipping features rather than reserved for audits.

The scope that swallows the session. The team decides to threat-model "the entire platform" in one session and produces either a two-week effort or a shallow ninety-minute overview. Either outcome is worse than multiple focused sessions on specific subsystems. The recovery: scope threat modeling sessions to individual features or bounded subsystems; run them frequently rather than rarely.

The framework over-commitment. The team picks PASTA because it is the most rigorous framework available and spends six weeks producing a threat model for a three-day feature. The rigor is out of proportion to the risk. The recovery: default to STRIDE for sprint-scale work, reserve PASTA for systems where the risk exposure justifies the weight, and recognize that the right framework is the lightest one that identifies the material threats.

The mitigation that never lands. Threats are identified, mitigations are agreed, owners are assigned — and then the mitigations are never actually implemented because they are deprioritized against feature work. The threat model becomes a record of things the team knows are broken but has not fixed, which is worse than not having a threat model because it creates explicit knowledge of unmitigated risk. The recovery: make mitigation work visible in the engineering backlog with the same status tracking as feature work, and protect a fraction of sprint capacity for it.

Threat Modeling and the Developer Training Question

The quality of threat modeling output correlates directly with the security fluency of the developers in the room. A team that has not been trained in the vulnerability classes STRIDE enumerates will produce shallow threat models regardless of how well the framework is applied. This is the connection point between threat modeling as a practice and developer training as an investment.

The training that matters for threat modeling is not generic security awareness. It is language-specific and threat-class-specific — the developer needs to recognize what an injection vulnerability looks like in their framework, what an IDOR vulnerability looks like in their API layer, what a cryptographic misuse looks like in their stack. Generic training that teaches "watch out for injection" produces developers who know to worry about injection in the abstract but cannot identify specific injection patterns in their own code at design time. For teams operating under PCI DSS, the specificity requirement is formalized in Requirement 6.2.2, which prescribes language-specific, role-relevant training; teams outside of PCI scope benefit from the same discipline as a productivity investment rather than a compliance obligation.

The second connection point is code review. A threat model that is produced but not enforced during code review produces no risk reduction. The review discipline that enforces threat model findings — structured secure code review with a checklist tied to the organization's threat model — is where the design-phase insight becomes implementation-phase behavior. Teams that skip this connection get threat models that identify the right threats but code that implements them anyway.

Closing: Threat Modeling Is the Cheapest Bug Prevention You Can Buy

The economic case for threat modeling is not contested in the security literature and has not been for a decade. A vulnerability identified at design time costs a fraction of what the same vulnerability costs post-deployment, and the ratio is large — order-of-magnitude rather than marginal. The reason organizations nonetheless underinvest in threat modeling is not disagreement with the economics; it is that the activity requires focused human attention at a point in the lifecycle when the pressure is to ship, not to analyze. The same design review session that costs a feature team ninety minutes prevents bugs that would cost the same team days of remediation later, but the ninety minutes is felt immediately and the avoided days are hypothetical.

The organizations that build durable threat modeling practice share a small set of habits: they scope sessions to features rather than products, they use STRIDE as the default and reserve heavier frameworks for high-risk systems, they include engineers rather than just security specialists, they treat the threat model as a living artifact rather than a compliance deliverable, and they wire the output into code review so that the design-phase insight reaches the implementation. The tools matter less than any of these habits. OWASP Threat Dragon and a markdown file in the repo can support a world-class threat modeling practice; IriusRisk and a full commercial tooling stack can support a performative one.

The thing to get right first is not which framework, which tool, or which automation pipeline. It is the institutional habit of asking, before implementation begins, what could go wrong with this design. That habit — applied consistently, supported by developers who can recognize the threats when they appear, and enforced in the review discipline that comes next — is what prevents the vulnerabilities that would otherwise end up in the next bug bounty report, pentest finding, or post-incident review. Every other activity in the secure software development lifecycle is either cheaper because threat modeling caught the issue early, or more expensive because it did not.