Code review has long been considered a quality gate for catching logic errors and enforcing style conventions. But for security teams, it's one of the most cost-effective methods for identifying vulnerabilities before they ever reach production. The challenge is doing it consistently, efficiently, and at scale.

Most organizations treat security and code review as separate processes. Developers review each other's code for functionality and readability, while security teams run automated scans on a separate cadence. This disconnect means security issues slip through — not because nobody looked, but because nobody looked for the right things.

Why Security-Focused Code Review Matters

Static analysis tools are valuable, but they produce noise. They catch patterns — hard-coded credentials, known vulnerable function calls, missing input validation — but they miss business logic flaws, authorization gaps, and subtle injection vectors that depend on context.

Human reviewers bring context awareness that tools lack. A reviewer who understands the application's data flow can spot an IDOR vulnerability that no scanner would flag. They can identify race conditions in checkout flows, privilege escalation paths through indirect object references, and authentication bypasses hidden in edge-case handling.
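To make the IDOR case concrete, here is a minimal sketch of what a reviewer is looking for. The `invoices` map, the `ownerId` field, and both function names are hypothetical stand-ins for a real data layer, not code from any particular application.

```javascript
// Hypothetical in-memory data standing in for a database table.
const invoices = new Map([
  [101, { id: 101, ownerId: 'alice', total: 250 }],
  [102, { id: 102, ownerId: 'bob', total: 990 }],
]);

// Vulnerable: any authenticated user can read any invoice by guessing its ID.
// The caller's identity is accepted but never consulted.
function getInvoiceVulnerable(currentUser, invoiceId) {
  return invoices.get(invoiceId) || null;
}

// Safer: the lookup also checks that the record belongs to the caller.
// Returning null for both "missing" and "not yours" avoids confirming
// which IDs exist.
function getInvoice(currentUser, invoiceId) {
  const invoice = invoices.get(invoiceId);
  if (!invoice || invoice.ownerId !== currentUser.id) {
    return null;
  }
  return invoice;
}
```

Both versions are syntactically clean and would likely pass a pattern-based scan. The difference only shows up to a reader who asks who is allowed to see which record.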

Building a Security Review Checklist

The biggest mistake teams make is asking reviewers to "look for security issues" without guidance. Without a concrete checklist, reviews become inconsistent. One reviewer might focus on SQL injection while ignoring authorization, and another might check headers but miss deserialization risks.

An effective security review checklist covers these critical areas:

- Authentication and authorization — Does every endpoint verify the user's identity? Are role-based access controls enforced at the API layer, not just the UI?

- Input validation and output encoding — Is user input validated on the server side? Is output properly encoded for its context (HTML, SQL, JSON, shell)?

- Data exposure — Are API responses returning only the fields the client needs? Are sensitive fields like passwords, tokens, or internal IDs being leaked?

- Error handling — Do error responses avoid leaking stack traces, database schemas, or file paths? Are errors handled gracefully without fail-open conditions?

- Cryptography — Are secrets stored securely? Are deprecated algorithms (MD5, SHA1 for passwords, ECB mode) being used where modern alternatives exist?
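A few of the checklist items above can be illustrated with small helpers. This is a sketch under stated assumptions: the function names and the `displayName` field are hypothetical, the scrypt parameters are Node.js defaults rather than a tuned recommendation, and the HTML escaper only covers element-content and quoted-attribute contexts.

```javascript
const crypto = require('crypto');

// Data exposure: build responses from an allowlist of fields instead of
// returning the stored record as-is.
function toPublicUser(user) {
  return { id: user.id, displayName: user.displayName };
}

// Output encoding: escape untrusted text before placing it in HTML.
// Other contexts (URLs, SQL, shell) need their own encoders.
function escapeHtml(text) {
  const replacements = {
    '&': '&amp;', '<': '&lt;', '>': '&gt;', '"': '&quot;', "'": '&#39;',
  };
  return String(text).replace(/[&<>"']/g, (ch) => replacements[ch]);
}

// Error handling: if the permission lookup throws, deny access (fail closed).
// Only an explicit `true` from the check grants access.
function isAllowed(checkPermission, user, action) {
  try {
    return checkPermission(user, action) === true;
  } catch (err) {
    return false;
  }
}

// Cryptography: a salted scrypt hash rather than MD5 or SHA-1.
function hashPassword(password) {
  const salt = crypto.randomBytes(16);
  const hash = crypto.scryptSync(password, salt, 64);
  return `${salt.toString('hex')}:${hash.toString('hex')}`;
}

// Recomputes the hash with the stored salt and compares in constant time.
function verifyPassword(password, stored) {
  const [saltHex, hashHex] = stored.split(':');
  const expected = Buffer.from(hashHex, 'hex');
  const actual = crypto.scryptSync(password, Buffer.from(saltHex, 'hex'), expected.length);
  return crypto.timingSafeEqual(actual, expected);
}
```

In a review, the useful question is less whether helpers like these exist and more whether every code path actually goes through them.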

The Two-Phase Approach

We've found that the most effective secure code review follows a two-phase model, and it's the same model we use in SecureCodingHub's practice challenges. For the foundational framing of what "secure code" actually means in this context, see our guide on what secure code is, with a definition and examples.

Phase 1: Identify the vulnerability

Before jumping to fixes, train your reviewers to find the issue. Read the code as an attacker would. What inputs does this function accept? Where does the data flow? What assumptions is the developer making about trust boundaries?

Phase 2: Verify the fix

Once a vulnerability is identified, the fix matters as much as the finding. A parameterized query prevents SQL injection — but did the developer also add input length validation? Did they escape for the right context? Is the fix applied consistently across similar patterns in the codebase?

Consider this common example. A developer writes a database query that's vulnerable to SQL injection:

```javascript
// Vulnerable: user input directly interpolated into query
const query = `SELECT * FROM users WHERE email = '${req.body.email}'`;
const result = await db.execute(query);
```

```javascript
// Secure: parameterized query separates code from data
const query = 'SELECT * FROM users WHERE email = ?';
const result = await db.execute(query, [req.body.email]);
```

In a review, catching the interpolation is Phase 1. Confirming the parameterized version is correct, and checking that the same pattern isn't repeated elsewhere, is Phase 2.
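The "repeated elsewhere" part of Phase 2 can be partly supported by a quick search. The following is a rough sketch, not a replacement for SAST: `findInterpolatedSql` is a hypothetical helper, and its regular expression is a single-line heuristic that will miss string concatenation and multi-line queries and may flag safe code.

```javascript
// Heuristic: a backtick template literal that starts with a SQL keyword and
// contains a ${...} interpolation on the same line.
const INTERPOLATED_SQL = /`\s*(?:SELECT|INSERT|UPDATE|DELETE)\b[^`]*\$\{[^`]*`/i;

// Returns the 1-based line numbers in `source` that match the heuristic.
function findInterpolatedSql(source) {
  const hits = [];
  source.split('\n').forEach((line, index) => {
    if (INTERPOLATED_SQL.test(line)) {
      hits.push(index + 1);
    }
  });
  return hits;
}

// Usage: only the first line interpolates input into SQL text.
const sample = [
  "const a = `SELECT * FROM users WHERE email = '${email}'`;",
  "const b = 'SELECT * FROM users WHERE email = ?';",
  "const c = `Hello ${name}`;",
].join('\n');

console.log(findInterpolatedSql(sample)); // [ 1 ]
```

Each hit still needs a human to confirm whether the interpolated value is attacker-controlled, which is the same judgment Phase 1 asks for.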

Scaling Secure Reviews Across Large Teams

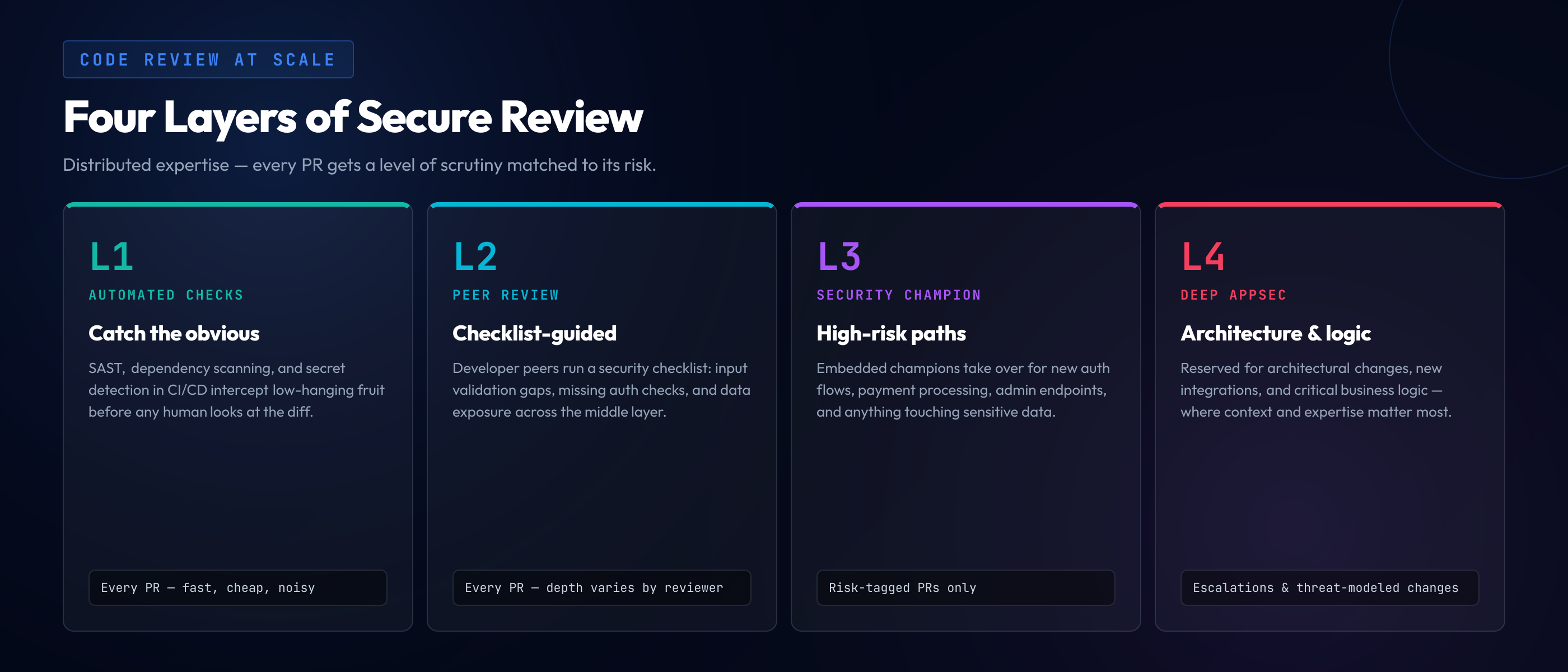

For organizations with hundreds of developers, not every pull request can receive a deep security review from a dedicated AppSec engineer. The math doesn't work.

"Security expertise should be distributed, not centralized. Every team should have someone who can catch the common 80% — and know when to escalate the remaining 20%."

Instead, scale through layers:

- Automated checks as the first gate — SAST, dependency scanning, and secret detection in CI/CD catch the low-hanging fruit.

- Developer peer review with a security checklist catches the middle layer — input validation gaps, missing auth checks, and data exposure.

- Security champion review for high-risk changes — new auth flows, payment processing, admin endpoints, and anything touching sensitive data.

- Deep AppSec review reserved for architectural changes, new integrations, and critical business logic — the changes where context and expertise matter most.
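One way to apply these layers is to route each pull request by the files it touches. The sketch below assumes a hypothetical repository layout; the path patterns, the tier names, and the `reviewTier` function are illustrative, and a real team would tune them to its own codebase and risk model.

```javascript
// Hypothetical path patterns; a real team would tune these to its own repo.
const HIGH_RISK = [/(^|\/)auth\//, /(^|\/)payments\//, /(^|\/)admin\//];
const ARCHITECTURAL = [/(^|\/)migrations\//, /(^|\/)integrations\//];

// Picks the deepest review layer that any changed file calls for.
// Automated checks are assumed to run on every pull request regardless.
function reviewTier(changedFiles) {
  const matchesAny = (patterns) =>
    changedFiles.some((file) => patterns.some((re) => re.test(file)));

  if (matchesAny(ARCHITECTURAL)) return 'appsec-review';
  if (matchesAny(HIGH_RISK)) return 'security-champion';
  return 'peer-review';
}
```

Path matching is a coarse signal. It works best as a default that reviewers can override when a change looks riskier than its file list suggests.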

Making It Stick

The hardest part isn't building the process; it's maintaining it. Security review quality degrades when developers don't understand why they're checking for specific issues. A checklist without context becomes a checkbox exercise. The cost of a single review miss can be enormous when the code path is privileged. The March 2021 PHP git.php.net backdoor incident is a useful case study: two attacker-pushed commits planted a hidden RCE behind a check on a misspelled User-Agentt request header, in a language estimated to run the server side of more than 70% of websites. The chain held until a third reviewer noticed the commits had not gone through the project's normal review path.

This is where hands-on training makes a difference. When a developer has personally exploited a SQL injection in a practice environment, they recognize the pattern in code review without needing a checklist reminder. When they've walked through an IDOR scenario step by step, they instinctively check authorization logic in pull requests. That reflex matters even more when the diff was generated by Copilot or Cursor — the specific patterns to scan for in AI output are covered in our code security training checklist for AI-generated code.

The most secure codebases aren't built by security teams — they're built by developers who've internalized security thinking into their daily workflow. Code review is where that thinking gets reinforced, one pull request at a time.