Most pull requests in 2026 contain code no human wrote from scratch. Copilot, Cursor, Claude Code, Codeium, Windsurf — some combination of these tools produced at least part of the diff in front of you. The hard truth that the industry is slowly internalizing is that reviewing AI-generated code is not the same activity as reviewing human-written code, and the review habits most teams built over the last decade are not calibrated for the new failure modes. This post is a concrete, category-by-category checklist for how to run security review on AI-generated code — what to look for, where the models systematically fail, and how to structure code security training so that every engineer on your team can run this checklist in a pull request review without thinking about it.

Why AI-Generated Code Needs a Different Review Lens

A 2023 Stanford study found that developers using AI coding assistants produced less secure code than developers without them — and crucially, reported higher confidence in the security of what they shipped. That result has been replicated in subsequent research through 2024 and 2025 in different forms. The pattern is consistent: the output looks right, the model sounds authoritative, the developer's threat-detection reflex is quieter than it would have been if they had typed the same lines themselves. The productivity gain is real. So is the security regression.

The reason this happens has nothing to do with the models being malicious or broken. It has to do with what LLMs actually optimize for. A code generation model is trained to produce output that statistically resembles the training distribution of "code that solves the requested problem". Security is a property that distinguishes code solving the exact same problem in two different ways, and it is underrepresented in the training distribution because most public code on GitHub is not written with security rigor in mind. The model happily emits a SQL query built with string concatenation because it has seen that pattern ten thousand times in tutorials and answers. It does not know — in the sense of having the security context as a load-bearing part of its reasoning — that the same query written with parameters is materially safer.

This creates a review situation that is qualitatively different from reviewing a teammate's code. A human teammate writing string-concatenated SQL typically has one of three mental states: they are junior and have not learned the lesson, they are experienced and have a reason they will explain in the PR, or they have made a mistake. The reviewer's job is to identify which case it is. An LLM writing string-concatenated SQL has none of those mental states. It pattern-matched to an output distribution, and the output was fluent. There is no "reason they will explain", no "they will learn", no internal model to correct. The code is just what the model generated, and the review has to catch everything every time, because the model will do it again next time.

That shift is what makes systematic code security training central to an AI-accelerated engineering organization. It is no longer enough for a few senior engineers to be the ones who catch issues during review. Every engineer shipping AI-generated code is the last line of defense on that specific diff, and the pace of generation means the volume of code flowing through review is higher than any review bottleneck can handle if the checking has to be done by a handful of security-aware reviewers.

What Actually Changes When an LLM Wrote the Code

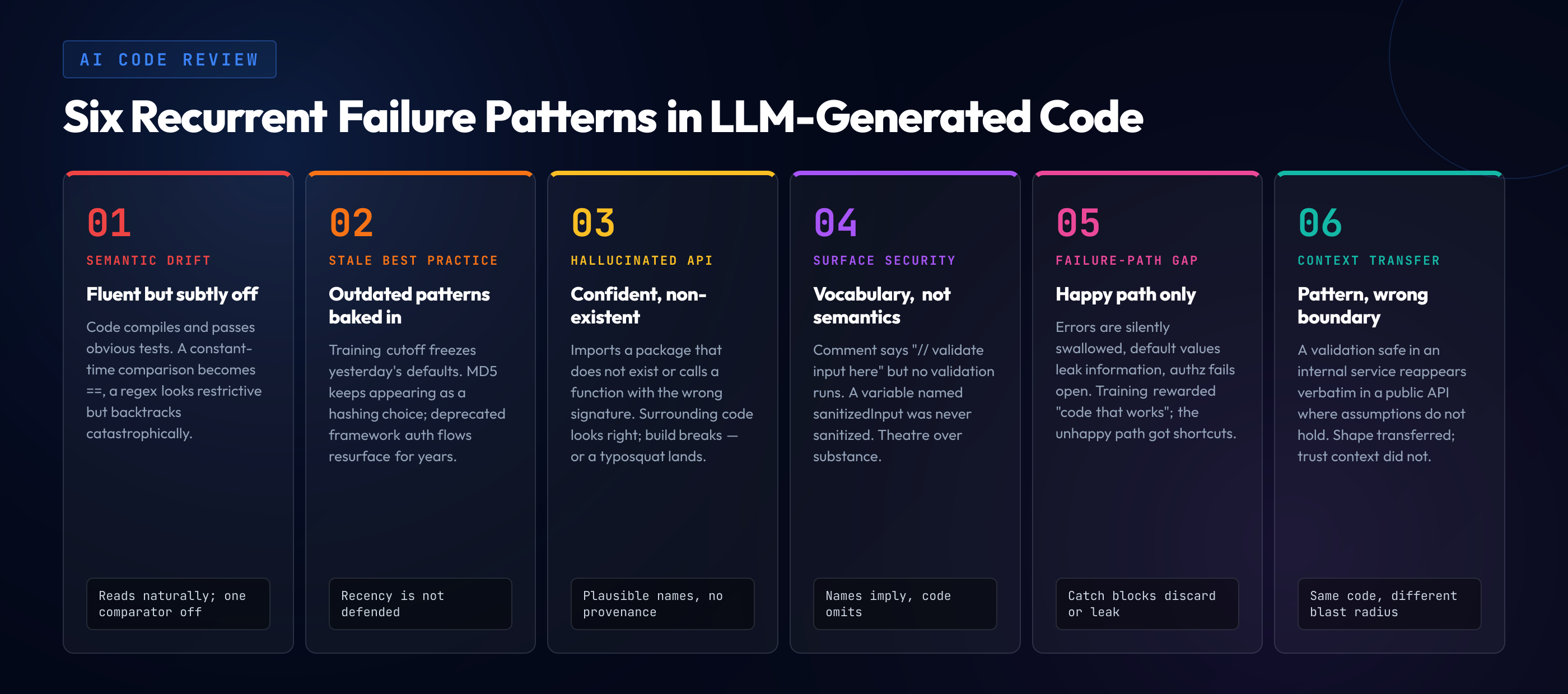

Before the checklist, it helps to name the recurrent failure patterns that AI-generated code exhibits. These are the patterns you will see again and again once you know to look for them. Each one is frequent enough to deserve dedicated attention in the review flow.

Surface fluency, semantic drift. The code compiles, runs, and passes obvious test cases. It does the right thing for the prompt. It also contains a subtle deviation from the secure version of the same logic — a comparison that uses `==` instead of a constant-time equality check, a validation that checks for the wrong sentinel value, a regex that looks restrictive but has a catastrophic backtracking case. These are the hardest issues to catch because the code reads fluently.

Outdated best practices baked in. Training data has a cutoff. A model trained primarily on code written before a specific library changed its default authentication mechanism will keep generating the old pattern for months or years after the ecosystem moved on. A model that learned MD5 from a million tutorials will keep offering MD5 as a hashing choice unless specifically redirected, even though the rest of the ecosystem has long since moved on. Recency is not a property the model defends.

Confident hallucination of APIs, packages, and functions. The model generates an import for a package that does not exist, a call to a function with the right name but wrong signature, or a configuration flag that was removed in the current version of the framework. If the surrounding code looks right, the hallucination passes unnoticed until the build breaks — or worse, until an attacker registers the hallucinated package name and waits.

Security considerations only at the surface. The model happily adds a comment saying `// Validate input here` but does not actually validate the input. It generates a variable named `sanitizedInput` that has not been sanitized. The security vocabulary is present without the security semantics.

Missing failure-path handling. The happy path is usually correct. The error path frequently silently swallows exceptions, returns default values that leak information, or fails open. LLMs prefer generating code that "works" — error handling is terrain where they take shortcuts.

Copy-paste shape from one context to another. The model applies a pattern that is appropriate in one trust boundary to a different trust boundary where the assumptions do not hold. A validation approach safe in an internal service gets reproduced in a public API where it is insufficient. The shape transferred; the security context did not.

Each of the checklist categories below is designed around these failure patterns. When you scan a pull request, you are not looking for every bug — you are looking for these specific patterns.

Checklist Part 1 — Injection and Input Handling

Injection flaws are the single highest-frequency failure category in LLM-generated code. Every category of injection — SQL, NoSQL, OS command, LDAP, XPath, template, prompt — shows up in model output regularly enough that the review checklist opens here. Work through every line in the diff that touches untrusted input and ask the specific questions below.

SQL and database queries. Does every query use the database driver's parameter binding? Look for string concatenation into SQL (`"SELECT * FROM users WHERE id = " + userId`), template literals with interpolated variables, and string formatting operators feeding into a query string. These patterns are never correct in AI-generated code. The fix is always to use placeholders and pass values as parameters. Also check for query fragments being built conditionally — a `WHERE` clause assembled from user-controlled conditions often loses the parameterization boundary somewhere in the middle.

OS command execution. Any call to `exec`, `spawn`, `system`, `Runtime.exec`, `subprocess.run` with `shell=True`, or the language-specific equivalents needs to be scrutinized. The model will often produce a shell string with interpolated user input. The fix is to pass arguments as an array and avoid the shell where possible. If the shell is required, every interpolated value needs to be validated against a strict allowlist, not "sanitized" by stripping characters.

Path handling and file access. User-supplied paths get joined with trusted base directories. The model frequently produces `path.join(baseDir, userInput)` without checking that the result stays within `baseDir`. This is the path traversal pattern. Every such join needs a `realpath` or equivalent normalization followed by a prefix check against the allowed base.

Template rendering. If the framework supports both auto-escaped and raw template rendering (Jinja2's ``, React's `dangerouslySetInnerHTML`, similar constructs), the model tends to reach for the raw variant when the quoting gets awkward. Flag any use of the raw rendering primitive and ask whether the data flowing in is genuinely trusted.

Prompt injection, if the product uses LLMs. If the code in the diff passes user-controlled strings into another LLM's prompt — or into a system prompt, or into tool descriptions, or into MCP context — the failure category is prompt injection. See the MCP Security post for the full threat model; for review purposes, ensure that user input into any prompt-adjacent position is either explicitly delimited, treated as untrusted throughout the prompt context, or not passed at all.

Regex validation. An input validation regex the model produced needs two checks. First, does it actually reject the malicious input it is supposed to reject? Models often generate permissive patterns. Second, does it have catastrophic backtracking? A `^(a+)+$`-shaped pattern can hang the process on specially crafted input. Regex-based validation is brittle enough that where there is a parser (URL, email, date), prefer parsing over regex.

Checklist Part 2 — Authentication and Authorization

After injection, auth is the second most frequent failure cluster. The pattern is that the model produces code that implements authentication in a way that looks right and authorization checks that are either missing, misplaced, or too narrow.

Authentication flow integrity. If the diff contains a login flow, password reset flow, MFA handling, or token issuance, treat every line as suspect. Models produce subtly broken versions of these flows — comparing password hashes with `==` instead of constant-time comparison, storing tokens in places they can be stolen (localStorage for sensitive session tokens, predictable cookies), returning different error messages for "user not found" and "wrong password" in ways that enable enumeration. The review question: is there a reason to write this flow from scratch, or should it delegate to a battle-tested library or identity provider?

Authorization checks at every boundary. Every route handler, every mutation, every access to a resource that belongs to a user needs an explicit authorization check. The model is notorious for generating handlers that check "is there a session" (authentication) but not "does this session's user actually own this resource" (authorization). Walk every controller or handler in the diff and, for each one, ask: who can invoke this? What identity does the code check against what resource?

Broken object level authorization (IDOR). A classic AI-generated pattern is `db.findById(req.params.id)` with no check that `req.params.id` belongs to the current user. This is the OWASP A01 category, still the most-reported class in real-world findings. Every lookup by a user-controlled identifier needs an ownership check.

Privilege escalation surfaces. Endpoints that change user roles, permissions, or organization membership need explicit privilege checks on the invoker. Models often generate these endpoints as if they were normal update operations, checking only that the caller is authenticated, not that they have the privilege to change the target role.

JWT and token handling. If the code issues or consumes JWTs, verify that signature verification is explicit, the algorithm is pinned (preventing the `alg: none` attack), the audience and issuer are checked, and expiration is enforced. Model-generated JWT code routinely ships with signature verification disabled or permissive.

Session management. New session creation after authentication (to prevent session fixation), secure and httponly cookie flags in production, reasonable session lifetimes, and explicit session termination on logout. These are patterns that battle-tested frameworks handle correctly if configured — the failure is usually in custom auth code the model produced because the prompt asked for "session handling".

Checklist Part 3 — Cryptography and Secrets

Cryptography is a category where model output is confidently wrong. The model will emit crypto code that looks authoritative, uses recognizable APIs, and is substantively broken. This is the single category where deferring to well-known, reviewed libraries pays the highest dividend — and where the model's tendency to recreate primitives from scratch causes the most damage.

Deprecated algorithms. MD5 and SHA-1 for anything security-sensitive (password hashing, integrity verification) are immediate flags. DES, 3DES, RC4 for encryption are immediate flags. Unpadded RSA, ECB mode for block ciphers, CBC without integrity protection — flag and redirect to an AEAD construction (AES-GCM, ChaCha20-Poly1305).

Password hashing. The only acceptable password hashing primitives are bcrypt, scrypt, argon2 (argon2id preferred), or PBKDF2 with high iteration counts. Hashing passwords with SHA-256 — even salted — is a frequent AI-generated mistake because the model has seen "hash the password" patterns in the training data. The correct primitive depends on slowness and memory hardness, not on being a hash function.

Random number generation. Cryptographic randomness comes from the language's dedicated CSPRNG (`crypto.randomBytes`, `secrets.token_bytes`, `SecureRandom`). Use of `Math.random`, `rand()`, or language-level PRNGs for tokens, session IDs, or cryptographic nonces is a bug. Models sometimes emit these interchangeably.

Hardcoded secrets. API keys, database passwords, JWT signing secrets, private keys embedded in source. Searchable by pattern in review, catchable by scanners in CI, and still one of the most common AI-generated issues when the prompt includes example values.

Key management. Keys loaded from environment variables in a way that is consistent with the deployment's secret management. Keys with appropriate scope and rotation policy. No key material ever logged. The model will cheerfully log a full request dump that includes the bearer token.

TLS and transport. Client code that disables certificate verification (`verify=False`, `rejectUnauthorized: false`, `TrustManager` accepting all) to make a local test work, then ships to production with the setting intact. Every `verify` or equivalent flag in the diff needs justification.

Checklist Part 4 — Dependencies and Supply Chain

Supply chain risks in AI-generated code have two flavors. The first is the classic: the model pulls in a dependency with known vulnerabilities. The second is newer and more dangerous: the model hallucinates a package name that does not exist, an attacker registers the name, and the next time the code is installed, malicious code lands in the build.

Hallucinated package names. Before a pull request merges, every new dependency added to the manifest has to be verified to actually exist, actually correspond to what the code imports, and actually be the package the team intends to use. The failure mode is that a model generates `import { foo } from "some-utility-lib"` and adds `"some-utility-lib"` to `package.json`. The package may not exist — or worse, someone may have registered it as a typosquat after a prior model generated the same hallucinated name. Every new dependency row gets inspected.

Version pinning. The model tends to emit version ranges that allow automatic upgrades (`^x.y.z`, `~x.y.z`). For security-critical packages, lockfile pinning is the minimum bar, and for high-risk direct dependencies, exact version pinning with explicit update cadence is safer. The decision depends on the team's supply chain posture, but the model's default tends to be more permissive than most teams should accept.

Known vulnerabilities. SCA tooling in CI catches dependencies with known CVEs, but the feedback arrives after the PR is open. The review should cross-reference any new dependency version against the SCA output and treat a high-severity finding as a merge blocker, not a "fix in follow-up".

Trust provenance. For packages outside the well-known ecosystem — niche libraries, small-maintainer packages, packages with recent ownership changes — the review should ask whether the package's provenance is acceptable. A package published two weeks ago by a maintainer with no other published work is not the same risk as a mature package from an established organization.

Direct imports of transitive dependencies. Models sometimes import directly from a transitive dependency because they saw the export in training data, bypassing the intended API of the parent package. This creates a brittle coupling to the transitive's internal structure. Flag and redirect to the parent package's public API.

Checklist Part 5 — Error Handling, Logging, and Information Disclosure

The failure path is where AI-generated code is weakest. The happy path has been optimized heavily in training; the unhappy path has received far less attention. Three recurrent failure patterns in this category.

Exception handlers that swallow information. A `try/catch` block that catches a broad exception type, logs the exception, and returns a generic error response is usually correct. A `try/catch` that catches and silently discards, or returns the exception message verbatim to the client, is not. The model produces both patterns interchangeably. Silent discard masks real failures. Verbatim return leaks stack traces, SQL query text, internal path names, or version identifiers to an attacker who probes with malformed input.

Logs that contain sensitive data. Request body logging that includes passwords and tokens, error logging that dumps full user objects including hashed passwords, access logs that include session IDs in URLs. The review has to read every log statement and ask whether the data being logged is safe to land in a system where log access is broader than database access — which, in most organizations, it is.

Fail-open authorization. A common pattern: the authorization check throws an exception on an unexpected condition, the exception is caught by a generic handler up the stack, and the request proceeds as if the check succeeded. The correct pattern is to fail closed — if the authorization decision cannot be made confidently, the request is denied. Models do not consistently produce fail-closed code because the happy-path optimization biases toward continuing execution.

Rate limiting and abuse controls. Authentication endpoints, password reset endpoints, any endpoint that sends email or SMS, any endpoint that performs an expensive operation — all need rate limits. The model does not add these unless the prompt specifically asked for them. Review every endpoint that could be abused and confirm the rate limiting is present.

Information leakage in responses. Different HTTP status codes or response shapes for "user exists" versus "user does not exist" on login or password reset endpoints. Verbose error messages that reveal validation logic, allowing attackers to refine their payloads. Debug mode left on in production code paths.

- Injection: Every query parameterized. Every shell call arg-array or shell-free.

- Path traversal: Every user path joined with a base is normalized and prefix-checked.

- Template rendering: No raw-rendering primitives on user data.

- Prompt injection: User strings into LLM prompts are delimited and treated as untrusted.

- Regex: No catastrophic backtracking. Parsers preferred over regex where available.

- Auth flow: Login / reset / MFA delegated to a library or scrutinized line-by-line.

- AuthZ checks: Every handler verifies the session identity against the target resource.

- IDOR: Every `findById(user_controlled_id)` followed by an ownership check.

- JWT: Signature verified, algorithm pinned, audience and issuer checked, expiry enforced.

- Sessions: Rotated after login, secure+httponly in prod, explicit termination on logout.

- Crypto primitives: No MD5, SHA-1, DES, 3DES, RC4, ECB, unpadded RSA.

- Password hashing: bcrypt, scrypt, argon2, or PBKDF2 — never raw SHA-*.

- CSPRNG: Crypto-grade random for tokens, nonces, session IDs.

- Secrets: No hardcoded keys. No keys in logs.

- TLS: No `verify=False` or cert-bypass flags in production paths.

- Dependencies: Every new package exists, is the intended package, and has no known CVEs.

- Version pinning: Lockfile present; exact pins on high-risk direct deps.

- Transitive imports: No direct imports from transitive packages.

- Exception handlers: Neither swallow silently nor leak exception details to clients.

- Logs: No passwords, tokens, full user objects, or session IDs in log output.

- Fail-closed authz: Unexpected conditions deny, not allow.

- Rate limiting: Auth, password reset, email/SMS, expensive endpoints protected.

- Info disclosure: Uniform responses for user-exists vs not-exists.

- Hallucinated APIs: Every imported function/flag exists in the actual library version.

- Comment vs reality: Every `// validate here` or `sanitizedInput` actually validates or sanitizes.

Running This Checklist in PR Review Practice

A 25-point checklist run manually on every pull request would add so much time to the review that it would not survive contact with an engineering org's weekly delivery cadence. The practical implementation has to combine automation (catching what CI can catch), reviewer focus (catching the patterns tools miss), and training (so every reviewer can do both).

What CI should catch before the PR is human-reviewed. SAST rules covering the injection families (SQL, command, path traversal, template), secret scanning, SCA for known CVEs in dependencies, a hallucinated-package check (comparing the manifest against known registries), regex complexity analysis, JWT misconfiguration patterns. Most of these rules exist in common SAST and linting toolchains — the work is to turn them on, tune the false positive rate to something tolerable, and gate merges on them. By the time a human reviewer sees the PR, the tool-catchable issues should already be flagged or fixed.

What the reviewer has to read for. The patterns that require human judgment — authorization checks tied to business logic (no tool knows what "ownership" means in your domain), fail-open error handling, subtle auth flow issues, information leakage, prompt injection in LLM-integrated code. These are the categories where the reviewer's attention has to be focused, and where training pays off. Every engineer should be able to read a route handler and ask "who should be allowed to call this and what does the code check?" without pausing to look up the question.

The per-PR ritual. Before approving a PR that contains AI-generated code, the reviewer does three passes. First pass: read the diff end-to-end to understand the intended change. Second pass: for every modified handler, route, query, or crypto call, walk the checklist categories that apply to that line. Third pass: check the CI output and confirm no security-relevant warnings were dismissed or suppressed. The second pass is where the checklist lives — it is the habit that training needs to build.

What an author of an AI-generated PR should do before opening it. Run the same checklist on their own changes first. Code security is a reviewer responsibility, but not only a reviewer responsibility — every author self-reviewing their diff before pushing catches a disproportionate share of the issues that would otherwise land on the reviewer's desk. Self-review against this checklist takes five to ten minutes for a typical PR and substantially reduces review-cycle time.

What This Means for Team-Level Code Security Training

A 25-point checklist is only useful if every engineer on the team can run it without looking it up. The question for engineering leaders is how to get the team to that level, quickly, as the volume of AI-generated code continues to climb. The answer — at a scale that holds up under a 2026 engineering workload — is a structured code security training program that builds the habit rather than a checklist posted on a wiki that nobody rereads after their first week.

The properties of such a program are specific. Generic annual security training videos do not produce the kind of fast-pattern-recognition reviewers need to catch AI-generated issues at PR velocity. A program that works has four properties that directly map to the failure modes the checklist addresses.

Language-specific content. A Node.js engineer reviewing Node.js code needs to recognize the Node.js-specific patterns — `req.params.id` straight into a Mongoose query, `Math.random()` for session tokens, `eval` on user input, `jsonwebtoken` used without explicit algorithm pinning. Each language has a distinct vocabulary of bad AI-generated patterns. A review training that teaches patterns in a different language is half-training at best.

Hands-on practice, not passive consumption. A developer who has never identified a subtle SQL injection in a code review will not reliably identify one in production no matter how many slides they have seen. The training needs a format where the engineer actively classifies vulnerable code, diffs, or reviews — producing judgments, not just watching. Interactive challenge-based platforms designed around the reviewer's mental workflow are the right shape for this. Passive video-based secure coding curricula do not transfer to the pattern-recognition task the checklist requires.

Aligned to the team's actual stack and actual AI tools. A training program that covers Java web frameworks for a team that ships Node.js microservices is a waste of the engineers' time. Similarly, a program that covers AI tools in the abstract when the team uses Copilot daily is missing the point. The program has to be calibrated to what the team actually ships.

Continuously refreshed as models evolve. Models' failure patterns shift over time. Older models had specific pathologies around regex; newer models have shifted to different failure modes. A code security training program that was calibrated in 2023 does not teach what to look for in 2026 AI output. Annual refresh is the minimum; quarterly updates are better for teams where AI output volume is highest.

Organizations that have invested in this kind of training through 2024 and 2025 are visibly ahead in the 2026 cycle. The engineers on those teams can read AI output fluently and flag issues in real time — a capability that compounds, because every PR they review teaches the authors to write cleaner diffs next time. Organizations that skipped the training and relied on "we will catch issues in code review" without equipping the reviewers to do so are discovering that the review bottleneck does not scale, and that the issues that slip through accumulate into incident debt that is expensive to work down.

Closing: Reviews Are a Training System, Not Just a Quality Gate

The framing that most reviews suffer from is "review is where we catch bugs". That framing works when code volume is linear in headcount and when the people writing the code are also the people holding the context for what the code is supposed to protect. Neither assumption holds in an AI-accelerated engineering org. Volume is super-linear. The code's immediate author — the LLM — holds no security context at all. The human in the loop is the reviewer, and the reviewer's competence is what ultimately determines whether the org ships safe code at the speed the AI tools make possible.

Reframing review as a training system changes the practice. Every catch in review is a teaching moment — for the PR author, for the rest of the team who sees the comment, for the future AI-generated diff that looks similar. Every near-miss is a signal about where the team's pattern-recognition is weakest and where the next training update should focus. Every checklist-guided PR review is a rep, and the reps compound. Teams that take this framing seriously end up with engineers who are fluent in reading AI output the way an experienced editor is fluent in reading raw draft text — catching the slips without conscious effort, because the pattern is familiar.

The 25-point checklist above is not the final word. The threat surface will keep shifting as models evolve, as new attack classes emerge, as frameworks change their defaults. What is durable is the habit of reviewing AI-generated code with a different lens than human-written code, and the discipline of investing in the team's code security training so that the lens is in every engineer's reflexive toolkit, not a specialization locked to a handful of security engineers. That investment is the difference, in 2026, between an engineering organization that captures the AI productivity gain safely and one that discovers in production — during an incident response — what the AI generated that nobody caught.

If you are the person responsible for your team's code security training posture heading into the rest of 2026, the action items are straightforward. Run this checklist against your last ten merged PRs and count how many issues it would have caught that were missed. That count is your baseline. From there, the work is to invest in the training, tooling, and review practice that brings the number to zero — and to keep it at zero as the model output landscape changes around you. SecureCodingHub was built around exactly this problem. The pattern library in our platform is how teams train the reflex the checklist depends on.