GitHub says Copilot makes developers fifty-five percent faster. A Stanford study says developers who use AI assistants write measurably less secure code while feeling more confident about it. Both findings can be true at once. That tension is the quiet crisis running through application security in 2026, and most engineering teams are handling it badly.

The AI Coding Boom in Numbers

Adoption of AI coding assistants is unlike anything the industry has seen. According to Stack Overflow's 2024 developer survey, more than three quarters of professional developers are already using these tools or actively planning to. GitHub has reported that Copilot users complete common programming tasks up to fifty-five percent faster in controlled studies. Inside many engineering organizations, thirty to fifty percent of new code now originates as an AI suggestion that a developer accepted with only light modification.

Those numbers are easy to get excited about. A team that ships features faster is a team that wins markets. Executives read the productivity headlines and assume the economics are settled.

The problem is that productivity is only one side of the ledger. Nobody puts the security numbers on the same slide.

What the Research Actually Shows

The first serious academic study on AI-generated code security came out of NYU in 2021. Researchers at the Center for Cybersecurity fed GitHub Copilot eighty-nine scenarios drawn from the MITRE CWE catalog, the industry's standard list of software weakness types. Roughly forty percent of the programs Copilot produced contained security vulnerabilities. Not subtle edge cases either. Classic issues like SQL injection, buffer overflows, and missing input validation.

You could argue the models have improved since then, and they have. But the pattern has held. Follow-up research from Snyk and other security vendors keeps telling the same story. A majority of developers report that their AI tools introduce vulnerabilities into their code, yet most of their teams still have no formal review process specific to AI-generated output. The models got smarter. The guardrails did not keep up.

The most unsettling result came from Stanford in 2023. In a controlled study titled "Do Users Write More Insecure Code with AI Assistants?", researchers asked developers to complete security-sensitive programming tasks with and without an AI assistant. The results were not close. Participants with access to the AI assistant wrote measurably less secure solutions. Worse, those same participants believed they had written more secure code than the control group. The assistant made them wrong and confident at the same time.

A separate report from GitClear analyzed four years of Git history across millions of changed lines, covering the period before and after Copilot's release. Code churn was on track to roughly double against its pre-AI baseline. Duplicate code increased sharply. The ratio of refactored to newly added code dropped. The industry was generating more code, and that code was holding together less well.

Why AI Writes Insecure Code

This is not a story about one tool being bad. The reasons AI assistants generate insecure code are baked into how they work.

Large language models are trained on enormous bodies of public code. That code includes Stack Overflow answers written by beginners, tutorials that prioritize clarity over safety, legacy projects from before modern security practices existed, and countless examples where the author showed the simple version on purpose, without the validation, the authorization check, or the parameterized query. The model learned from all of it. When you ask it for a login function, the statistically most likely token sequence often resembles the dozens of tutorial examples that happened to be insecure.

There is a deeper issue underneath the training data. Language models pattern-match. They do not reason about trust boundaries. When a careful engineer writes a new API endpoint, they mentally ask who is allowed to call this, what happens if the input is hostile, and what this function leaks if it fails. An AI assistant has no persistent model of your application. It does not know which routes require authentication, which fields are sensitive, or which parts of the codebase already assume a certain invariant. It produces code that looks correct in isolation because its context is limited to the prompt and whatever surrounding code the tool happens to send along with it.

The prompt itself deserves part of the blame. Most developers never ask for secure code. They ask for "a function that handles file uploads" or "an endpoint that returns a user by id". The model delivers exactly that, with none of the security posture a senior engineer would add by reflex. The tool is not withholding safety. Nobody asked it for any.

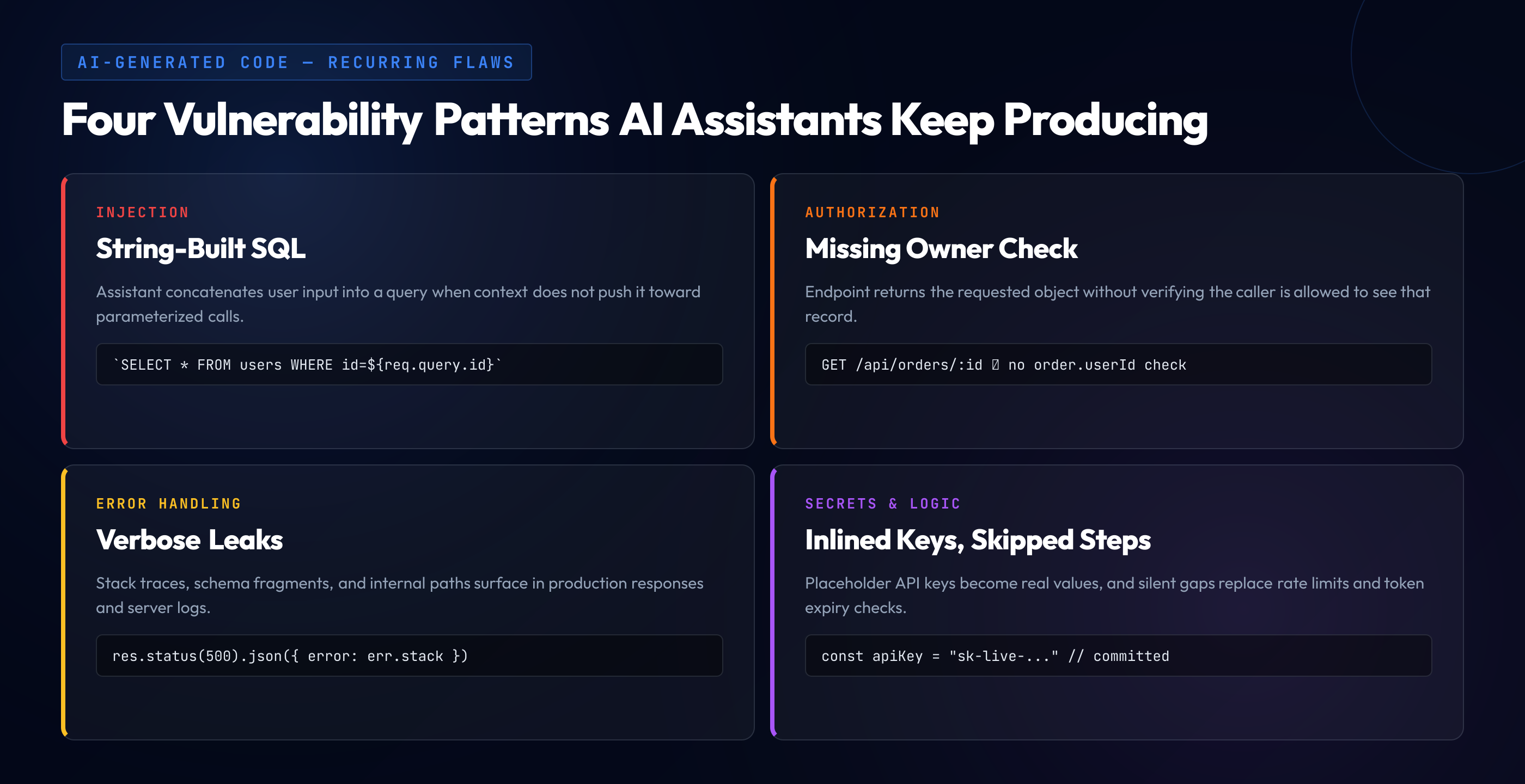

The Vulnerability Patterns That Keep Showing Up

After reviewing AI-generated code across enough teams, the same categories of issues appear again and again.

SQL injection through string interpolation is still the most common. Despite two decades of warnings and the ubiquity of parameterized queries, AI assistants will happily concatenate user input into a SQL string if the surrounding code does not strongly suggest otherwise. It is the simplest pattern in the training data.
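The difference is easier to see side by side. What follows is a minimal sketch in plain JavaScript with no database attached. The `{ text, values }` shape and the `$1` placeholder follow the convention used by PostgreSQL drivers such as node-postgres; the function names and the `users` table are hypothetical.

```javascript
// Hypothetical helpers for illustration; no database is contacted.

// Vulnerable: user input becomes part of the SQL text itself.
function buildUnsafeQuery(email) {
  return `SELECT id, email FROM users WHERE email = '${email}'`;
}

// Safer: the SQL text is constant and the input travels separately as a
// bound value, so the database never parses it as SQL.
function buildParameterizedQuery(email) {
  return {
    text: 'SELECT id, email FROM users WHERE email = $1',
    values: [email],
  };
}

const hostile = "x' OR '1'='1";

console.log(buildUnsafeQuery(hostile));
// The WHERE clause now reads: email = 'x' OR '1'='1'

console.log(buildParameterizedQuery(hostile).text);
// The SQL text is the same no matter what the input contains.
```

With node-postgres, an object of this shape can be passed to `client.query`. Other drivers use different placeholder syntax, but the separation of query text from values is the same idea.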

Missing authorization checks come second. An assistant asked to add an endpoint that returns order details will return the order details. It will not ask whether the requesting user is allowed to see that particular order. The resulting code is a textbook insecure direct object reference (IDOR) vulnerability, and it will pass every functional test the developer writes.

Verbose error handling is a quieter problem. AI-generated code tends to log everything and return detailed errors to the caller, which is helpful in development and catastrophic in production. Stack traces end up in API responses. Database schemas leak through exception messages. Internal file paths show up in 500 errors.
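One way to keep the detail without leaking it is to split each error in two: a full record for the server logs and a generic body for the caller, tied together by a reference id. The sketch below assumes that approach; `toClientError` and the response shape are hypothetical names, not part of any framework.

```javascript
const { randomUUID } = require('node:crypto');

// Hypothetical helper: turn one error into a detailed server-side log
// entry and a generic client-facing body linked by a reference id.
function toClientError(err, log = console.error) {
  const errorId = randomUUID();

  // Full detail stays in the server logs.
  log({ errorId, message: err.message, stack: err.stack });

  // The caller sees no stack trace, schema detail, or file path.
  return {
    status: 500,
    body: { error: 'Internal server error', errorId },
  };
}

// Example: a database error whose message would leak a table name and a path.
const dbError = new Error('relation "users_v2" does not exist at /srv/app/db.js');
const response = toClientError(dbError);
console.log(response.body);
```

In an Express application, a helper like this could be called from an error-handling middleware, the four-argument `(err, req, res, next)` form, so that individual routes never format errors themselves.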

Hard-coded secrets appear with surprising frequency. The model saw thousands of examples where the author put "your-api-key-here" inline because that is how tutorials work. When a developer pastes the real value over the placeholder and forgets to move it to a config file, the secret ends up in the repository. Git history does not forget.
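A small guard makes the safer habit the easy one. This sketch assumes secrets are supplied through environment variables; `requireEnv` and the variable name `PAYMENTS_API_KEY` are hypothetical.

```javascript
// Hypothetical helper: read a secret from the environment and fail fast
// if it is missing, instead of falling back to an inline value.
function requireEnv(name, env = process.env) {
  const value = env[name];
  if (value === undefined || value === '') {
    throw new Error(`Missing required environment variable: ${name}`);
  }
  return value;
}

// Risky pattern copied from tutorials:
//   const apiKey = 'your-api-key-here';

// Sketch of the alternative. The second argument stands in for
// process.env so the example runs without any setup.
const apiKey = requireEnv('PAYMENTS_API_KEY', { PAYMENTS_API_KEY: 'example-value' });
console.log(apiKey.length > 0);
```

Failing at startup when a secret is missing removes the temptation to paste a real value over a placeholder just to get the code running.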

And then there is the category that is hardest to catch in review. Logic flaws. An AI might correctly implement the happy path of a password reset flow and quietly skip the rate limiting, the token expiration check, and the email verification step. Everything compiles. Every test passes. The flow works in the browser. An attacker still takes over the account in ninety seconds.
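For contrast, here is a rough sketch of the checks a generated happy path tends to leave out: a per-account request limit, token expiry, and single-use tokens that are only ever delivered by email. It is an in-memory illustration under assumed limits, not a production design, and every name in it is hypothetical.

```javascript
const { randomBytes, createHash } = require('node:crypto');

// In-memory sketch; a real service would use a database and a shared
// rate limiter. All names and limits here are assumptions.
const TOKEN_TTL_MS = 15 * 60 * 1000; // tokens expire after 15 minutes
const MAX_REQUESTS = 3;              // reset requests allowed per window
const WINDOW_MS = 60 * 60 * 1000;    // rate-limit window of one hour

const tokens = new Map();   // sha256(token) -> { email, expiresAt }
const requests = new Map(); // email -> timestamps of recent requests

const sha256 = (value) => createHash('sha256').update(value).digest('hex');

// Step 1: issue a token, but only if the account is under its request limit.
function requestReset(email, now = Date.now()) {
  const recent = (requests.get(email) || []).filter((t) => now - t < WINDOW_MS);
  if (recent.length >= MAX_REQUESTS) return null; // rate limited
  requests.set(email, [...recent, now]);

  const token = randomBytes(32).toString('hex');
  // Store only a hash so a leaked table does not expose usable tokens.
  tokens.set(sha256(token), { email, expiresAt: now + TOKEN_TTL_MS });
  return token; // would be emailed to the address on file, not sent in the HTTP response
}

// Step 2: redeem a token. It must exist, be unexpired, and is single use.
function redeemReset(token, now = Date.now()) {
  const key = sha256(token);
  const entry = tokens.get(key);
  if (!entry) return null;
  tokens.delete(key);                     // single use, even if expired
  if (now > entry.expiresAt) return null; // expired
  return entry.email;                     // caller may now set a new password
}

const token = requestReset('user@example.com');
console.log(redeemReset(token)); // the first redemption returns the email
console.log(redeemReset(token)); // a replay returns null
```

None of these checks changes what a functional test of the happy path observes, which is why their absence is so easy to miss in review.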

Here is what "plausible but broken" looks like in practice:

    // Prompt: "create an express endpoint that returns order details by id"
    // No authentication and no ownership check: any caller can read any order.
    app.get('/api/orders/:id', async (req, res) => {
      const order = await db.orders.findById(req.params.id)
      if (!order) {
        return res.status(404).json({ error: 'Order not found' })
      }
      res.json(order)
    })

    // What the prompt should have produced:
    // requireAuth is assumed to reject anonymous callers and populate req.user.
    app.get('/api/orders/:id', requireAuth, async (req, res) => {
      const order = await db.orders.findById(req.params.id)
      if (!order) return res.status(404).json({ error: 'Not found' })
      // Ownership check: the order must belong to the requesting user.
      if (order.userId !== req.user.id) {
        return res.status(403).json({ error: 'Forbidden' })
      }
      res.json(order)
    })

The first version runs. The tests the developer wrote will pass. The endpoint does exactly what the prompt described. Anyone who can reach the endpoint, logged in or not, can now fetch any user's orders by incrementing an id in the URL. The AI did not fail. The prompt did.

The False Confidence Trap

The Stanford finding deserves its own spotlight. Developers using AI assistants rated the security of their own code higher than developers working without one, even though the AI-assisted code was measurably worse.

This is not a small psychological quirk. It explains why AI-generated code keeps slipping past review. When a tool makes a developer feel competent, their instinct to double-check weakens. When every pull request a team reviews came out of the same assistant, the review itself starts to feel ceremonial. Reviewers glance at diffs that look familiar, recognize the shape, and approve. Nobody wants to be the person who slows down a fifty-five percent productivity gain.

"Confidence without competence is how incidents happen. The attacker doesn't care how certain the developer was that the code was safe."

Why Secure Coding Skills Matter More, Not Less

The common reaction to all of this is to frame AI as a threat to developer jobs or to secure coding education. Both framings miss what is actually happening.

The developer's job is shifting. Less time spent writing code from scratch, more time spent reading, evaluating, and deciding whether to accept code a machine produced. In every other creative discipline we already know what this shift demands. A copy editor needs to understand grammar more deeply than a writer. A film editor sees structural problems a director working inside the scene cannot. The reviewer's job is harder than the producer's, because the reviewer has to recognize what is wrong without the context of having made it.

Spotting a subtle authorization flaw in fifty lines of AI-generated code requires knowing exactly what a correct authorization flow looks like. Recognizing that a password reset endpoint is missing rate limiting requires understanding why rate limiting matters on password resets specifically. Catching a silent server-side request forgery (SSRF) because the AI confidently used a URL from user input requires having learned what SSRF is in the first place. These are skills, and they take deliberate practice to build.

There is a second reason the skill gap is widening rather than closing. You cannot prompt for what you do not know to ask for. A developer who has never internalized the concept of server-side request forgery will never think to add "and make sure this cannot be abused to fetch internal metadata endpoints" to their prompt. The prompt is the new attack surface, and it is only as secure as the person writing it. When that surface extends to connected tools via the Model Context Protocol, the threat model expands further, a topic covered in depth in our MCP security guide.
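To make the request forgery example concrete, here is a minimal sketch of the check a reviewer would expect before code fetches a user-supplied URL. It assumes an allowlist approach; the host names are placeholders and `parseAllowedUrl` is a hypothetical helper.

```javascript
// Hypothetical allowlist; the host names are placeholders.
const ALLOWED_HOSTS = new Set(['images.example.com', 'cdn.example.com']);

// Returns a parsed URL if the target is acceptable, otherwise null.
function parseAllowedUrl(input) {
  let url;
  try {
    url = new URL(input);
  } catch {
    return null; // not a valid absolute URL
  }
  if (url.protocol !== 'https:') return null;        // no http:, file:, and so on
  if (url.username || url.password) return null;     // no embedded credentials
  if (!ALLOWED_HOSTS.has(url.hostname)) return null; // exact host match only
  return url;
}

console.log(parseAllowedUrl('https://images.example.com/a.png') !== null);
console.log(parseAllowedUrl('http://169.254.169.254/latest/meta-data/') !== null);
```

An allowlist is only one layer. A client that follows redirects can still be steered to another host, so each redirect target would need the same check.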

What Teams Should Actually Do

Banning AI tools is not the answer. The productivity gains are real, your competitors are not going to stop using them, and your own developers will find ways around the policy. The answer is to adjust the process around the tools so their weaknesses stop shipping to production.

- Treat AI output like untrusted input. A suggestion from Copilot or Claude deserves the same scrutiny as a pull request from an unknown contributor. You would not merge that PR without reading every line. Do not merge the AI's either.

- Train developers on the specific failure modes these tools produce. Generic secure coding training does not prepare a reviewer for the particular patterns that assistants generate most often. Your team needs hands-on practice catching AI-generated authorization gaps, AI-generated SQL interpolation, and AI-generated logic flaws, because those are the bugs they will actually see in pull requests this week.

- Strengthen code review instead of loosening it. The temptation with AI tools is to review faster because the code volume grew. That is backwards. More volume at lower quality per line means reviews should get slower and more targeted, not quicker. Use static application security testing (SAST) as a first gate, then insist on human review for anything touching authentication, authorization, data access, or external input.

- Build an internal security prompt library. Give your developers copy-paste prompts that explicitly ask the model for security-conscious code. "Write a function that does X, with parameterized queries, explicit authorization checks, input validation, and safe error handling" produces noticeably different output than "write a function that does X". A shared library of these prompts turns tribal knowledge into a standard.

- Route AI-heavy pull requests through a security champion. If a PR contains large blocks of obviously generated code, it deserves a second look from someone who specializes in security review. That is not bureaucracy. That is how you stop productivity gains from turning into incident reports.
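The routing rule in the list above can be partly automated. The following is a rough sketch of a check that flags changed files for human security review based on their paths. The patterns are hypothetical and would need to match how a given repository is actually laid out.

```javascript
// Hypothetical path patterns for code that touches authentication,
// authorization, data access, or external input. Adjust to the repository.
const SENSITIVE_PATTERNS = [
  /(^|\/)auth\//,
  /(^|\/)middleware\//,
  /(^|\/)db\//,
  /(^|\/)routes\//,
  /\.sql$/,
];

// Returns the changed files that should get a human security review.
function filesNeedingSecurityReview(changedPaths) {
  return changedPaths.filter((path) =>
    SENSITIVE_PATTERNS.some((pattern) => pattern.test(path))
  );
}

const changed = ['src/routes/orders.js', 'README.md', 'src/db/orders.js'];
console.log(filesNeedingSecurityReview(changed));
```

A path-based check is deliberately crude. It cannot judge the code itself, but it gives a security champion a consistent queue to work from.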

The Real Bet

The organizations that come out of the AI coding era in good shape are not the ones that let the tools write everything. They are the ones whose developers still understand security well enough to catch what the tools miss.

AI is a multiplier. Whether it multiplies your shipping velocity or your vulnerability count depends on the human reviewing the diff, and that human needs to be sharper than ever. Treating these tools as a reason to invest less in secure coding education is the exact wrong lesson to draw from the last three years of research. The evidence points the other way. As code generation gets cheaper, the ability to tell good code from bad becomes the scarcest skill on the team. Invest accordingly.