Developers are trained to build things that work. They write code that handles the expected inputs, follows the happy path, and satisfies acceptance criteria. But attackers don't follow the happy path. They look for the seams, the assumptions, the places where "this should never happen" meets reality. Teaching developers to think offensively — to understand how their own code can be exploited — is the most effective way to produce genuinely secure software.

The Defender's Disadvantage

There is a fundamental asymmetry in application security. Defenders must protect every input, every endpoint, every state transition, every trust boundary in the entire application. An attacker only needs to find one weakness. One overlooked query parameter. One missing authorization check. One deserialization endpoint that trusts user-supplied data. As AI assistants add new attack surfaces, the asymmetry widens; our MCP (Model Context Protocol) security guide walks through the model-tool threat model in detail.

Developers who only think defensively tend to protect against the attacks they already know about. They add input validation where the framework tells them to. They follow the checklist. But checklists are backward-looking — they codify yesterday's vulnerabilities, not tomorrow's. A developer who has never thought about how an attacker chains together small weaknesses into a full compromise will inevitably leave gaps that no checklist can cover.

What "Think Like an Attacker" Actually Means

Let's be clear about what this phrase does not mean. It doesn't mean turning developers into penetration testers or asking them to run exploit frameworks against production systems. It means developing a specific analytical habit: the ability to look at code and ask "how could this be abused?"

In practice, thinking like an attacker means understanding four things:

- Trust boundaries and where they break down — Every application has points where trusted data meets untrusted data: the boundary between client and server, between your service and a third-party API, between the database and queries built from user input. Attackers look for places where developers assumed the data on one side of the boundary was safe.

- How user input flows through the system — A form field doesn't just go into a database. It passes through routers, middleware, validation layers, business logic, template engines, and response serializers. An attacker traces that full path, looking for any point where the input is interpreted rather than treated as data.

- What assumptions the code makes — Every line of code embeds assumptions. "This ID will always be numeric." "This header will always come from our load balancer." "This file path will always be within the uploads directory." Attackers systematically identify and violate these assumptions.

- Where the happy path diverges from the attack path — The happy path is the flow your tests cover. The attack path is what happens when you send a negative quantity, a null byte in a filename, a JSON payload where the server expects XML, or a request that arrives after the session expired but before the token was invalidated.
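The assumption "this file path will always be within the uploads directory" is easy to state and easy to violate with `../` or an absolute path. A minimal Node.js sketch of checking it explicitly instead of trusting it follows; `UPLOADS_DIR` and `resolveUpload` are hypothetical names chosen for illustration.

```javascript
const path = require('path');

// Hypothetical uploads root; a real application would read this from configuration.
const UPLOADS_DIR = path.resolve('/srv/app/uploads');

// Resolve a user-supplied filename against UPLOADS_DIR and return the absolute path,
// or null if the result would land outside that directory or the input is invalid.
function resolveUpload(userFilename) {
  // Reject non-strings and embedded null bytes before touching any path APIs.
  if (typeof userFilename !== 'string' || userFilename.includes('\0')) {
    return null;
  }
  // path.resolve collapses '..' segments, and an absolute input replaces the root entirely.
  const candidate = path.resolve(UPLOADS_DIR, userFilename);
  // A relative path that climbs out ('..') or is absolute (another drive on Windows) means escape.
  const rel = path.relative(UPLOADS_DIR, candidate);
  if (rel === '' || rel === '..' || rel.startsWith('..' + path.sep) || path.isAbsolute(rel)) {
    return null; // '' would be the uploads directory itself, not a file inside it
  }
  return candidate;
}

console.log(resolveUpload('photos/cat.png'));
console.log(resolveUpload('../../etc/passwd')); // null
```

The check is purely lexical. If the uploads directory can contain symlinks, the resolved path would also need to go through `fs.realpathSync` and be checked again.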

The Attack-First Learning Model

Traditional security training presents vulnerabilities as abstract concepts. "SQL injection is when an attacker inserts malicious SQL into a query." Developers nod, check the box, and go back to writing the same code they wrote before. The information doesn't stick because it was never felt.

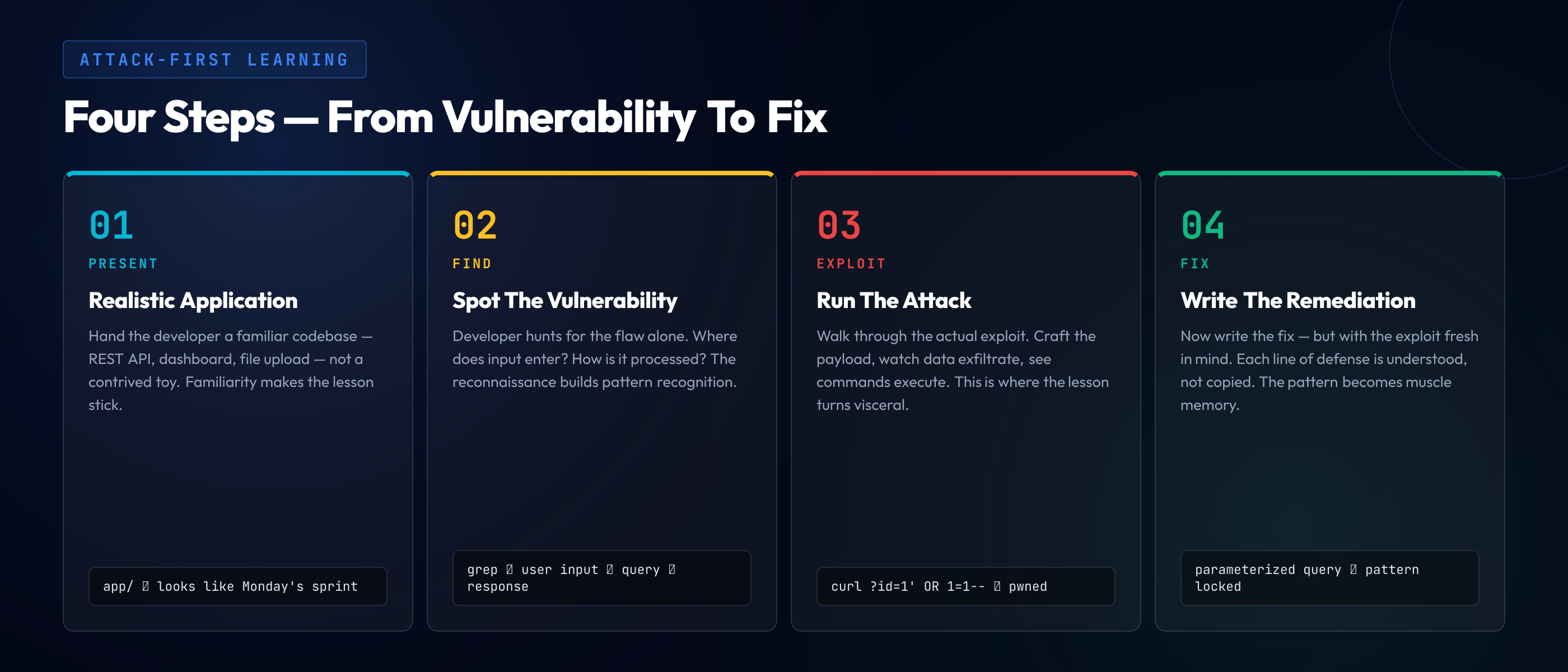

The attack-first learning model flips the sequence. Instead of explaining the vulnerability first and showing the fix second, it puts the developer in the attacker's seat:

Step 1: Present a realistic application

Show developers a functioning application — not a contrived toy example, but something that looks like the code they write every day. A REST API with authentication, a dashboard with search functionality, a file upload endpoint. The more familiar it looks, the more impactful the lesson.

Step 2: Let them find the vulnerability

Give developers time to examine the code and identify the security flaw. This reconnaissance phase builds the most critical skill: pattern recognition. Where is user input entering the system? How is it being processed? What's missing? The act of searching for the vulnerability is more educational than being told where it is.

Step 3: Guide them through exploitation

Once the vulnerability is identified, walk through what an attacker would actually do with it. Craft the malicious payload. See the data that gets exfiltrated. Watch the server execute unintended commands. This step creates emotional impact — the moment a developer sees their own pattern of code being exploited is the moment the lesson becomes permanent.

Step 4: Have them write the fix

Now the developer writes the remediation. But because they've just experienced the exploitation, they understand why each element of the fix matters. They're not just adding a parameterized query because the linter told them to — they're adding it because they watched raw SQL get injected through that exact input field.
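The fix in that exercise comes down to one distinction: whether the input is spliced into the SQL text or sent separately as a bound parameter. The sketch below only builds the queries, so the difference is visible without a database. It assumes a PostgreSQL-style `$1` placeholder and the `{ text, values }` query-config shape that the node-postgres (`pg`) client accepts; the table and function names are hypothetical.

```javascript
// Vulnerable: the input becomes part of the SQL text, so a quote in it changes the query's structure.
function buildLoginQueryUnsafe(username) {
  return `SELECT id FROM users WHERE username = '${username}'`;
}

// Parameterized: the SQL text never changes; the input travels separately as a value.
// The { text, values } object is the form node-postgres' client.query() accepts.
function buildLoginQuerySafe(username) {
  return { text: 'SELECT id FROM users WHERE username = $1', values: [username] };
}

const payload = "' OR '1'='1";
console.log(buildLoginQueryUnsafe(payload));
// SELECT id FROM users WHERE username = '' OR '1'='1'
console.log(buildLoginQuerySafe(payload));
```

In the unsafe version the payload turns the `WHERE` clause into a condition that is true for every row. In the safe version the same payload is just an odd-looking username that matches nothing.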

"Tell me and I forget. Show me and I remember. Involve me and I understand." The attack-first model involves the developer at every step — and the understanding it creates outlasts any slide deck or compliance video.

From Theory to Muscle Memory

Understanding a vulnerability once is not enough. Security awareness decays quickly if it isn't reinforced. The goal is to move pattern recognition from conscious analysis to instinct — from "let me check the OWASP list" to "that input isn't sanitized" at first glance.

This requires repetition across variations. After exploiting five different SQL injection variants — string-based, numeric, blind boolean, time-based, second-order — a developer doesn't need a checklist to spot the pattern. They see unsanitized input flowing into a query and the alarm fires automatically. The same principle applies to XSS, path traversal, SSRF, and every other vulnerability class.
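Working through variants is also what exposes half-fixes. Escaping quotes blunts the string-based form, for example, but does nothing for the numeric form, because the value is never inside quotes. A minimal sketch; `escapeQuotes` is a deliberately naive, hypothetical helper, and `parseOrderId` is an illustrative extra guard, not a replacement for parameterized queries.

```javascript
// Deliberately naive defense: double up single quotes (hypothetical helper, not a recommendation).
function escapeQuotes(input) {
  return String(input).replace(/'/g, "''");
}

// Numeric context: the value is not quoted, so escaping quotes changes nothing.
function buildOrderQueryEscaped(id) {
  return `SELECT * FROM orders WHERE id = ${escapeQuotes(id)}`;
}

// Stricter guard: accept only a plain positive integer (no sign, no leading zeros, at most 10 digits).
function parseOrderId(raw) {
  if (typeof raw !== 'string' || !/^[1-9][0-9]{0,9}$/.test(raw)) return null;
  return Number(raw);
}

console.log(buildOrderQueryEscaped('42 OR 1=1'));
// SELECT * FROM orders WHERE id = 42 OR 1=1
console.log(parseOrderId('42 OR 1=1')); // null
```

Strict parsing narrows what can reach the query, but the value should still be bound as a parameter rather than interpolated.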

Consider this server-side request forgery (SSRF) vulnerability in a URL preview feature:

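```javascript
// Vulnerable: user-supplied URL fetched without validation (Express route handler)
app.get('/api/preview', async (req, res) => {
  const url = req.query.url;
  const response = await fetch(url); // the server requests whatever the client names
  const html = await response.text();
  res.json({ preview: html });
});

// Attacker sends: /api/preview?url=http://169.254.169.254/latest/meta-data/
// Result: cloud instance metadata, which can include credentials, is leaked
```

```javascript
// Safer: parse the URL, check it against an allowlist, block internal IP literals,
// and refuse redirects. isAllowedUrl and isInternalIP are helpers assumed to exist.
app.get('/api/preview', async (req, res) => {
  const url = req.query.url;
  if (typeof url !== 'string') return res.status(400).json({ error: 'Missing url' });

  let parsed;
  try {
    parsed = new URL(url); // throws on malformed input
  } catch {
    return res.status(400).json({ error: 'Invalid url' });
  }

  if (!isAllowedUrl(url)) return res.status(403).json({ error: 'Blocked' });
  if (isInternalIP(parsed.hostname)) return res.status(403).json({ error: 'Blocked' });

  try {
    // redirect: 'error' stops an allowed host from bouncing the request to an internal address
    const response = await fetch(parsed.href, { redirect: 'error' });
    const html = await response.text();
    res.json({ preview: html });
  } catch {
    res.status(502).json({ error: 'Upstream fetch failed' });
  }
});
```

A developer who has exploited SSRF, who has personally fetched cloud metadata through a vulnerable endpoint, will never look at an unvalidated fetch(url) call the same way again. The pattern becomes instinctive: user-controlled URL plus server-side fetch equals SSRF risk.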
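The hardened handler depends on `isAllowedUrl` and `isInternalIP`, which the example leaves undefined. Below is one hypothetical way to write them in Node.js. The allowlist contents are placeholders, and the IP check inspects only literal addresses, relying on the WHATWG `URL` parser having already normalized forms like `http://2130706433/` to `127.0.0.1`.

```javascript
const net = require('net');

// Hypothetical allowlist; a real deployment would load this from configuration.
const ALLOWED_HOSTS = new Set(['example.com', 'www.example.com']);

// Accept only https URLs whose hostname is explicitly allowlisted.
function isAllowedUrl(raw) {
  let parsed;
  try {
    parsed = new URL(raw);
  } catch {
    return false;
  }
  return parsed.protocol === 'https:' && ALLOWED_HOSTS.has(parsed.hostname);
}

// Literal-IP check only: hostnames are NOT resolved here.
function isInternalIP(hostname) {
  const host = hostname.replace(/^\[|\]$/g, ''); // URL.hostname keeps brackets around IPv6
  const version = net.isIP(host); // 0 = not an IP, 4 = IPv4, 6 = IPv6
  if (version === 4) {
    const [a, b] = host.split('.').map(Number);
    return (
      a === 0 ||                           // "this" network
      a === 10 ||                          // private
      a === 127 ||                         // loopback
      (a === 169 && b === 254) ||          // link-local, includes cloud metadata endpoints
      (a === 172 && b >= 16 && b <= 31) || // private
      (a === 192 && b === 168) ||          // private
      (a === 100 && b >= 64 && b <= 127)   // carrier-grade NAT
    );
  }
  if (version === 6) {
    const h = host.toLowerCase();
    return (
      h === '::1' || h === '::' ||
      /^fe[89ab][0-9a-f]:/.test(h) ||      // link-local fe80::/10
      /^f[cd][0-9a-f]{2}:/.test(h) ||      // unique local fc00::/7
      h.startsWith('::ffff:')              // IPv4-mapped: treated as internal to stay conservative
    );
  }
  return false; // a DNS name, not an IP literal
}

console.log(isInternalIP(new URL('http://169.254.169.254/latest/meta-data/').hostname)); // true
console.log(isAllowedUrl('http://example.com/')); // false
```

A literal-IP check is not sufficient on its own. A hostname that resolves to an internal address, or one whose DNS answer changes between the check and the fetch (DNS rebinding), slips past it. Closing that gap means resolving the name yourself, checking every returned address, and connecting to the address you checked.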

Guided Scenarios vs. CTF Challenges

Capture-the-flag competitions have long been the go-to format for security education, and for good reason. They're engaging, competitive, and satisfying. For a ground-up walkthrough of event formats, categories, beginner platforms, and how to run an internal CTF as part of a broader training program, see our CTF guide for developers. But CTFs have limitations as a training tool for working developers.

CTF challenges are often deliberately obscure. They reward creative lateral thinking and deep technical knowledge, which makes them excellent for training security specialists. But for a backend developer who needs to write secure API endpoints, a CTF challenge involving steganography or binary exploitation isn't directly applicable to their daily work.

Guided scenarios take a different approach. They provide context — a realistic application, a believable threat model, a narrative that mirrors real-world incidents. They offer progressive difficulty, starting with obvious vulnerabilities and advancing to subtle, multi-step exploitation chains. And they provide immediate, structured feedback that connects the vulnerability to the specific code pattern that caused it.

- CTFs excel at — building deep security expertise, fostering community, identifying security talent, and making learning fun through competition.

- Guided scenarios excel at — teaching specific vulnerability patterns relevant to a developer's tech stack, building consistent baseline knowledge across a team, and providing measurable skill progression.

- The best programs use both — guided scenarios for structured onboarding and baseline skills, CTFs for engagement and advanced challenges. They serve different purposes and complement each other.

Building the Attacker Mindset Into Daily Work

Training sessions end, but the attacker mindset should persist. The real measure of success isn't how well a developer performs in a training exercise — it's whether their daily habits change. There are concrete ways to embed offensive thinking into everyday development workflows.

Code review with attacker eyes. Before approving a pull request, spend two minutes asking: "If I were an attacker, what would I do with this endpoint? What happens if this input is malicious? What assumption is this code making that an attacker would violate?" This habit catches flaws that automated scanners routinely miss, such as missing authorization checks and business-logic abuse.

Threat modeling as a team ritual. When designing a new feature, dedicate fifteen minutes to mapping out attack surfaces. What new inputs are we accepting? What new data are we exposing? What happens if this third-party integration is compromised? These conversations surface risks before code is ever written.

"What would an attacker do?" as a standard question. Make this part of your team's vocabulary. In design reviews, sprint planning, and incident retrospectives, ask the question explicitly. Over time, it becomes automatic — and that's when the attacker mindset has truly taken hold.

The most secure organizations aren't the ones with the largest security teams. They're the ones where every developer carries a small piece of the attacker mindset with them — where the question "how could this be abused?" is as natural as "does this compile?" Security stops being someone else's job and becomes a shared instinct, woven into every line of code and every review comment.