Every year, millions of developers sit through mandatory security training. They watch videos, click through slides, pass a quiz, and promptly forget everything. Meanwhile, the same vulnerability classes keep appearing in production code. The problem isn't that developers don't care about security — it's that the way we train them is fundamentally broken.

Organizations spend billions of dollars annually on security awareness and training programs. Yet vulnerability counts continue to climb, and the categories that dominated the OWASP Top 10 a decade ago (injection, broken access control, cross-site scripting) are still on the list today, with cross-site scripting folded into the injection category since the 2021 edition. If training worked, we'd expect to see those numbers decline. The fact that they haven't should tell us something.

The Compliance Trap

Most security training exists because a regulation or compliance framework requires it. PCI DSS (whose new 4.0.1 Requirement 6.2.2 sets a specific bar for secure coding training), SOC 2, HIPAA, and ISO 27001 all call for some form of security awareness training. So organizations check the box. They purchase a platform, assign an annual course, track completion rates, and present the numbers during audit season.

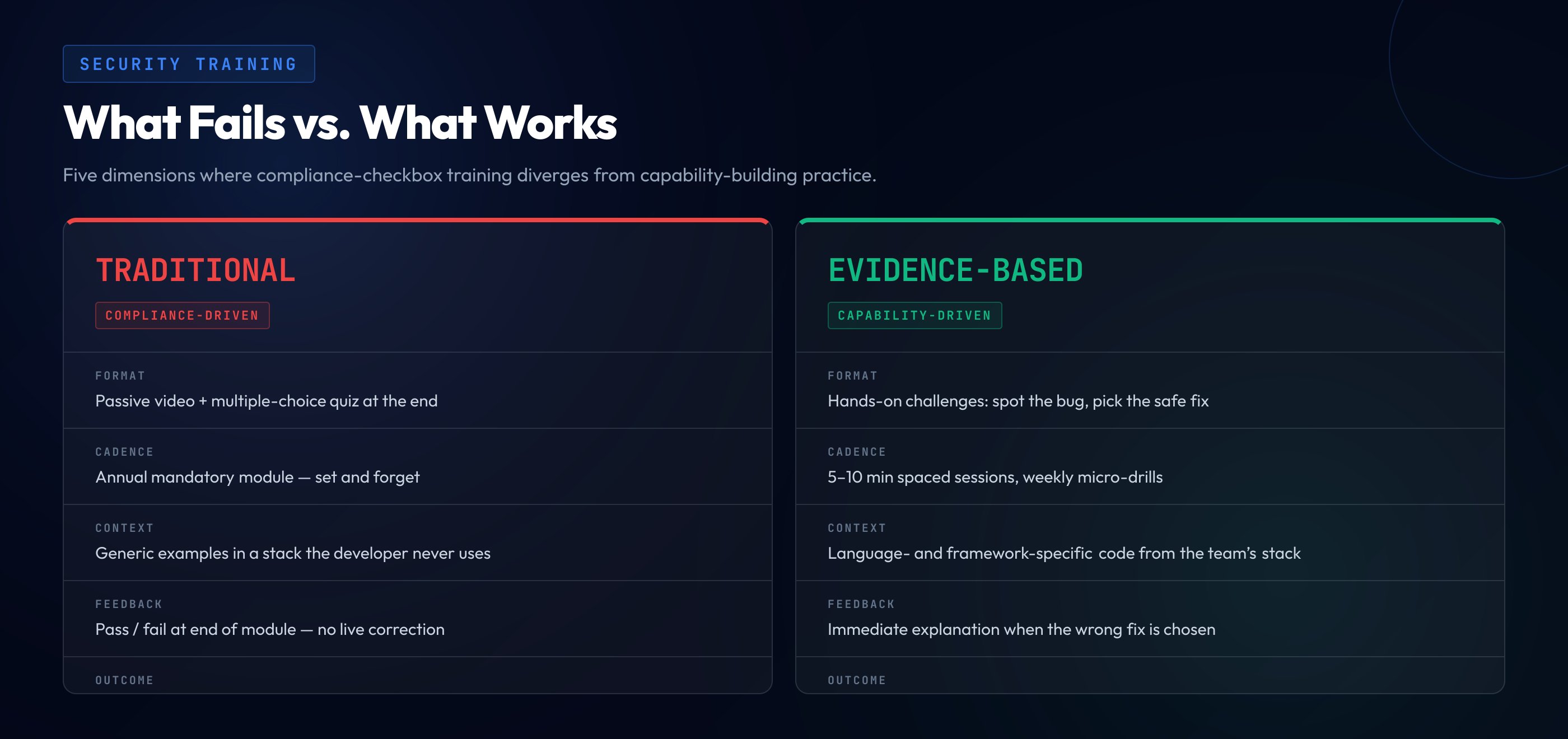

The problem is that compliance and competence are not the same thing. A 100% completion rate on a training module tells you that every developer clicked through to the end. It tells you nothing about whether they can identify a Server-Side Request Forgery vulnerability in a pull request, or whether they understand why parameterized queries prevent SQL injection.
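The parameterized-query point can be made concrete with a small sketch. The two helper functions below are hypothetical and no real database driver is involved; the `$1` placeholder follows the style used by PostgreSQL drivers, and other drivers use `?` instead.

```javascript
// Hypothetical helpers for illustration only; no real database driver is used.

// Unsafe: user input is spliced into the SQL text, so it can change the query's structure.
function buildUnsafeQuery(email) {
  return { text: `SELECT id FROM users WHERE email = '${email}'`, values: [] };
}

// Parameterized: the SQL text is fixed and the input travels separately as data.
function buildParameterizedQuery(email) {
  return { text: 'SELECT id FROM users WHERE email = $1', values: [email] };
}

const attackerInput = "x' OR '1'='1";

// The WHERE clause now contains an extra OR condition supplied by the attacker.
console.log(buildUnsafeQuery(attackerInput).text);

// The SQL text is unchanged; the input is only ever a value bound to $1.
console.log(buildParameterizedQuery(attackerInput));
```

A developer who can explain why the second form keeps the attacker's quote characters out of the SQL grammar has the kind of understanding a completion rate cannot measure.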

When training is driven by compliance rather than capability, it optimizes for the wrong goal. The content becomes generic enough to satisfy auditors rather than specific enough to change behavior. The result is a program that satisfies regulators but fails developers.

Why Passive Learning Doesn't Work

Cognitive science has known for decades that passive consumption is among the least effective forms of learning. Hermann Ebbinghaus's research on the "forgetting curve" is commonly summarized as showing that people forget roughly 70% of new information within 24 hours and up to 80% within a week, unless they actively reinforce it through practice.

Traditional security training is almost entirely passive. Developers watch a video explaining cross-site scripting. They see a diagram of how an attacker injects a script tag. They might answer a multiple-choice question. Then they go back to writing code, and within days, the details have faded.

"Tell me and I forget. Teach me and I remember. Involve me and I learn." This principle, often attributed to Benjamin Franklin, is backed by modern learning science. Active recall and hands-on practice produce retention rates 3-5x higher than passive instruction.

The problem isn't just forgetting — it's that passive learning never creates the neural pathways needed for pattern recognition. A developer who has only read about XSS cannot reliably spot XSS in real code. Recognition requires practice, repetition, and feedback.

The Context Problem

Even when training content is technically accurate, it often fails because it doesn't match the developer's actual working environment. A Java backend developer watching a PHP demonstration of SQL injection isn't learning how to prevent SQL injection in their stack — they're learning how SQL injection works in a stack they'll never use.

This context mismatch creates a translation burden. The developer has to mentally convert the example from the training language to their working language, from the training framework to their framework, from the training architecture to their architecture. Most don't bother. They tune out, complete the module, and move on.

Effective training meets developers where they are:

- Language-specific examples — A Python developer should see vulnerabilities in Django or Flask, not in Express.js or Spring Boot.

- Framework-aware scenarios — Understanding how your ORM handles queries matters more than generic SQL syntax demonstrations.

- Role-relevant content — A frontend developer needs to understand DOM-based XSS and client-side storage risks, not server-side deserialization attacks.

- Realistic code patterns — Training examples should look like production code, not textbook snippets from 2010.
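As a rough sketch of what meeting developers where they are could look like in a training platform, the snippet below filters a challenge catalog by a developer's language, frameworks, and role. The catalog, its field names, and the `challengesFor` function are all assumptions made for illustration.

```javascript
// Hypothetical catalog; the ids, fields, and tags are illustrative assumptions.
const challenges = [
  { id: 'sqli-django-orm', language: 'python', framework: 'django', roles: ['backend'] },
  { id: 'sqli-spring-jpa', language: 'java', framework: 'spring', roles: ['backend'] },
  { id: 'dom-xss-react', language: 'javascript', framework: 'react', roles: ['frontend'] },
  { id: 'ssrf-flask', language: 'python', framework: 'flask', roles: ['backend'] },
];

// Return only the challenges that match the developer's language, frameworks, and role.
function challengesFor(developer, catalog) {
  return catalog.filter((c) =>
    c.language === developer.language &&
    developer.frameworks.includes(c.framework) &&
    c.roles.includes(developer.role));
}

const dev = { language: 'python', frameworks: ['django', 'flask'], role: 'backend' };
console.log(challengesFor(dev, challenges).map((c) => c.id));
// → [ 'sqli-django-orm', 'ssrf-flask' ]
```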

What Actually Works

Guidance and industry data from sources such as SANS, NIST, and BSIMM consistently point to the same set of evidence-based approaches that produce measurable improvements in code security. None of them involve watching a 45-minute video once a year.

Hands-on practice with real code

Developers learn security by doing security. Identifying a vulnerability in a realistic code snippet, understanding why it's dangerous, and selecting the correct fix creates durable knowledge. This is the same principle behind flight simulators and medical residencies — practice in a safe environment before the stakes are real.

Consider this example. A developer encounters this code in a training exercise:

// Vulnerable: user input from the URL is rendered without encoding

const name = new URLSearchParams(window.location.search).get('name');

document.getElementById('greeting').innerHTML = `Welcome, ${name}`;

// Secure: use textContent so the input is treated as text, not markup

const name = new URLSearchParams(window.location.search).get('name');

document.getElementById('greeting').textContent = `Welcome, ${name}`;

When a developer has personally identified the innerHTML sink, understood why it allows script injection, and selected textContent as the safe alternative, they're far more likely to catch this pattern during code review than someone who read about XSS in a slide deck.
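`textContent` covers the case above, but sometimes user input has to be placed into an HTML string, for example when a template is assembled before it reaches the DOM. A minimal sketch of output encoding for that case follows. `escapeHtml` is a hypothetical helper that covers only HTML element content and quoted attribute values; in production a maintained templating or sanitization library is usually the better choice.

```javascript
// Hypothetical helper: encode the five characters that are significant in
// HTML element content and quoted attribute values.
const HTML_ESCAPES = { '&': '&amp;', '<': '&lt;', '>': '&gt;', '"': '&quot;', "'": '&#39;' };

function escapeHtml(value) {
  return String(value).replace(/[&<>"']/g, (ch) => HTML_ESCAPES[ch]);
}

const payload = '<img src=x onerror=alert(1)>';
console.log(`Welcome, ${escapeHtml(payload)}`);
// → Welcome, &lt;img src=x onerror=alert(1)&gt;
```

The encoded string renders as visible text instead of being parsed as an element, which is the same outcome `textContent` gives for direct DOM writes.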

Short, frequent sessions over marathon workshops

Spaced repetition beats cramming. A 10-minute challenge three times a week produces better retention than a four-hour workshop once a quarter. Frequency builds habits; duration just builds fatigue.
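One simple way to schedule short, repeated sessions is a Leitner-style box system: a correct answer moves a topic to a box with a longer review interval, and a wrong answer sends it back to the first box. The sketch below is one possible illustration, not a prescribed method; the intervals and the `review` function are assumptions.

```javascript
// Assumed review intervals in days for boxes 0..3; adjust as needed.
const INTERVAL_DAYS = [1, 3, 7, 14];

// Return the topic's next state after a review on `day` (an integer day counter).
function review(topic, answeredCorrectly, day) {
  const box = answeredCorrectly
    ? Math.min(topic.box + 1, INTERVAL_DAYS.length - 1)
    : 0; // a miss sends the topic back to the most frequent box
  return { ...topic, box, dueOn: day + INTERVAL_DAYS[box] };
}

let xss = { name: 'DOM-based XSS', box: 0, dueOn: 0 };
xss = review(xss, true, 0);   // correct: box 1, due on day 3
xss = review(xss, true, 3);   // correct: box 2, due on day 10
xss = review(xss, false, 10); // wrong: back to box 0, due on day 11
console.log(xss);
```

Topics a developer keeps getting right come up less often, while weak spots come back within a day.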

Immediate feedback loops

When a developer selects the wrong fix, they need to understand why it's wrong — immediately, not at the end of a module. Instant feedback closes the gap between mistake and understanding, reinforcing correct patterns while the context is still fresh.
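A minimal sketch of such a feedback loop is shown below. It assumes a hypothetical challenge format in which every answer option carries its own explanation, so a wrong choice can be explained the moment it is submitted.

```javascript
// Hypothetical challenge definition; every option carries its own explanation.
const challenge = {
  prompt: 'Which change fixes the XSS in the greeting code?',
  options: {
    a: { correct: false, why: 'Stripping "<script>" misses other vectors such as event-handler attributes.' },
    b: { correct: true, why: 'textContent treats the input as text, so no markup is parsed.' },
    c: { correct: false, why: 'Limiting the input length does not stop markup from being parsed.' },
  },
};

// Grade one answer and return the explanation right away.
function grade(ch, choice) {
  const option = ch.options[choice];
  if (!option) throw new Error(`Unknown choice: ${choice}`);
  return { correct: option.correct, feedback: option.why };
}

console.log(grade(challenge, 'a'));
```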

Measurable outcomes tied to real data

The ultimate measure of training effectiveness isn't quiz scores or completion rates — it's whether vulnerability counts go down. Organizations that tie training outcomes to actual vulnerability data from their codebase can identify which topics need more focus and which teams need additional support.
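As an illustration of tying training to vulnerability data, the sketch below compares finding counts per category between two periods. The data shape, field names, and numbers are invented for the example, and raw counts are only a starting point: they do not account for changes in code volume or scanner coverage.

```javascript
// Hypothetical findings export; field names and values are made up for illustration.
const findings = [
  { category: 'injection', period: 'Q1' },
  { category: 'injection', period: 'Q1' },
  { category: 'injection', period: 'Q1' },
  { category: 'xss', period: 'Q1' },
  { category: 'xss', period: 'Q1' },
  { category: 'access-control', period: 'Q1' },
  { category: 'injection', period: 'Q2' },
  { category: 'xss', period: 'Q2' },
  { category: 'xss', period: 'Q2' },
  { category: 'access-control', period: 'Q2' },
  { category: 'access-control', period: 'Q2' },
];

// Count findings per category within one period.
function countByCategory(items, period) {
  const counts = new Map();
  for (const f of items) {
    if (f.period !== period) continue;
    counts.set(f.category, (counts.get(f.category) ?? 0) + 1);
  }
  return counts;
}

// Per-category change from `before` to `after`; negative means fewer findings.
function changeBetween(items, before, after) {
  const b = countByCategory(items, before);
  const a = countByCategory(items, after);
  const result = {};
  for (const c of new Set([...b.keys(), ...a.keys()])) {
    result[c] = (a.get(c) ?? 0) - (b.get(c) ?? 0);
  }
  return result;
}

console.log(changeBetween(findings, 'Q1', 'Q2'));
// → { injection: -2, xss: 0, 'access-control': 1 }
```

In this made-up data, injection findings dropped while access-control findings rose, which would suggest where the next round of exercises should focus.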

The Shift from Annual to Continuous

The most fundamental change organizations need to make is treating security training as a continuous process, not a calendar event. Security threats evolve constantly. New vulnerability classes emerge. Frameworks release patches that change security best practices. An annual training module is outdated before it's even assigned.

Continuous security training means embedding learning into the developer workflow:

- Weekly micro-challenges — Short, focused exercises that take 5-10 minutes and cover one vulnerability pattern.

- Just-in-time learning — Training triggered by real events, like a security finding in a code review or a new CVE affecting the team's stack.

- Progressive difficulty — Start with common vulnerability patterns and advance to complex, multi-step attack chains as competence grows.

- Team-level visibility — Managers and security leads can see which topics their teams have mastered and where gaps remain, allowing targeted reinforcement.
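Just-in-time learning and progressive difficulty can be combined in a small lookup, sketched below. The CWE identifiers are real (CWE-79 is cross-site scripting, CWE-89 is SQL injection, CWE-918 is server-side request forgery), but the challenge ids, the track ordering, and the `nextChallenge` function are hypothetical.

```javascript
// Hypothetical mapping from CWE identifiers to challenge tracks, ordered easy to hard.
const TRACKS = {
  'CWE-79': ['xss-reflected-basics', 'xss-dom-sinks', 'xss-multi-step-chain'],
  'CWE-89': ['sqli-string-concat', 'sqli-orm-raw-queries', 'sqli-second-order'],
  'CWE-918': ['ssrf-basics', 'ssrf-redirects-and-dns'],
};

// Pick the next unsolved challenge in the track that matches the finding.
function nextChallenge(finding, solved) {
  const track = TRACKS[finding.cwe];
  if (!track) return null; // no training content mapped to this weakness yet
  return track.find((id) => !solved.has(id)) ?? null;
}

// A code-review finding triggers the next step in the matching track.
const reviewFinding = { cwe: 'CWE-89', file: 'orders/search.js' };
const solvedSoFar = new Set(['sqli-string-concat']);
console.log(nextChallenge(reviewFinding, solvedSoFar));
// → sqli-orm-raw-queries
```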

Security training doesn't fail because developers are unwilling to learn. It fails because organizations default to the easiest, cheapest, most auditor-friendly approach and then wonder why the same vulnerabilities keep shipping. The evidence points in one direction: training that is hands-on, language-specific, continuous, and measurable. The organizations that adopt this model don't just produce better audit reports; they produce more secure software.