The EU Cyber Resilience Act, Regulation (EU) 2024/2847, is the first law anywhere on earth that turns secure development into a legal obligation for every company selling digital products into the European single market. It entered into force on 10 December 2024. Its mandatory vulnerability reporting obligations apply from 11 September 2026. Full application, which brings the obligations that change what your engineers actually do every day, arrives on 11 December 2027. This guide walks through what the regulation requires of developers and engineering organizations, what Annex I calls out by name, how the September 2026 and December 2027 deadlines differ, what documentation regulators expect you to hold for ten years, how the open-source carve-outs work, and what a credible readiness posture looks like in the roughly eighteen months between now and full application.

Why September 2026 Is the First Real Deadline

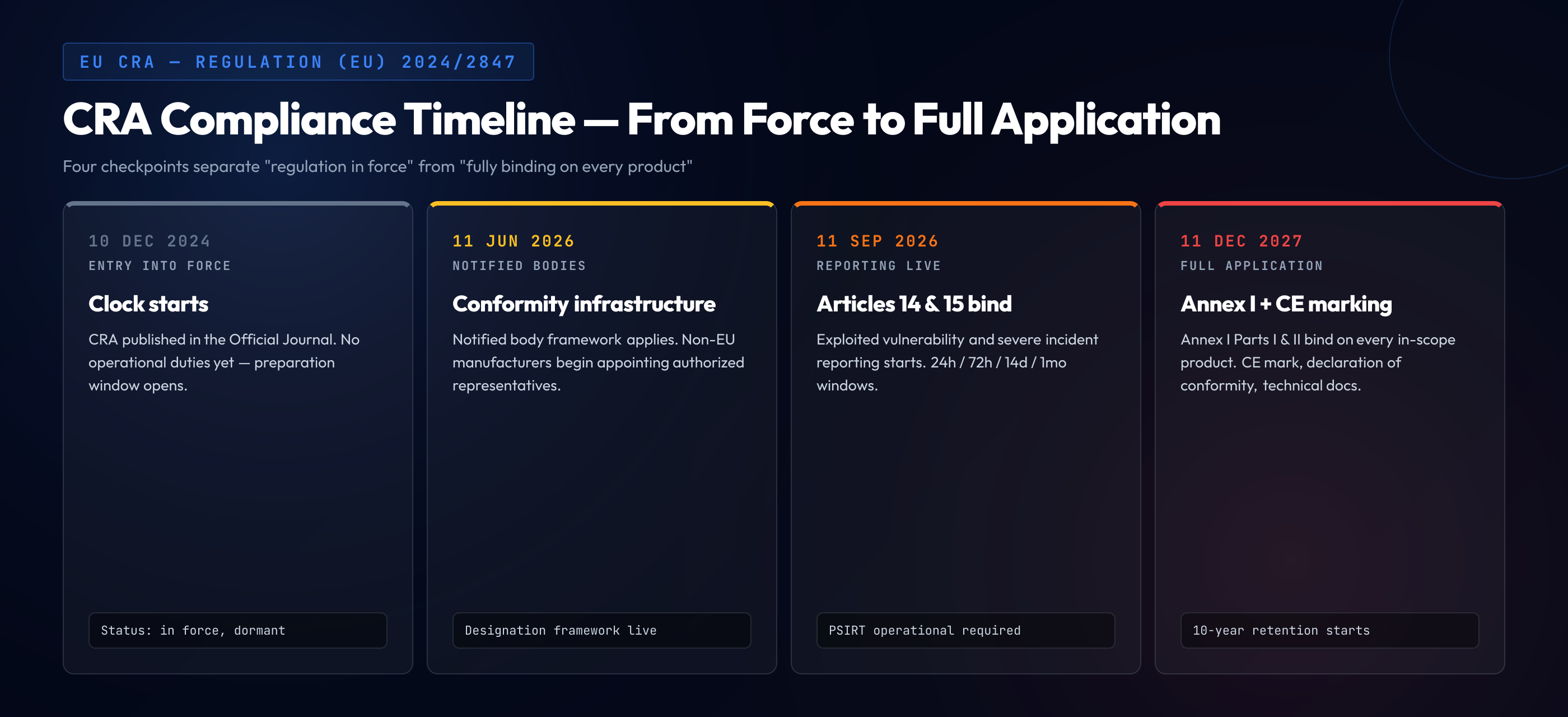

The Cyber Resilience Act has a staggered timeline on purpose. The text was formally adopted in October 2024 and published in the Official Journal of the European Union on 20 November 2024. The regulation's official entry into force was 10 December 2024, but almost nothing operational happened on that date. The CRA's drafters understood that hardware and software vendors needed time to reorganize engineering, compliance, and legal operations before the rules actually bit. So the text sets two forward-dated application points. 11 September 2026 is when the manufacturer reporting obligations of Article 14 become binding. 11 December 2027 is when the rest of the regulation, including the Annex I baseline cybersecurity requirements and the conformity assessment machinery, applies in full.

Most engineering leaders read "full application in December 2027" and conclude that 2027 is the deadline they need to plan around. That reading misses the substance of what September 2026 changes. From September 2026 onward, any manufacturer of a product with digital elements placed on the EU market has a legally binding obligation to report actively exploited vulnerabilities and severe incidents to ENISA and the relevant national CSIRTs within specific time windows: 24 hours for an early warning and 72 hours for a fuller notification with details. The final report is due within 14 days after a corrective or mitigating measure is available for an exploited vulnerability, and within one month of the incident notification for a severe incident. Those obligations apply to every product already on the market, not just products shipped after the date. Organizations discovering in October 2026 that they have no internal process to detect, classify, and report exploited vulnerabilities within the Article 14 windows are already in breach of the regulation, regardless of whether their conformity assessments for Annex I are in place yet.

September 2026 is the date that forces most companies to have at least a minimum viable internal vulnerability handling function — the intake, triage, communication, and regulatory reporting pipeline — operational and tested. December 2027 is when the rest of the engineering organization has to show that the products it ships were developed against the Annex I baseline. Companies that assume they can wait until mid-2027 to begin preparing will discover in late 2026 that the reporting obligations expose gaps in their detection and classification capabilities that take months, not weeks, to fill.

What the Cyber Resilience Act Actually Is

The Cyber Resilience Act is a horizontal regulation. That means its scope is defined by the type of product, not by the sector the product is sold into. Anything that meets the definition of "product with digital elements" (hardware or software placed on the market whose intended use includes data connection to a device or network) falls inside the regulation unless it is explicitly excluded. The exclusions are narrow and sector-specific: medical devices already regulated under Regulation (EU) 2017/745, motor vehicles under Regulation (EU) 2019/2144, civil aviation products under Regulation (EU) 2018/1139, and marine equipment under Directive 2014/90/EU. A few categories of open-source software are excluded too, and we cover that below. Everything else is inside the CRA: the laptop, the router, the IoT sensor, the industrial controller, the SaaS that runs in a customer's browser, the mobile app, the desktop application, the firmware, the library, the framework, the operating system.

The horizontal scope is a feature, not an accident. The Commission's analysis of the pre-CRA European cybersecurity posture found that the patchwork of sector-specific rules left most connected products with no mandatory security baseline at all. The CRA fixes that by imposing one baseline on everything. The trade-off is that the baseline has to be written at a level of generality that applies equally to a microcontroller firmware, a cloud-hosted web application, and a desktop office suite. Annex I does exactly that — it expresses requirements in outcome terms ("the product shall be designed, developed, and produced in such a way that it ensures an appropriate level of cybersecurity based on the risks") and leaves manufacturers to interpret those outcomes for their specific product class, subject to risk-based justification and — for important and critical products — third-party conformity assessment.

Two structural points shape how the rest of the regulation reads. First, the CRA operates on the CE marking model familiar from other EU product regulations. To sell a digital product in the EU, the manufacturer must perform a conformity assessment and affix the CE mark. For most products, self-assessment is permitted. For "important products" (Annex III class I and II) and "critical products" (Annex IV), the assessment route is more rigorous — internal production control plus EU-type examination for Annex III class II, and mandatory European cybersecurity certification for Annex IV. This means that products like password managers, identity management systems, VPNs, network management tools, and certain categories of industrial control gear face a meaningfully higher compliance bar than a typical business SaaS.

Second, the CRA creates obligations for manufacturers, importers, and distributors separately, which matters for software supply chains. A framework maintainer is a manufacturer when they place the framework on the market. A company shipping a commercial product that embeds that framework is a manufacturer of the embedded product. A reseller in an EU country is a distributor. Each role has distinct obligations, and the manufacturer role carries the bulk of the regulation: it is the manufacturer whose engineering organization has to implement Annex I Part I, handle vulnerabilities under Annex I Part II, produce the technical documentation, and manage the conformity assessment and declaration of conformity.

Annex I Part I: The Baseline Cybersecurity Requirements

Annex I is where the CRA stops being abstract and starts telling developers what to do. The annex is split into two parts. Part I lists cybersecurity requirements that the product itself must satisfy. Part II lists vulnerability handling requirements that the manufacturer's process must satisfy. The two parts are separate sets of obligations and are both mandatory.

Part I contains thirteen requirements. They are written in outcome language but they translate into a specific set of engineering decisions that must be visible in the product. The complete list — in the regulation's numbering — is worth walking through because every one will surface in conformity assessments and every one has to be answered in the technical documentation.

1.1 — Appropriate level of cybersecurity based on risks. The product, by design, must reach a level of cybersecurity proportionate to its intended use and risk profile. This is the umbrella clause that the remaining twelve implement. The documentation must include the risk assessment that justifies the design choices.

1.2 — No known exploitable vulnerabilities. Products are placed on the market without known exploitable vulnerabilities. This forces shipment gates: a product released with a known exploitable vulnerability in one of its dependencies, for example an open critical CVE that is reachable in the shipped configuration, is in breach from the moment it ships. SCA tooling and dependency hygiene move from nice-to-have to shipment blockers under this requirement.

1.3 — Secure by default configuration. Default configurations must be secure. Products that ship with default admin passwords, publicly reachable administrative interfaces, or broad default network permissions fail this requirement on day one. The documentation must list the default configuration and justify it.

1.4 — Authentication, identity, and access management. Products must protect against unauthorized access by appropriate authentication and access controls. For products that handle credentials, the requirement extends to implementing state-of-the-art credential handling and authentication protocols. "Sign in with a four-digit PIN" is unlikely to pass on a product that could reasonably use a stronger mechanism.

1.5 — Confidentiality protection. Data at rest and in transit must be protected by state-of-the-art cryptographic mechanisms. Products using deprecated cryptography (MD5, SHA-1 for integrity-sensitive purposes, unpadded RSA, plain HTTP where HTTPS is feasible) cannot meet this requirement. The documentation must identify the cryptographic primitives in use.

1.6 — Integrity protection. Stored and transmitted data and commands must be protected against unauthorized manipulation. In practice this means integrity-protected channels, signed updates, and tamper-evident logs where manipulation of the log would matter.

1.7 — Minimization of processed data. The product must process only data that is adequate, relevant, and limited to what is necessary for its intended use. This is a data-minimization requirement that overlaps with GDPR but exists in its own right under the CRA for non-personal data as well.

1.8 — Availability of essential and basic functions. The product must protect the availability of its essential and basic functions, including against denial-of-service conditions. This is a resilience requirement, not a high-availability one — it concerns graceful degradation, not four nines.

1.9 — Minimization of negative impact on other devices. The product must be designed so that it does not negatively affect the availability of services provided by other devices or networks. A product that by design generates excessive traffic, interferes with other devices on a shared network, or consumes shared resources beyond its specified need fails this clause.

1.10 — Attack surface reduction. Attack surfaces, including external interfaces, must be minimized. Every exposed port, API, administrative endpoint, debug interface, and legacy protocol that ships enabled is a liability under this requirement unless its presence is specifically justified.

1.11 — Exploitation impact reduction. Design and development techniques and mitigations must reduce the impact of incidents. This is the "defense in depth" clause — segmentation, privilege separation, sandboxing, memory-safety technologies, exploit mitigations at the build and runtime levels.

1.12 — Monitoring and logging. Security-relevant information and internal activity must be recorded and monitored, through user-friendly mechanisms where applicable, including the possibility for users to opt out of data collection. Products that cannot be observed cannot be defended, and the regulation treats that as a design defect.

1.13 — Secure update mechanism. The product must provide the possibility for users to securely and easily remove their data on request, and for vulnerability fixes to be distributed via security updates. The update mechanism itself must be secure — automatic where possible, authenticated, integrity-protected, and auditable. "We do not ship security updates for this product line" is not a compliant posture.

Reading the thirteen as a set, a pattern emerges. Annex I Part I is, in effect, a codification of the secure-by-design and secure-by-default principles that the US CISA, the UK NCSC, and ENISA have been publishing joint guidance on since 2023. Nothing in the list is a surprise to anyone who has been running a mature secure development program. The shift is that in the EU after December 2027, every one of the thirteen is a legal obligation, not a best practice — and the organization's answer to each one must be written down in technical documentation that regulators can request and audit.
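Requirement 1.2 is the one that most directly turns into a pipeline check. The sketch below shows one way a release gate could be expressed, assuming the SCA tool's findings have already been triaged for exploitability. The `Finding` type, its fields, and the CVE identifiers are hypothetical placeholders for illustration, not the output format of any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    component: str     # dependency name, e.g. taken from the SBOM
    cve_id: str        # identifier reported by the SCA tool
    exploitable: bool  # triage verdict for this product and configuration
    fixed: bool        # True once a patched version is in the build


def release_blockers(findings):
    """Return the findings that should stop a release: exploitable and unfixed."""
    return [f for f in findings if f.exploitable and not f.fixed]


def may_ship(findings):
    """A release passes the gate only when no blocking finding remains."""
    return not release_blockers(findings)


# Placeholder data: one blocking finding, one triaged as not exploitable.
findings = [
    Finding("libexample", "CVE-0000-0001", exploitable=True, fixed=False),
    Finding("libother", "CVE-0000-0002", exploitable=False, fixed=False),
]
print(may_ship(findings))
for blocker in release_blockers(findings):
    print("blocked by", blocker.component, blocker.cve_id)
```

The triage verdict is deliberately an input rather than something the gate computes. Whether a vulnerability is exploitable in a given product is a judgment the risk assessment has to justify, and the gate only enforces the recorded answer.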

Annex I Part II: Vulnerability Handling Requirements

Part II addresses the manufacturer's process, not the product. It has eight requirements. Where Part I says "the product shall", Part II says "the manufacturer shall". Both sets have to be documented; both are in scope of the conformity assessment.

2.1 — Identify and document vulnerabilities. Identify and document vulnerabilities and components in the product, including by drawing up a software bill of materials (SBOM) in a commonly used and machine-readable format. SBOMs are explicitly required under the CRA. The SBOM must be maintained, not just generated once at shipment. Formats like SPDX and CycloneDX are the de facto accepted options.

2.2 — Address and remediate vulnerabilities without delay. Vulnerabilities must be addressed and remediated without delay by providing security updates that are separated from functional updates where feasible. The "without delay" clause does not give a specific timeline, but the Part II process has to tie into the Article 14 reporting obligations, which do have specific windows.

2.3 — Regular security testing and reviews. Effective and regular tests and reviews of the security of the product. This is where SAST, DAST, SCA, fuzzing, penetration testing, and code review earn their explicit mention. A manufacturer that cannot show a repeatable testing cadence with evidence of findings and remediation is not meeting this requirement.

2.4 — Publicly disclose information about fixed vulnerabilities. Once a security update is available, the manufacturer must publicly disclose information about the fixed vulnerabilities, including a description, information allowing users to identify the product, the impacts, the severity, and clear information helping users to remediate. Advisory formats like CSAF are the emerging standard.

2.5 — Coordinated vulnerability disclosure policy. The manufacturer must have a policy on coordinated vulnerability disclosure, published publicly. This is a full CVD policy — intake mechanism, triage process, researcher engagement, embargo terms.

2.6 — Enable information sharing on vulnerabilities. Take measures to facilitate the sharing of information about potential vulnerabilities, including by providing a contact address for reporting vulnerabilities discovered in the product. A security.txt file, a dedicated PSIRT address, or a bug bounty intake is the implementation pattern.

2.7 — Secure distribution of updates. The manufacturer must provide mechanisms to securely distribute updates, so that vulnerabilities are fixed or mitigated in a timely manner and, where applicable for security updates, automatically.

2.8 — Patches without delay after vulnerability identification. Ensure that, once a security update is available, it is disseminated without delay and, unless otherwise agreed between a manufacturer and a business user in relation to a tailor-made product, free of charge, accompanied by advisory messages providing users with the relevant information, including on potential action to be taken.
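To make requirement 2.1 concrete, here is a minimal sketch of a machine-readable SBOM written in the CycloneDX JSON style. The top-level field names follow the CycloneDX JSON layout as commonly documented, but this example has not been validated against the official schema, and the `build_sbom` helper and the sample component are illustrative assumptions. A real pipeline would more likely use a dedicated SBOM generator than hand-built dictionaries.

```python
import json


def build_sbom(components):
    """Build a minimal CycloneDX-style document from (name, version, purl) tuples."""
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        "version": 1,  # document revision; increment when the SBOM is regenerated
        "components": [
            {"type": "library", "name": name, "version": version, "purl": purl}
            for name, version, purl in components
        ],
    }


# Sample component for illustration only.
sbom = build_sbom([("requests", "2.32.3", "pkg:pypi/requests@2.32.3")])
print(json.dumps(sbom, indent=2))
```

Regenerating the document on every release, rather than once at first shipment, is what keeps the SBOM maintained in the sense the requirement describes.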

The Two Dates That Matter: 11 September 2026 and 11 December 2027

The CRA's staggered application timeline is the single most misread part of the regulation. Engineering leaders who hear "fully applicable December 2027" and plan accordingly are planning around the wrong date for their first wave of work. The structure is that Article 14, which covers reporting of both actively exploited vulnerabilities and severe incidents, applies from 11 September 2026. The rest of the regulation (the Annex I obligations, the conformity assessment, the CE marking, the technical documentation) applies from 11 December 2027. Certain earlier provisions, on the notification of conformity assessment bodies and the framework around authorized representatives, apply from 11 June 2026. The gap between September 2026 and December 2027 is fifteen months, and the work distribution across those fifteen months matters.

From 11 September 2026, every manufacturer of an in-scope product has to handle two reporting streams, both set out in Article 14. The actively exploited vulnerability stream requires an early warning to ENISA and the designated CSIRT within 24 hours of the manufacturer becoming aware of exploitation, a vulnerability notification with corrective or mitigating measures within 72 hours, and a final report within 14 days after a corrective or mitigating measure is available. The severe incident stream requires an early warning within 24 hours of awareness, an incident notification within 72 hours, and a final report within one month of the incident notification. Both streams require ongoing communication throughout the incident lifecycle.
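The windows in the two reporting streams can be sketched as simple date arithmetic. This is a planning aid under stated assumptions, not a legal calculator: the function names are hypothetical, all timestamps are taken to be timezone-aware UTC, and "one month" is interpreted as the same calendar day in the following month, clamped to the month's last day. How time limits are counted formally is a question for legal counsel.

```python
import calendar
from datetime import datetime, timedelta, timezone


def add_one_month(ts):
    """Same day next month, clamped to the last day of shorter months."""
    year = ts.year + (ts.month // 12)
    month = ts.month % 12 + 1
    day = min(ts.day, calendar.monthrange(year, month)[1])
    return ts.replace(year=year, month=month, day=day)


def vulnerability_deadlines(aware_at, fix_available_at=None):
    """Deadlines for the actively exploited vulnerability stream."""
    deadlines = {
        "early_warning": aware_at + timedelta(hours=24),
        "notification": aware_at + timedelta(hours=72),
    }
    if fix_available_at is not None:
        # The final report clock starts when a corrective or mitigating measure exists.
        deadlines["final_report"] = fix_available_at + timedelta(days=14)
    return deadlines


def incident_deadlines(aware_at, notification_sent_at=None):
    """Deadlines for the severe incident stream."""
    deadlines = {
        "early_warning": aware_at + timedelta(hours=24),
        "notification": aware_at + timedelta(hours=72),
    }
    if notification_sent_at is not None:
        # The final report clock starts at the incident notification.
        deadlines["final_report"] = add_one_month(notification_sent_at)
    return deadlines


aware = datetime(2026, 9, 14, 8, 0, tzinfo=timezone.utc)
fix = datetime(2026, 9, 20, 12, 0, tzinfo=timezone.utc)
for name, due in vulnerability_deadlines(aware, fix).items():
    print(name, due.isoformat())
```

Note that the two final report clocks start from different events, which is why each function takes its own second argument instead of sharing one.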

Operationalizing those streams is non-trivial. The engineering organization has to know within the first 24 hours that a vulnerability in a product it shipped is being actively exploited, or that a severe incident affecting the security of its product has occurred. That implies external vulnerability intelligence ingestion, a working PSIRT with after-hours coverage, a legal and communications workflow that is pre-approved to send regulatory notifications to foreign regulators without weeks of internal escalation, and technical documentation on every shipped product that makes identifying the affected units and the affected customers possible within hours. A manufacturer of fifty product lines with no internal security operations function cannot stand this capability up in a month.
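One small piece of that capability, matching an exploitation feed against what was actually shipped, can be sketched as a set intersection. The sketch assumes two inputs that the text implies but does not specify: a set of CVE identifiers currently reported as exploited, and a per-product inventory of unresolved CVEs derived from SBOM and SCA data. The function name, product names, and identifiers are hypothetical.

```python
def affected_products(exploited_cves, open_cves_by_product):
    """Map each product to the exploited CVEs that are still open in it."""
    exploited = set(exploited_cves)
    hits = {}
    for product, cves in open_cves_by_product.items():
        overlap = sorted(exploited & set(cves))
        if overlap:
            hits[product] = overlap
    return hits


# Placeholder inventory: product line -> CVEs open in its shipped components.
inventory = {
    "gateway-fw": {"CVE-0000-0001", "CVE-0000-0007"},
    "mobile-app": {"CVE-0000-0003"},
    "desktop-suite": set(),
}
feed = {"CVE-0000-0007", "CVE-0000-0009"}

print(affected_products(feed, inventory))
```

The lookup itself is trivial. The hard part is keeping the inventory accurate for every shipped version, which is why the SBOM has to be maintained and not just generated once.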

From 11 December 2027, the rest of the regulation applies. Products placed on the EU market from that date must be accompanied by a CE mark, a declaration of conformity, and, if requested by market surveillance authorities, the technical documentation demonstrating Annex I compliance. Products already on the market before December 2027 that undergo a "substantial modification" after the date fall into scope as if newly placed. Products with no substantial modification after the date are not retroactively in scope for Annex I, but they remain in scope for the Article 14 reporting obligations that started in September 2026. There is no grandfather clause for the reporting stream.

- 10 December 2024. CRA entered into force. No operational obligations yet. Clock starts for internal preparation.

- 11 June 2026. Conformity assessment body notification framework applies. Designation of authorized representatives becomes relevant for non-EU manufacturers.

- 11 September 2026. Article 14 reporting obligations apply for actively exploited vulnerabilities and severe incidents. The 24h / 72h / 14d and 24h / 72h / 1mo windows become legally binding.

- 11 December 2027. Full application. Annex I Parts I and II binding on all in-scope products placed on the market from this date. CE marking required. Technical documentation must exist.

- Ongoing after 11 December 2027. The 10-year documentation retention obligation runs from the moment the product is placed on the market. Vulnerability handling obligations run for the product's support period (minimum five years unless a shorter lifetime is justified).

What "Secure Development" Means Under the CRA

The phrase secure development appears in the CRA's recitals and is the organizing concept behind Annex I as a whole. Recital 55 and the operational clauses make clear that the regulation expects manufacturers to integrate cybersecurity considerations into every phase of the product lifecycle — planning, design, development, production, delivery, and maintenance. This is not a new idea in the security field, but the CRA is the first EU regulation to make it legally binding across every connected product category. Understanding what the regulation expects under that umbrella concept is the difference between building a compliant program and building a documentation theater program.

At the planning and design phase, the CRA expects a documented risk assessment. The manufacturer identifies the product's intended use, the threat environment, the protection targets, and the security objectives that the rest of the development process will work toward. This risk assessment becomes part of the technical documentation and is the justification for the specific interpretation of each Annex I Part I requirement. A product shipping in a constrained embedded form factor can justify a different cryptographic choice than a server-side service — but only if the risk assessment explains the reasoning.

At the development phase, secure development means applying secure coding techniques to the languages and frameworks in use, running security testing during the build process, performing code review with security criteria, and managing third-party dependencies with tooling that surfaces known vulnerabilities before shipment. This is the point in the lifecycle where the practical investment in developer capability matters most. The regulation does not prescribe a specific training program — the CRA is not a training regulation the way PCI DSS 6.2.2 is — but the Annex I Part I obligation to place a product on the market without known exploitable vulnerabilities and with attack surface minimized cannot be met unless the developers writing the code know how to achieve those outcomes in the languages they use. In practice this means application security training that is language-specific and paired with hands-on practice on the vulnerability classes most relevant to the product.

At the production phase, the regulation expects configuration management, supply chain integrity controls, and reproducible build practices appropriate to the product's risk class. For software, this is CI pipeline integrity, signed artifacts, provenance records (SLSA levels are the emerging reference), and SBOM generation as part of the release process.
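As a small illustration of artifact integrity, the sketch below checks a release artifact against a digest recorded at build time. It assumes the provenance record stores a SHA-256 hex digest, and the function names are hypothetical. A digest comparison only detects changes to the artifact. It is not a substitute for cryptographic signing, which also authenticates who produced it.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the artifact bytes."""
    return hashlib.sha256(data).hexdigest()


def matches_provenance(artifact: bytes, recorded_digest: str) -> bool:
    """Compare the artifact's digest with the recorded one in constant time."""
    return hmac.compare_digest(sha256_hex(artifact), recorded_digest.lower())


artifact = b"example release artifact"
recorded = sha256_hex(artifact)  # in practice, written by the build pipeline

print(matches_provenance(artifact, recorded))
print(matches_provenance(artifact + b" tampered", recorded))
```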

At the delivery phase, the default configuration the product ships with has to be secure (Annex I Part I 1.3), the accompanying user documentation has to include the security-relevant information the regulation enumerates (Annex II), and the update mechanism has to be operable from the moment the product is installed.

At the maintenance phase, the manufacturer runs the Part II obligations — vulnerability handling, coordinated disclosure, security updates, public advisories — for the product's entire support period. The CRA sets a minimum expected support period of five years unless the manufacturer can justify a shorter lifetime based on the product's intended use. A product positioned as "long-lived industrial equipment" cannot justify a two-year support period.

This lifecycle view is what distinguishes a CRA-ready secure development program from a point-in-time compliance exercise. The regulation looks for evidence that security was considered at each phase, not just at a final audit. Manufacturers that treat cybersecurity as a gate to pass before shipment will find the technical documentation requirement exposes the absence of earlier-phase decisions. Manufacturers that have integrated security into design, build, and maintenance will find the documentation is largely a matter of writing down what they already do.

Documentation, Conformity, and the 10-Year Retention Bar

Every manufacturer placing an in-scope product on the EU market must assemble, hold, and on request produce a technical documentation package demonstrating conformity with Annex I. Annex VII enumerates the required contents. The package is not optional and is not a one-time artifact — it has to be kept up to date across the product's lifecycle and retained for at least ten years after the product is placed on the market, or for the product's support period, whichever is longer.
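The retention rule reduces to taking the later of two dates. A minimal sketch, assuming the placement date and the end of the support period are both known as calendar dates (the function names are hypothetical):

```python
from datetime import date


def add_years(d, years):
    """Shift a date by whole years, mapping 29 February to 28 February if needed."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:  # 29 February in a non-leap target year
        return d.replace(year=d.year + years, day=28)


def retention_end(placed_on_market, support_end):
    """Documentation is kept for ten years or the support period, whichever is longer."""
    return max(add_years(placed_on_market, 10), support_end)


print(retention_end(date(2027, 12, 11), date(2032, 12, 11)))
print(retention_end(date(2027, 12, 11), date(2040, 1, 1)))
```

In the first call the five-year support period ends well before the ten-year mark, so the ten-year rule governs. In the second, a long support period pushes the retention date out further.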

Annex VII is specific about what the documentation contains. At minimum: a general description of the product; a design and manufacturing description; an assessment of the cybersecurity risks against which the product is designed, developed, produced, delivered, and maintained; the list of Annex I requirements applicable and how they are satisfied; the test reports demonstrating conformity; descriptions of the vulnerability handling process; an EU declaration of conformity; and the harmonized standards applied, or if no harmonized standards are applied, a description of the solutions adopted to meet the essential requirements.

Reading this list carefully reveals what makes the CRA documentation requirement structurally different from most compliance documentation. The regulation asks for evidence of decisions, not just evidence of outcomes. "The product uses AES-256-GCM" is an outcome. "The product uses AES-256-GCM because the risk assessment identified confidentiality of stored user records as a primary protection target, the threat model includes offline database exfiltration, the performance budget permits AEAD, and no deprecated alternatives were appropriate" is a decision. The documentation package has to capture the decision-level reasoning for each applicable Annex I requirement.
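One way to keep the outcome and the decision together is to record them in a single structured entry and flag entries where the reasoning is missing. The sketch below is an assumption about how a team might organize this, not a format the regulation prescribes. The `DecisionRecord` type and its field names are hypothetical, and the sample values restate the AES-256-GCM example above.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionRecord:
    requirement: str             # which Annex I item this entry answers
    outcome: str                 # what the product does
    protection_target: str = ""  # what the risk assessment says is being protected
    threats: list = field(default_factory=list)
    constraints: str = ""
    rejected_alternatives: list = field(default_factory=list)

    def missing_reasoning(self):
        """Names of decision-level fields that are still empty."""
        fields = {
            "protection_target": self.protection_target,
            "threats": self.threats,
            "constraints": self.constraints,
            "rejected_alternatives": self.rejected_alternatives,
        }
        return [name for name, value in fields.items() if not value]


outcome_only = DecisionRecord("Confidentiality", "The product uses AES-256-GCM")
full = DecisionRecord(
    requirement="Confidentiality",
    outcome="The product uses AES-256-GCM",
    protection_target="Confidentiality of stored user records",
    threats=["Offline database exfiltration"],
    constraints="Performance budget permits AEAD",
    rejected_alternatives=["Deprecated ciphers and modes"],
)

print(outcome_only.missing_reasoning())
print(full.missing_reasoning())
```

A check like `missing_reasoning` can run in CI against the documentation source, so that an entry stating only the outcome is caught at the point where the design choice is made.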

The ten-year retention obligation interacts with engineering team turnover in a way that most companies underestimate. Suppose a product ships in December 2027 with an Annex I documentation package, and half of the engineering team that prepared it has rotated out by 2030. The documentation then has to be readable, auditable, and maintainable by the team that inherits it. Organizations that produce documentation as a last-minute artifact, written by one or two senior engineers and filed in a share that nobody opens, will find those documents unusable by the team that owns the product when the first regulator request arrives in year four.

For important products (Annex III) and critical products (Annex IV), third-party conformity assessment means a notified body reviews the documentation and either issues an EU-type examination certificate or refuses it. The notified body will ask for evidence that the security testing described in the package actually happened (test reports, tool outputs, remediation evidence) and that the secure coding training or equivalent competence development described for the development team was delivered to the people whose names appear on the commits. Documentation that claims practices the organization does not actually perform creates a worse regulatory exposure than documentation that honestly describes a more limited program.

Open Source Maintainers: The Stewarding Carve-Out

The CRA's treatment of free and open-source software went through several iterations during the 2023-2024 legislative process. The final text distinguishes three situations. Free and open-source software released outside the course of a commercial activity is excluded from the manufacturer obligations entirely. Free and open-source software that is integrated into a commercial product is in scope indirectly — the commercial manufacturer is the one accountable for the conformity of the integrated product, including the open-source components it embeds. And a new category of "open-source software steward" was introduced to sit between these two: organizations that systematically support the development of open-source components used in commercial products have a reduced set of obligations focused on cybersecurity policy and cooperation with authorities, without the full manufacturer conformity burden.

Pure volunteer maintainers of an open-source library released on GitHub with no commercial tie are not CRA manufacturers. The regulation is explicit on this. An individual developer who publishes a utility library on npm and has no commercial engagement with its use does not have to stand up a CVD policy, publish SBOMs, or handle Article 14 reporting. This is the carve-out that the OpenSSF, Linux Foundation, Apache, and Eclipse lobbied hardest for during the drafting process, and it made it into the final regulation substantially intact.

Organizations that are not individual hobbyists but exist to systematically support open-source development — foundations, employer-backed consortia, corporate open-source program offices that release software as a service to the ecosystem — fall into the steward category. The steward obligations are lighter than the manufacturer obligations. Article 24 describes them: implement and document a cybersecurity policy fostering the development of secure products with digital elements; facilitate the voluntary reporting of vulnerabilities; cooperate with market surveillance authorities on request.

Where it gets complicated is the gray zone. A company that maintains an open-source framework while also selling a commercial product built on top of that framework is both a steward of the framework and a manufacturer of the commercial product. A company that contributes to upstream projects as part of its commercial product development is not a steward of those upstream projects, but its commercial product is still subject to the full manufacturer regime including whatever it embeds from upstream. The practical effect is that most companies will interact with the CRA as manufacturers, with the steward category carved out for a specific subset of organizations whose primary mission is open-source support.

For engineering teams that depend heavily on open-source components — which is to say, nearly all of them — the operational consequence of the carve-out is that the commercial manufacturer bears the burden of establishing the security posture of the combined product, including the third-party components. This is why SBOM and SCA tooling become table-stakes rather than optional under the CRA. If a critical vulnerability is found in an open-source dependency, the commercial manufacturer is the entity that has to report the exploitation under Article 14, deliver the security update under Annex I Part II 2.8, and document the remediation in the technical documentation. The upstream maintainer — if they are a pure volunteer — has no CRA obligation in that chain.

Penalties and Market Consequences

The CRA's penalty regime scales with the severity of the breach and the size of the entity. Non-compliance with the essential cybersecurity requirements of Annex I or the vulnerability handling obligations exposes the manufacturer to administrative fines of up to 15 million euros or 2.5% of total worldwide annual turnover for the preceding financial year, whichever is higher. Non-compliance with other obligations of the regulation — for example, the CE marking and declaration of conformity requirements — exposes the manufacturer to fines of up to 10 million euros or 2% of turnover. Providing incorrect, incomplete, or misleading information to notified bodies or market surveillance authorities can incur fines up to 5 million euros or 1% of turnover.
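Because each tier is "fixed amount or percentage of turnover, whichever is higher", the ceiling is a simple maximum. The sketch below uses the three tiers as stated above and integer arithmetic in basis points to avoid floating-point rounding. The tier keys and function name are hypothetical labels, and the result is the statutory ceiling, not a prediction of any actual fine.

```python
# (fixed ceiling in euros, share of worldwide annual turnover in basis points)
FINE_TIERS = {
    "essential_requirements": (15_000_000, 250),  # 2.5%
    "other_obligations": (10_000_000, 200),       # 2%
    "misleading_information": (5_000_000, 100),   # 1%
}


def fine_ceiling(tier, worldwide_turnover_eur):
    """Upper bound of the fine: the higher of the fixed amount and the turnover share."""
    fixed, basis_points = FINE_TIERS[tier]
    return max(fixed, worldwide_turnover_eur * basis_points // 10_000)


# A manufacturer with 1 billion euros in turnover versus one with 100 million.
print(fine_ceiling("essential_requirements", 1_000_000_000))
print(fine_ceiling("essential_requirements", 100_000_000))
```

For the larger company the percentage governs (25 million euros). For the smaller one the fixed amount does (15 million euros), which is why the fixed floor matters most to mid-sized manufacturers.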

Administrative fines are one enforcement lever. The more immediate one for most organizations is market surveillance. National authorities have the power to order the withdrawal of non-compliant products from the EU market, require corrective measures, and prohibit the placing on the market of products that fail the conformity requirements. A product withdrawal order effectively terminates the product's presence in the single market until the manufacturer demonstrates conformity. For a SaaS product accessed from the EU, the withdrawal is typically implemented by requiring the provider to block EU access or to remediate the non-compliance within a defined window.

The reputational and commercial consequences of a withdrawal order or a high-severity penalty will in most cases exceed the direct regulatory cost. EU procurement — public sector and regulated industry — will increasingly require evidence of CRA conformity as a prequalification condition. Large EU customers will push CRA compliance terms into supplier contracts, with audit rights and indemnification clauses that make a single regulator finding cascade across the customer base. By 2028, CRA compliance evidence will be a standard checkbox in RFPs from EU buyers, and non-compliance will not just expose organizations to fines — it will exclude them from the market.

A Readiness Checklist: What to Have in Place by Each Deadline

An engineering organization that starts in mid-2026 has a feasible path to both the September 2026 and December 2027 deadlines. An organization that starts in 2027 does not. The checklist below is the minimum viable set: not a maturity aspiration, just the items whose absence is a direct compliance gap. The first seven items need to be in place by 11 September 2026; the remainder are due by 11 December 2027.

- Named PSIRT or equivalent function. Operational, with after-hours coverage, escalation paths documented. The function that receives external vulnerability reports, triages exploitation signals, and coordinates internal response.

- Exploited vulnerability detection pipeline. Ingestion of external intelligence (CVE feeds, exploitation trackers, customer reports, honeypot or telemetry data) that will surface active exploitation of vulnerabilities in shipped products within 24 hours.

- Regulatory notification playbook. Pre-approved template and workflow for submitting Article 14 early warnings (24h), vulnerability notifications (72h), and final reports (14 days after a corrective or mitigating measure is available) to ENISA and the relevant CSIRT. Legal and communications pre-aligned on content.

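- Severe incident playbook. Parallel workflow for severe incidents, which Article 14(3) and (4) covers alongside exploited vulnerabilities (Article 15 concerns voluntary reporting): early warning (24h), incident notification (72h), final report (within one month of the incident notification). Tied to internal incident classification criteria that identify which internal incidents meet the CRA's "severe" threshold.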

- Product inventory with identifiable units. A list of products placed on the EU market, with product identifiers specific enough to allow reporting to name the affected products unambiguously.

- Coordinated vulnerability disclosure policy, published. Annex I Part II 2.5. Formally required only once Annex I applies in December 2027, but establishing it in 2026 pays off in intake quality and is a prerequisite for the reporting workflow to function.

- Dry-run of the notification workflow. At least one tabletop exercise executed end-to-end, with the regulatory notification templates completed against a plausible scenario, before the September 2026 date goes live.

- Risk assessment for every in-scope product. Documented, versioned, referenced by the technical documentation package. Covers threat model, protection targets, security objectives, and the rationale for the Annex I interpretation.

- Annex I Part I conformance evidence for every product. One row per applicable requirement (1.1 through 1.13), with the specific design or implementation decision that satisfies it and the test evidence that verifies it.

- Annex I Part II process evidence. Documented vulnerability handling process, SBOM generation and maintenance practice, security testing cadence, disclosure policy, update mechanism, with artifacts demonstrating each.

- SBOM for every product. Machine-readable (SPDX or CycloneDX), current, regenerated at every release, retained for 10 years.

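- Conformity assessment completed. Self-assessment (internal control) for most products; third-party assessment through a notified body (EU-type examination plus conformity to type, or full quality assurance) for Annex III class II important products, and for class I products where harmonised standards are not applied; European cybersecurity certification, where required, for Annex IV critical products.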

- EU declaration of conformity drafted and signed. Per product, per version. Template ready for the December 2027 cutover.

- CE marking on product packaging, labeling, or accompanying documentation. Integrated into the manufacturing or software release pipeline so new releases carry it from the cutover date.

- Technical documentation stored in a system of record. Not "in a shared folder". A system with access controls, version history, and change logs, designed to survive 10 years of team turnover.

- Support period policy published. Per product, with justification if shorter than five years. Communicated to customers before or at purchase.

- Developer capability baseline. Evidence that the people writing code for in-scope products have access to and have engaged with secure coding training and application security training that covers the vulnerability classes relevant to the product and the languages in use. Not a CRA-specific requirement by name, but the mechanism by which Annex I Part I 1.2 and 1.10 outcomes actually get delivered in practice.

- Supply chain security controls. Dependency management policy, SCA tooling integrated into CI, approved-components list or equivalent, process for handling CVEs in dependencies with timelines aligned to the CRA's "without delay" language.

- Market surveillance response plan. Process for handling requests from EU market surveillance authorities — documentation production, cooperation obligations, timelines.
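
The reporting windows in the notification and severe incident playbooks above can be turned into concrete due dates. The sketch below is one way to do that, under stated assumptions: the 24-hour and 72-hour clocks start when the manufacturer becomes aware, the 14-day window runs from the point a corrective or mitigating measure is available, and the one-month window runs from submission of the incident notification. Confirm those triggers against the regulation text before relying on them. All function names are hypothetical.

```python
import calendar
from datetime import datetime, timedelta, timezone
from typing import Dict, Optional


def add_one_month(moment: datetime) -> datetime:
    """Same day in the following month, clamped to that month's last day."""
    year = moment.year + (moment.month // 12)
    month = moment.month % 12 + 1
    day = min(moment.day, calendar.monthrange(year, month)[1])
    return moment.replace(year=year, month=month, day=day)


def vulnerability_deadlines(
    aware_at: datetime, fix_available_at: Optional[datetime] = None
) -> Dict[str, datetime]:
    """Due dates for an actively exploited vulnerability."""
    deadlines = {
        "early_warning": aware_at + timedelta(hours=24),
        "vulnerability_notification": aware_at + timedelta(hours=72),
    }
    if fix_available_at is not None:
        # Assumption: final report is due 14 days after a fix or mitigation exists.
        deadlines["final_report"] = fix_available_at + timedelta(days=14)
    return deadlines


def incident_deadlines(
    aware_at: datetime, notification_sent_at: Optional[datetime] = None
) -> Dict[str, datetime]:
    """Due dates for a severe incident."""
    deadlines = {
        "early_warning": aware_at + timedelta(hours=24),
        "incident_notification": aware_at + timedelta(hours=72),
    }
    if notification_sent_at is not None:
        # Assumption: final report is due one month after the notification is sent.
        deadlines["final_report"] = add_one_month(notification_sent_at)
    return deadlines


aware = datetime(2026, 9, 14, 9, 0, tzinfo=timezone.utc)
fixed = datetime(2026, 9, 20, 9, 0, tzinfo=timezone.utc)
for name, due in vulnerability_deadlines(aware, fixed).items():
    print(name, due.isoformat())
```

Timezone-aware timestamps are used deliberately, so a PSIRT spread across regions computes the same due dates from the same awareness time.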

Closing: The Regulation Is Durable, The Deadline Is Not

The Cyber Resilience Act is the EU's answer to two decades of unmitigated cybersecurity failure in connected products. It is a horizontal regulation, it is now legally in force, and it is structurally similar to the CE-marking regime that has governed EU product safety for forty years — which is to say, it is durable. The regulation will not be watered down in the implementation phase, and it will not be delayed. The deadline in September 2026 is the deadline in September 2026. The deadline in December 2027 is the deadline in December 2027. Organizations that treat these as aspirational dates will discover in the second half of 2026 that they are not.

For engineering leaders trying to sequence the work, the practical planning frame is that the roughly eighteen months to full application split into three tranches. The first six months are structural: stand up the PSIRT, draft the CVD policy, inventory products, align legal and communications on the notification workflow, run the tabletop. The middle tranche through mid-2027 is product work: the risk assessments, the Annex I mappings, the security testing, the SBOMs, the documentation. The final tranche is assembly and assessment: the technical documentation packages, the declarations of conformity, the CE marking integration, and for important and critical products, the interaction with notified bodies. Each tranche depends on the prior one, and the dependencies do not compress cleanly.

A mature secure development program — one where secure design, secure coding, security testing, and vulnerability handling are integrated into how the organization ships software — does most of what the CRA requires already. The work to become CRA-ready, for that organization, is largely a documentation and conformity exercise on top of practices that already exist. A less mature program has both the compliance work and the underlying engineering capability to build at the same time, and the latter is where the time compression is hardest to manage. Standing up security testing, developer security competence, and a working PSIRT in parallel, while also generating the documentation that describes them, is a twelve-to-eighteen-month investment for a mid-size organization, not a quarter-long project.

The companies that come out of this well will be the ones whose engineering organizations understood by mid-2026 that the CRA is not a compliance add-on; it is a forcing function to bring secure development into the core of how they build. The organizations that come out of this badly will be the ones that tried to bolt a compliance layer on top of an engineering practice that could not support it, discovered the gap during a conformity assessment, and had to remediate at the speed of a regulator's clock. The choice between those outcomes is being made right now, in the decisions about 2026 engineering priorities and budgets. If you are the person shaping those decisions, the CRA should be one of the things shaping them.