Insecure Design (A04 in the OWASP Top 10 2021) is the category that did not exist on the OWASP Top 10 until 2021 and that, more than any other entry, redefined what application security teams treat as a finding. Nearly every other category on the list describes an implementation defect: a SQL string concatenated where a parameter belonged, a missing authorization check on an endpoint, a weak cipher chosen at the transport layer. Insecure design describes something different: the absence of a security control that was never designed in the first place, the business logic that produces correct-looking code with broken outcomes, the architecture that no amount of secure coding can rescue because the architecture itself is the vulnerability. This guide walks the bright line between A04 and the rest of the list, the categories of design failures developers ship, the threat-modeling and secure-by-design practices that cure them, and the playbook for pushing security left from the code-review stage to the design stage, where the leverage actually lives.

What Insecure Design Means and Why It Earned Its Own Slot

The OWASP Top 10 2021 introduced Insecure Design as a brand-new category at A04, and the 2025 revision keeps it on the list. The introduction was deliberate and, to many practitioners, overdue. Previous Top 10 lists had treated security failures as implementation defects almost without exception: every entry pointed at code that was incorrectly written, configuration that was incorrectly set, or libraries that were incorrectly chosen. The missing category was the failure that occurs before any code is written: the failure to design the system with the security properties it needs in the first place. Insecure design was added to make that category visible and to give appsec teams a way to file findings against architectural decisions, not just implementation defects.

The reason a separate category was needed is that the prevention disciplines for design failures and implementation failures are fundamentally different. Implementation failures are caught by code review, by SAST, by DAST, by unit tests with security assertions, by linters. The remediation is local — change the function, add the check, swap the library. Design failures are caught by threat modeling, by architectural review, by security requirements analysis, by misuse-case workshops. The remediation is structural — change the data flow, redesign the trust boundary, redraw the component diagram. A team that has invested heavily in code-level controls and has never invested in design-level controls produces an application that scores well on every static-analysis tool and still ships catastrophic business-logic vulnerabilities.

The 2021 OWASP guidance frames the category as "missing or ineffective control design," which captures the operational definition: A04 covers the cases where the control needed to defend against a threat was either never identified during design or was designed in a way that does not effectively defend. The category is not about controls that were designed correctly and implemented incorrectly — those are A01 (broken access control), A02 (cryptographic failures), A03 (injection), or one of the other implementation-focused categories. A04 is the meta-category that asks: did anyone consider, at design time, that this threat existed and design a control for it?

The category's persistence in the 2025 revision reflects an industry consensus that has stabilized: design-stage security is the highest-leverage investment a team can make, and the absence of design-stage practices remains the most common gap in mature programs. Teams that have closed their injection surface and tightened their access control still ship insecure designs, because the discipline that prevents A04 is qualitatively different from the discipline that prevents the implementation-focused categories.

Insecure Design vs Insecure Implementation — The Bright Line

The single most useful frame for thinking about A04 is the distinction between design-time failures and code-time failures. The bright line runs through every appsec finding: was the bug introduced when the system was designed, or when the code was written? The answer determines which category the finding belongs to and which remediation discipline applies.

Consider a money-transfer endpoint that allows a user to transfer funds between accounts. The endpoint reads the source account, checks that the user owns it, deducts the amount, and credits the destination. The code is well-written: parameterized queries, authorization check, exception handling. A security researcher discovers that two concurrent requests with the same source account can both pass the balance check, both deduct, and both credit — overdrawing the source account beyond its actual balance. The bug is real, the impact is real, and the code itself is not "wrong" by any narrow measure. It is the design that did not specify how concurrent transfers should be serialized, did not specify what consistency model the transfer ledger requires, did not specify whether the balance check and the deduction must occur atomically. That is A04. The fix is not in the code that already exists; the fix is in the design that should have specified locking semantics or idempotency keys or transactional isolation from the start.

Compare this to a sibling endpoint that builds a SQL query by concatenating the destination account number into a string. The bug there is implementation: the design specified that destination accounts are looked up by ID, the code chose a SQL pattern that exposes the lookup to injection, and the fix is parameterization. That is A03, sibling category, different discipline. Both are real bugs, both produce real incidents, but the root cause and the fix live in different phases of the SDLC.

The bright line matters because it determines who owns the fix and what the remediation timeline looks like. Code-time bugs can be fixed in a sprint by changing the implementation. Design-time bugs frequently cannot be fixed without changing the contract between components, which means coordinating with downstream consumers, planning a migration, and shipping the fix over multiple releases. A team that treats every A04 finding as a code change and then discovers the change requires data-model migration is the team that has not yet internalized the difference. A team that treats every A04 finding as an architectural review is the team that does design-stage security correctly.

The principle that emerges from the distinction is the one OWASP itself names: secure by design. Security properties are not added to a system after the fact; they are properties the design has from the beginning, expressed through the architecture, the data flows, the trust boundaries, and the contracts between components. The implementation enforces what the design specifies. When the design specifies nothing, the implementation cannot enforce it correctly even when the developer is conscientious.

The Categories of Design Failures

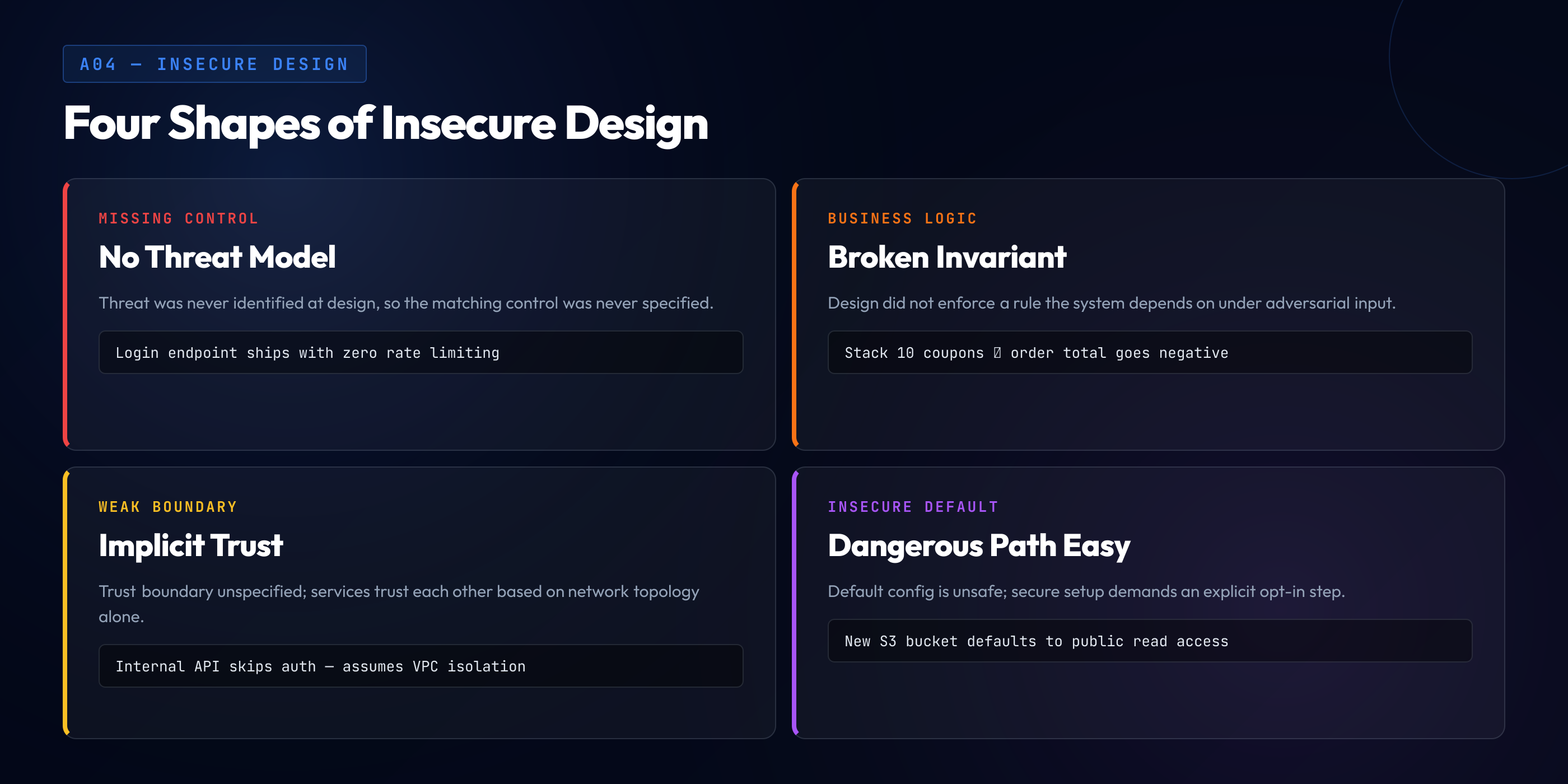

A04 covers a large category, and the failures inside it cluster into a few recurring shapes. Recognizing the shape of a design failure is the first step to remediating it; each shape has its own design pattern that addresses it.

Missing security controls by design. The system needs a control to defend against a threat, the threat was not identified during design, and the control was therefore not specified. The application has no rate limiting on its login endpoint because rate limiting was not in the requirements. The application has no audit log on its admin actions because audit logging was not in the design. The application has no MFA enrollment flow because MFA was deferred to "phase 2." Each is a missing-control failure where the control's absence is the vulnerability — there is no broken code to fix, only an absent design.

Business logic flaws. The system's logic — the rules that govern how its business operates — has gaps that an attacker can exploit. A coupon system that does not constrain how many coupons can stack on a single order. A refund flow that does not check whether a refund has already been processed. A loyalty program that does not deduct points when they are spent. A subscription system that does not handle the case where a subscription is canceled mid-billing-period. Each is a design that did not anticipate an adversarial input or did not specify the invariant that should hold across all states. Business logic flaws are the largest single sub-category of A04 and the hardest to detect with automated tools because they require understanding what the application is supposed to do, not just what it does.

Weak threat model. The team performed threat modeling, but incompletely: the model identified some threats and missed others, or identified controls for the threats it found without stress-testing those controls against creative abuse. A weak threat-modeling exercise lists the STRIDE categories but never actually walks the data flows, produces a checklist artifact rather than architectural decisions, and is performed once at project kickoff and never revisited as the system evolves. The threat model exists; it is not adequate.

Missing rate limiting and anti-automation. A specific shape of missing-control failure that deserves its own category because it is so common. Login endpoints without rate limiting, password-reset endpoints without throttling, signup endpoints without bot detection, search endpoints without query budgets, API endpoints without per-user quotas. The absence of anti-automation controls converts every endpoint into a brute-force surface and turns ordinary functionality into a denial-of-service vector. The 2026 pentest pattern is consistent: for every ten endpoints audited, six lack effective rate limiting, four lack effective anti-automation, and the team's response to the finding is "we'll add it" — confirming the design did not specify it.
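A minimal sketch of one common anti-automation control, a per-key token bucket, follows. It assumes a single process holding its counters in memory; a real deployment would usually keep them in a shared store so every instance enforces the same budget. The class name and parameters are illustrative, not any specific library's API.

```python
import time


class TokenBucket:
    """Per-key token bucket: allows bursts of up to `capacity` requests,
    refilled continuously at `rate` tokens per second."""

    def __init__(self, capacity, rate, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock  # injectable so tests can control time
        self._state = {}    # key -> (tokens remaining, time of last update)

    def allow(self, key):
        now = self.clock()
        tokens, last = self._state.get(key, (self.capacity, now))
        # Refill for the time elapsed since this key was last seen.
        tokens = min(self.capacity, tokens + (now - last) * self.rate)
        if tokens >= 1:
            self._state[key] = (tokens - 1, now)
            return True
        self._state[key] = (tokens, now)
        return False
```

A login endpoint might construct `TokenBucket(capacity=5, rate=5 / 60)` (roughly five attempts per minute) and key it on both the target username and the client address, so that neither a single-account brute force nor a spray across accounts runs unimpeded.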

Insecure defaults. The system's default configuration is insecure, and the user must take an explicit action to make it secure. A storage bucket that defaults to public access. A user account that defaults to admin privileges until explicitly downgraded. A logging system that defaults to logging request bodies including credentials. An API key that defaults to all-scopes-enabled until explicitly scoped. The pattern is to ship the dangerous default as the easy path and the safe configuration as the path requiring effort — every user who does not perform the explicit secure-configuration step ships an insecure system. Secure by design inverts the default: the easy path is the safe path, and the dangerous configuration requires explicit opt-in.
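The inversion can be expressed directly in a configuration type. The sketch below assumes a hypothetical storage-bucket config: constructing it with no arguments yields the safe settings, and the one dangerous setting is reachable only through a separate, explicit step that demands a justification.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class StorageBucketConfig:
    # Hypothetical config: every field defaults to the safe setting, so
    # StorageBucketConfig() with no arguments is already secure.
    public_access: bool = False
    encryption_at_rest: bool = True
    access_logging: bool = True


def make_public(config, *, justification):
    """The dangerous setting is an explicit, separate step that must be justified."""
    if not justification.strip():
        raise ValueError("public access requires a recorded justification")
    # Frozen dataclass: return a modified copy rather than mutating in place.
    return replace(config, public_access=True)
```

Making the config immutable means the opt-in cannot happen as a silent attribute assignment somewhere else in the codebase; it has to go through `make_public`, which is easy to search for and review.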

Business Logic Flaws — Real Examples

Business logic flaws deserve a dedicated section because they are the most difficult-to-detect, highest-impact subcategory of A04 and because their patterns recur across industries with surprising consistency. The following are real classes of incidents that map cleanly to insecure design — the underlying flaw is in the design of the business process, not in the implementation of any single function.

Money transfer race condition. The classic example of an insecure-design business logic flaw, and one of the highest-impact in financial services. Two concurrent requests perform a balance check on the same account, both pass, both deduct, and the account is overdrawn. The vulnerable Python pseudocode looks structurally correct but is not safe under concurrency:

```python
import time

def transfer(source_id, dest_id, amount, user):
    # `db`, `Account`, `Forbidden`, and `InsufficientFunds` are hypothetical
    # stand-ins for an ORM session, a model, and application exceptions.
    source = db.query(Account, source_id)
    if source.owner != user:
        raise Forbidden()
    if source.balance < amount:
        raise InsufficientFunds()
    # Race window: another transfer can pass the
    # balance check before this one updates the row.
    time.sleep(0.001)  # representing any latency
    source.balance -= amount
    dest = db.query(Account, dest_id)
    dest.balance += amount
    db.commit()
```

Two requests arriving in the race window both observe the original balance and both pass the check. Nothing serializes the check and the deduction, so both transfers proceed: if the deduction is issued as an in-database decrement, the balance goes negative; if it is a read-modify-write in application memory, as here, the second write silently overwrites the first (a lost update). Either way, more money is credited out than the account held. The fix is at the design layer: the transfer must hold an exclusive row lock on the source account from the moment of the balance check until the moment of the deduction, or the transfer must be expressed as a single atomic database operation that cannot be interleaved.

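```python
def transfer(source_id, dest_id, amount, user):
    # Reject zero and negative amounts: a negative transfer would
    # silently move money in the reverse direction.
    if amount <= 0:
        raise ValueError("transfer amount must be positive")
    # The transaction context commits on clean exit and rolls back on error.
    with db.transaction(isolation='SERIALIZABLE'):
        # SELECT ... FOR UPDATE: row locks held until commit. Locking in a
        # fixed (id) order keeps opposite-direction transfers from deadlocking.
        locked = {acct_id: db.query(Account, acct_id, for_update=True)
                  for acct_id in sorted({source_id, dest_id})}
        source, dest = locked[source_id], locked[dest_id]
        if source.owner != user:
            raise Forbidden()
        if source.balance < amount:
            raise InsufficientFunds()
        source.balance -= amount
        dest.balance += amount
```

The fix is structural: the design specifies that transfers serialize through a row lock, and the implementation enforces what the design specifies. Without the design specification, every implementation is left to rediscover the locking requirement on its own, however carefully the developer writes the function.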
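The race can be reproduced without a database. The sketch below is a self-contained illustration rather than the code above: it assumes an in-memory `Account` whose `threading.Lock` plays the role of the row lock, and uses an artificial sleep to widen the window between the check and the deduction.

```python
import threading
import time


class InsufficientFunds(Exception):
    pass


class Account:
    def __init__(self, balance):
        self.balance = balance
        self.lock = threading.Lock()  # stands in for a database row lock


def withdraw_racy(acct, amount):
    if acct.balance < amount:        # check ...
        raise InsufficientFunds()
    time.sleep(0.05)                 # ... latency widens the race window ...
    acct.balance -= amount           # ... then act on a stale check


def withdraw_locked(acct, amount):
    with acct.lock:                  # check and act happen under one lock
        if acct.balance < amount:
            raise InsufficientFunds()
        time.sleep(0.05)
        acct.balance -= amount


def run_concurrently(withdraw, workers=5, start=100, amount=100):
    """Fire `workers` simultaneous withdrawals; return (final balance, successes)."""
    acct = Account(start)
    successes = []

    def worker():
        try:
            withdraw(acct, amount)
            successes.append(amount)
        except InsufficientFunds:
            pass

    threads = [threading.Thread(target=worker) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return acct.balance, len(successes)


print(run_concurrently(withdraw_racy))    # typically several successes, negative balance
print(run_concurrently(withdraw_locked))  # (0, 1): exactly one withdrawal succeeds
```

The racy result depends on thread scheduling, which is exactly why such bugs survive ordinary functional testing; the locked result is deterministic because only one thread can be between the check and the deduction at a time.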

Coupon stacking. An e-commerce system allows users to apply discount coupons to orders. The coupon validation function checks that each individual coupon is valid, has not expired, and has not been used. It does not check, because the design does not specify, how many coupons may be applied to a single order. An attacker discovers that ten coupons can be stacked, each providing a 20 percent discount, and the total discount exceeds 100 percent — producing a negative-cost order the system happily processes.

The vulnerable design has each coupon validated independently:

```python
def apply_coupons(order, coupon_codes):
    for code in coupon_codes:
        coupon = lookup(code)
        # Each coupon is checked in isolation; nothing bounds the total.
        if coupon.valid and not coupon.used:
            order.total -= coupon.amount
            coupon.used = True
    return order
```

The fix is a design constraint: the order can have at most one coupon, or the order's discount cannot exceed a maximum percentage of the subtotal, or specific coupon types are mutually exclusive. The constraint is expressed in the design and enforced in the code:

```python
def apply_coupons(order, coupon_codes):
    # `lookup`, `BusinessRule`, and `InvalidCoupon` are hypothetical helpers.
    if not coupon_codes:
        return order
    if len(coupon_codes) > 1:
        raise BusinessRule('Only one coupon per order')
    coupon = lookup(coupon_codes[0])
    if not coupon.valid or coupon.used:
        raise InvalidCoupon()
    # Cap the discount at 50% of the subtotal so no coupon can zero out an order.
    discount = min(coupon.amount, order.subtotal * 0.5)
    order.total -= discount
    # In production the used-check and this write must be one atomic update,
    # or two concurrent orders can redeem the same single-use coupon.
    coupon.used = True
    return order
```

Insecure account-recovery flow. Account-takeover attacks routinely target the account-recovery flow because the recovery flow is, by design, an alternate path to authentication. A vulnerable recovery flow accepts an email address, generates a recovery token using a weak source of entropy, and emails the token to the user. An attacker who can predict or brute-force the token takes over the account. The vulnerability is not in the email-sending code; it is in the design of the recovery protocol.

```python
import time

# Vulnerable: predictable token, no rate limit, single factor
def request_recovery(email):
    user = find_user_by_email(email)
    if user:
        # Seconds-resolution timestamp plus user id: trivially enumerable.
        token = str(int(time.time())) + str(user.id)
        save_token(user.id, token)
        send_email(email, f"Recovery: /reset?t={token}")
```

Three design flaws compound: the token is derived from predictable values an attacker can enumerate, the endpoint has no rate limit so brute-forcing is unimpeded, and email-only recovery means that compromising the user's mailbox is sufficient to take over every account that recovers through it. The fix is an architectural redesign of the recovery protocol:

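```python
import hashlib
import secrets
from datetime import datetime, timedelta, timezone

# Hypothetical helpers: enforce_rate_limit, find_user_by_email, save_token, send_email.
def request_recovery(email):
    enforce_rate_limit(email, max_per_hour=3)
    user = find_user_by_email(email)
    if not user:
        return  # do not leak whether email is registered
    token = secrets.token_urlsafe(32)  # 32 random bytes = 256 bits of entropy
    expires_at = datetime.now(timezone.utc) + timedelta(minutes=15)
    # Store only a SHA-256 digest. Python's built-in hash() is neither
    # cryptographic nor stable across processes, so it cannot be used here.
    token_hash = hashlib.sha256(token.encode()).hexdigest()
    save_token(user.id, token_hash, expires_at)
    send_email(email, f"Recovery: /reset?t={token}")
    # Reset endpoint additionally requires a second factor
    # (existing TOTP or hardware key) before changing the password.
```

The redesign embodies several secure design patterns: high-entropy tokens, rate limiting, token expiry, hashing tokens at rest, and a second factor as a requirement before the recovery completes. Each is a design decision; together they produce a recovery flow that does not collapse to email security.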
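The reset side of the protocol is where hashing, expiry, and single use are actually enforced. A minimal sketch, assuming an in-memory dictionary in place of the token table; the helper names are hypothetical, and the second-factor check the redesign calls for is deliberately left out to keep the example short.

```python
import hashlib
import secrets
from datetime import datetime, timedelta, timezone

# Hypothetical in-memory store keyed by the token's SHA-256 digest, so the
# raw token is never stored and lookups never touch it directly.
_tokens = {}


def _digest(token):
    return hashlib.sha256(token.encode()).hexdigest()


def issue_token(user_id, ttl=timedelta(minutes=15)):
    token = secrets.token_urlsafe(32)
    _tokens[_digest(token)] = {
        "user_id": user_id,
        "expires_at": datetime.now(timezone.utc) + ttl,
        "used": False,
    }
    return token  # emailed to the user; only the digest is retained


def redeem_token(token, now=None):
    """Return the user id for a valid, unexpired, unused token; otherwise None."""
    now = now or datetime.now(timezone.utc)
    record = _tokens.get(_digest(token))
    if record is None or record["used"] or now >= record["expires_at"]:
        return None
    record["used"] = True  # single use: replaying the same link fails
    return record["user_id"]
```

Because the store holds only digests, a leaked copy of the token table does not hand an attacker usable reset links, and because each record is marked used on first redemption, a link intercepted after the fact is worthless.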

Refund abuse and idempotency failures. A payment system processes refunds. A refund request is submitted, the refund is authorized, the funds are credited back to the customer. If the same refund request is submitted again — through a retry, a deduplication failure, or an attacker resubmission — the system processes it again. The customer receives a second refund for an order that was only paid for once. The design did not specify idempotency keys, did not specify a unique constraint on refund identifiers, did not specify that refunds must check whether the order has already been refunded.

The fix is an idempotency key threaded through the refund flow: every refund request carries a client-generated unique identifier, the server stores the result of the first request keyed by that identifier, and subsequent requests with the same identifier return the stored result without reprocessing. The pattern is well-known in payment systems and ubiquitously absent in younger systems whose designers have not encountered the abuse pattern. The remediation is a design decision applied at the API layer, not a code change to the refund function.
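A minimal sketch of the idempotency-key pattern, assuming a single process with in-memory state: the dictionary stands in for a table with a unique constraint on the key, and the class and field names are illustrative.

```python
import threading
import uuid


class RefundService:
    """Minimal idempotent refund handler. The dict stands in for a table with
    a UNIQUE constraint on the idempotency key."""

    def __init__(self):
        self._results = {}            # idempotency key -> first response
        self._refunded_orders = set()
        self._lock = threading.Lock()
        self.ledger = []              # credits actually issued

    def refund(self, idempotency_key, order_id, amount):
        with self._lock:
            # Replay (client retry, duplicate delivery): return the stored
            # result without touching the ledger again.
            if idempotency_key in self._results:
                return self._results[idempotency_key]
            # Business invariant independent of the key: one refund per order.
            if order_id in self._refunded_orders:
                result = {"status": "rejected", "reason": "order already refunded"}
            else:
                self._refunded_orders.add(order_id)
                self.ledger.append((order_id, amount))
                result = {"status": "refunded", "refund_id": str(uuid.uuid4())}
            self._results[idempotency_key] = result
            return result
```

The order-level check matters as much as the key: an attacker who generates a fresh key for each resubmission bypasses key-based deduplication, so the design needs both. A production version would typically also reject a reused key whose parameters differ from the original request.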

Threat Modeling as the Cure

The cure for insecure design is threat modeling — the discipline of systematically enumerating, during the design phase, what threats apply to the system being designed and what controls address each threat. The discipline is decades old, has multiple methodologies, and is the single highest-leverage investment a team can make against A04. Our threat modeling deep dive walks the methodologies in detail; this section places threat modeling in its role as the A04 remediation.

STRIDE — the framework most commonly applied. STRIDE is Microsoft's threat-categorization mnemonic: Spoofing, Tampering, Repudiation, Information disclosure, Denial of service, Elevation of privilege. The methodology applies STRIDE to each element of a data flow diagram — each external entity, each process, each data store, each data flow — and asks which of the six threats apply, which controls defend against each applicable threat, and whether those controls are designed in. STRIDE produces a structured enumeration that catches the threats developers tend to miss when they think about security ad hoc.

A small STRIDE walkthrough on a payment endpoint illustrates the discipline. The data flow is a customer's browser, talking to a payment-API process, talking to a payments-database data store, with a separate flow to an external payment-processor entity. Apply STRIDE to each:

```text
Data flow: Browser → Payment API
  S: Can the request be spoofed?
     → Authentication (session, OAuth) required.
  T: Can the request be tampered with in transit?
     → TLS required; payload signing for high-value ops.
  R: Can the user repudiate the action?
     → Audit log with non-repudiable identity binding.
  I: Can the request leak information?
     → No PCI data in URLs; headers scrubbed before logging.
  D: Can the endpoint be DoS'd?
     → Rate limiting, per-user quotas, circuit breaker.
  E: Can a normal user escalate privileges?
     → Authorization check on every action.

Data store: Payments DB
  T: Can rows be tampered with directly?
     → Least-privileged DB account; audit triggers on writes.
  I: Can rows leak?
     → Encryption at rest; column-level encryption for PAN.
  ...
```

The output is a list of identified threats, each paired with either a confirmed existing control or a required but missing control. Each missing control is an A04 finding and a design ticket. The methodology takes a few hours per significant feature, and it consistently catches missing controls that no other discipline catches.
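Recording the walkthrough as data rather than prose makes the "missing control becomes a design ticket" step mechanical. A minimal sketch, assuming a hand-maintained model; the `Element` type and the ticket format are illustrative, not part of any threat-modeling tool.

```python
from dataclasses import dataclass, field

STRIDE = {
    "S": "Spoofing",
    "T": "Tampering",
    "R": "Repudiation",
    "I": "Information disclosure",
    "D": "Denial of service",
    "E": "Elevation of privilege",
}


@dataclass
class Element:
    name: str
    # STRIDE letter -> designed control, or None when the threat applies
    # but no control has been designed yet.
    threats: dict = field(default_factory=dict)


def design_tickets(elements):
    """One ticket per applicable threat that lacks a designed control."""
    return [
        f"{el.name}: no control designed for {STRIDE[letter]}"
        for el in elements
        for letter, control in el.threats.items()
        if control is None
    ]


model = [
    Element("Browser -> Payment API", {
        "S": "session authentication",
        "T": "TLS",
        "D": None,  # rate limiting not yet in the design
    }),
    Element("Payments DB", {"T": "least-privileged DB account", "I": None}),
]
print(design_tickets(model))
```

Keeping the model in the repository next to the design document lets a reviewer diff it when a trust boundary changes, which supports the per-story cadence better than a one-off slide.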

PASTA — Process for Attack Simulation and Threat Analysis. A heavier methodology than STRIDE that emphasizes attacker objectives and attack tree analysis. PASTA is appropriate for high-risk systems where the threat-actor sophistication is high and the consequences of compromise are severe. The methodology costs more time per feature than STRIDE; the depth of analysis is correspondingly higher.

Attack trees and abuser stories. A complementary discipline that flips the direction of analysis. Where STRIDE asks "what threats apply to this component," attack trees ask "how could an attacker achieve a specific goal." Abuser stories — the security analog to user stories — express attacks as narrative goals: "as an attacker, I want to take over an arbitrary user account so that I can access their data." The story is then traced through the system to identify which components and controls would need to fail for the attack to succeed. Abuser stories are particularly effective for surfacing business-logic flaws because they force the team to think about adversarial usage of the same flows ordinary user stories cover.
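An attack tree can be evaluated mechanically once its gates are written down. The sketch below encodes the abuser story "take over an arbitrary user account" as a small AND/OR tree; the node labels are illustrative, drawn from the recovery-flow example earlier, and the tree is far from exhaustive.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    label: str
    gate: str = "LEAF"                     # "AND", "OR", or "LEAF"
    children: list = field(default_factory=list)


def achievable(node, succeeded):
    """True if the attacker reaches `node`, given the set of leaf steps that succeed."""
    if node.gate == "LEAF":
        return node.label in succeeded
    results = [achievable(child, succeeded) for child in node.children]
    return all(results) if node.gate == "AND" else any(results)


takeover = Node("Take over an arbitrary user account", "OR", [
    Node("Brute-force the reset token", "AND", [
        Node("Token is guessable"),
        Node("Reset endpoint is unthrottled"),
    ]),
    Node("Abuse email-only recovery", "AND", [
        Node("Compromise the victim's mailbox"),
        Node("Recovery needs no second factor"),
    ]),
])
```

Each AND node names the set of controls that must all fail together; adding a second factor to recovery removes the "Recovery needs no second factor" leaf from what an attacker can satisfy, so that entire branch becomes unreachable.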

The cadence that produces results. Threat modeling at project kickoff produces a one-time artifact that goes stale. Threat modeling for every story above a complexity threshold — every new endpoint, every change to a trust boundary, every new external integration — produces a running document and a culture in which design-stage security is a habit. The cadence is what separates teams that ship insecure designs from teams that ship secure ones, and the cadence is qualitatively a different discipline than the security-review-at-the-end-of-the-release pattern most teams default to.

Secure Design Patterns to Reach For

Threat modeling identifies which controls a design needs. The library of secure design patterns is the menu the team chooses controls from. The patterns below are the canonical entries — each addresses a class of threats, each has a mature literature, and each should appear by name in design documents for systems that handle untrusted input or sensitive data.

Deny-by-default authorization. The default outcome of any authorization decision is deny. Permissions are explicitly granted; the absence of a grant is not implicit permission. The pattern produces architectures where new endpoints are inaccessible until explicit access rules are added — the inverse of the failure mode where a new endpoint is accidentally accessible because someone forgot to add the access check. Deny-by-default at the design layer is what eliminates whole classes of broken access control findings.
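In code, deny-by-default often reduces to checking membership in an explicit grant set. A minimal sketch, assuming a hypothetical static table of role and action pairs; real systems usually load grants from a policy store, but the shape of the decision is the same.

```python
# Hypothetical grant table: only these (role, action) pairs are permitted.
GRANTS = frozenset({
    ("viewer", "report:read"),
    ("admin", "report:read"),
    ("admin", "report:delete"),
})


def is_allowed(role, action):
    # Deny by default: an action is permitted only if it was explicitly
    # granted. Unknown roles, unknown actions, and newly added endpoints
    # all fall through to False without any extra code.
    return (role, action) in GRANTS
```

The important property is what happens when someone adds an `invoice:export` action and forgets to update the table: every role is refused, and the omission surfaces as a failing feature rather than an exposed one.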

Defense in depth. No single control is the application's only defense. Each threat is addressed by multiple independent layers — input validation, output encoding, authorization, rate limiting, monitoring, anomaly detection — so that the failure of any single layer does not collapse the whole defense. Defense in depth is the principle that produces resilience under partial failure; an architecture that relies on one perfect control and no fallback is brittle, and the brittle architecture eventually loses to a single missed test case.

Principle of least privilege. Every component of the system runs with the minimum set of permissions it needs to perform its function. Database accounts have only the rights to query the tables they query. Service accounts have only the API scopes they call. User roles have only the actions they perform. The principle bounds the blast radius of any successful exploit: a compromised component can do only what its limited privileges allow, not what its theoretical maximum privileges would allow.

Fail securely. When a component fails — when a check throws, when a downstream service times out, when a configuration is missing — the failure mode preserves the security property. An authorization check that fails closed denies access. A signature verification that fails closed rejects the request. A feature flag that fails closed leaves the dangerous feature disabled. The opposite — fail open — produces architectures where partial failures grant unintended access.
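A fail-closed authorization wrapper is short enough to show in full. The sketch assumes the policy decision comes from some callable, for example a client for a remote policy service, that may raise on timeout or return an ambiguous value; `policy_check` and `PolicyServiceError` are hypothetical names.

```python
class PolicyServiceError(Exception):
    """Raised by a (hypothetical) remote policy service on timeout or error."""


def authorize(policy_check, user, resource):
    """Fail closed: any error or ambiguous answer from the check means deny."""
    try:
        decision = policy_check(user, resource)
    except Exception:
        # Timeout, outage, malformed config: preserve the security property.
        return False
    # Only an explicit True grants access; None, "", "yes", etc. all deny.
    return decision is True
```

The broad `except Exception` is deliberate here: in this one place, an unexpected failure must map to the safe outcome rather than propagate into a caller that might treat it as "no objection".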

Separation of duties. No single principal — user, role, service, component — has the authority to complete a high-impact action alone. Money transfers above a threshold require approval from a second user. Production deploys require approval from a release manager. Database schema changes require approval from a DBA. The separation produces architectures where a single compromise does not enable the full impact, and where audit trails can reconstruct who did what and who approved.
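The money-transfer case above can be sketched as a guard that requires a second, distinct principal above a threshold. The threshold value and function names are hypothetical; a real system would also authenticate the approver rather than trust a string.

```python
APPROVAL_THRESHOLD = 10_000  # hypothetical policy value


class ApprovalRequired(Exception):
    pass


def execute_transfer(amount, requested_by, approved_by=None):
    """Transfers above the threshold need a second, different principal."""
    if amount > APPROVAL_THRESHOLD:
        if approved_by is None:
            raise ApprovalRequired("transfer above threshold needs an approver")
        if approved_by == requested_by:
            raise ApprovalRequired("approver must differ from requester")
    # The returned record doubles as an audit entry: who asked, who approved.
    return {"amount": amount, "requested_by": requested_by, "approved_by": approved_by}
```

The self-approval check is the part most often missed: without it, a single compromised account can satisfy a two-step workflow on its own.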

Secure defaults. The default configuration is the secure configuration. The dangerous configuration requires explicit opt-in. The pattern is the inverse of the insecure-defaults failure described earlier: every configuration knob the system exposes has a thoughtful default, and the defaults bias toward security rather than convenience.

Idempotency keys for state-changing operations. Every state-changing API call accepts a client-generated unique identifier; repeated calls with the same identifier produce the same result without reprocessing. The pattern eliminates duplicate-processing bugs, makes retries safe, and prevents an entire class of business-logic abuse. Idempotency keys are a design decision applied at the API contract layer, not an implementation detail of any single function.

Anti-Patterns Developers Reach For

Where the secure design patterns are the menu of correct choices, the anti-patterns are the menu of wrong ones. Each anti-pattern is observed routinely in pentests and design reviews, each has a plausible-sounding rationale that explains why a team chose it, and each produces an A04 finding with high confidence.

Security through obscurity. The system relies on the secrecy of its design — the URL of an admin panel, the format of an internal API, the location of a backup endpoint — for security. The rationale is "an attacker would have to know the URL." The flaw is that URLs leak through logs, browser history, error messages, GitHub commits, and ordinary web crawlers. Obscurity is acceptable as a thin defense-in-depth layer; it is not acceptable as the primary control.

Single layer of control. The system has exactly one line of defense for a given threat, and the team's argument for not adding a second layer is "we already have the first." The argument fails the day the first layer has a bug, and the day arrives reliably. Defense in depth exists because every individual control eventually fails; the architecture must survive the failure.

"We'll add auth later." The endpoint is built without authentication or authorization, with the plan to add it before launch. The plan slips, the launch happens, and the endpoint ships with no controls. The fix is structurally simple — add the controls — but the dependency on remembering to add them is exactly the kind of failure mode secure by design prevents. The design pattern is to make the framework refuse to expose endpoints that lack explicit auth declarations, so that "forgot to add auth" produces a startup failure rather than a production vulnerability.

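One way to make "forgot to add auth" fail loudly is sketched below. It assumes a hypothetical in-house route registry, not any particular web framework's API: every route must declare an auth policy (or the explicit `PUBLIC` marker), and a startup check refuses to boot if any declaration is missing.

```python
PUBLIC = "public"  # explicit marker for endpoints that are open on purpose


class RouteRegistry:
    """Hypothetical router that refuses to start with undeclared auth."""

    def __init__(self):
        self.routes = {}

    def route(self, path, *, auth=None):
        def decorator(handler):
            self.routes[path] = (handler, auth)
            return handler
        return decorator

    def validate(self):
        # Called once at startup: forgetting `auth=` is a boot failure,
        # not a silently exposed endpoint.
        missing = sorted(p for p, (_, auth) in self.routes.items() if auth is None)
        if missing:
            raise RuntimeError(f"routes without an auth declaration: {missing}")
```

Requiring `PUBLIC` to be spelled out turns "this endpoint is open" into a reviewable decision in the diff instead of the default outcome of an omission.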
Trusting client-side validation. The application enforces business rules — required fields, numeric ranges, character sets — in the client and not in the server, on the rationale that the client validates the data before it reaches the server. The flaw is that the client is under attacker control; the server cannot trust that client-side validation has run. Every server endpoint must enforce its own validation regardless of what the client does. Client-side validation is a UX feature for honest users; it is not a security control.
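A minimal sketch of server-side re-validation, assuming a hypothetical order payload and an in-memory price catalog. The server checks types and ranges itself and ignores any price or total the client sends, since those are exactly the fields an attacker would edit.

```python
from decimal import Decimal

# Hypothetical server-side catalog: prices come from here, never the client.
CATALOG = {"sku-1": Decimal("19.99"), "sku-2": Decimal("5.00")}


def validate_order(payload):
    """Re-check every rule on the server, whatever the client claims to have checked."""
    sku = payload.get("sku")
    quantity = payload.get("quantity")
    if not isinstance(sku, str) or sku not in CATALOG:
        raise ValueError("unknown sku")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(quantity, int) or isinstance(quantity, bool) or not 1 <= quantity <= 100:
        raise ValueError("quantity must be an integer from 1 to 100")
    # Any client-supplied price or total is ignored; the server computes it.
    return {"sku": sku, "quantity": quantity, "total": CATALOG[sku] * quantity}
```

The same rules may still exist in the client for a better user experience; the server copy is the one that counts.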

Optimistic concurrency without idempotency keys. The application performs state changes optimistically, with the assumption that two concurrent requests are unlikely. When two concurrent requests do occur — through retries, duplicates, or attacker concurrency — the application produces inconsistent state. The fix is design-level: every state-changing endpoint accepts an idempotency key, the server enforces uniqueness on the key, duplicate requests return the cached result. The pattern is well-understood in payment systems and underapplied elsewhere.

Implicit trust between microservices. Services within the same data center trust each other without authentication, on the assumption that the network boundary is the trust boundary. The assumption fails when a single service is compromised — the attacker now has an entry point into a network of trusting services with no further authentication required. The pattern that addresses this is zero trust: every service-to-service call is authenticated and authorized, regardless of network topology. Adopting zero trust as a design constraint eliminates the lateral-movement surface that implicit-trust architectures create.
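The zero-trust principle is that each call carries its own proof of identity and is checked against an explicit allow-list. The sketch below uses HMAC request signing with per-service keys purely because it fits in a few lines; it is a simplified stand-in, and in practice mTLS or signed tokens from an identity provider would usually fill this role. All names and keys are hypothetical.

```python
import hashlib
import hmac
import time

# Hypothetical per-service secrets (would come from a secret manager).
SERVICE_KEYS = {"orders": b"orders-demo-key", "reporting": b"reporting-demo-key"}
# Authorization is separate from authentication: which caller may do what.
ALLOWED_CALLS = {("orders", "payments.charge")}
MAX_SKEW_SECONDS = 30


def _mac(key, caller, operation, ts, body):
    message = f"{caller}|{operation}|{ts}|".encode() + body
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def sign(caller, operation, body, ts):
    return _mac(SERVICE_KEYS[caller], caller, operation, ts, body)


def verify(caller, operation, body, ts, signature, now=None):
    """Authenticate and authorize every call, even from 'inside' the network."""
    now = time.time() if now is None else now
    key = SERVICE_KEYS.get(caller)
    if key is None or abs(now - ts) > MAX_SKEW_SECONDS:
        return False  # unknown caller, or stale request (narrows the replay window)
    if not hmac.compare_digest(_mac(key, caller, operation, ts, body), signature):
        return False  # forged or tampered request
    return (caller, operation) in ALLOWED_CALLS
```

Note that a correctly signed call from `reporting` to `payments.charge` is still refused: a compromised reporting service can prove who it is, but that identity does not carry the right to move money.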

Reference Architectures and Secure Design Libraries

A team that wants to ship secure designs does not start from a blank page. The industry has invested heavily in reference architectures, security-requirements catalogs, and design libraries that codify the secure design patterns into actionable, opinionated guidance. The following are the references mature appsec programs draw on.

OWASP ASVS — Application Security Verification Standard. ASVS is a comprehensive catalog of security requirements a web application should meet, organized by control category and assigned a verification level (1 = baseline, 2 = standard, 3 = high-assurance). ASVS is the closest the industry has to a definitive list of "what does a secure application need." Teams use ASVS as a security-requirements baseline: the design must demonstrate which ASVS requirements apply, which are met, and which are explicitly out of scope. ASVS does not prescribe an architecture; it provides the requirements against which any architecture is evaluated. The combination of an architecture and its ASVS coverage map is a common deliverable in security-conscious design reviews.

NIST SP 800-160 — Systems Security Engineering. A systems-engineering treatment of security as a property of systems, not as a layer added to systems. SP 800-160 is the heavyweight reference for high-assurance systems — government, defense, critical infrastructure — and provides the conceptual framework for treating security as a system property throughout the lifecycle. Teams shipping commodity SaaS rarely apply SP 800-160 directly; teams shipping systems with strong security requirements draw on its concepts and on the disciplines it codifies.

Microsoft Security Development Lifecycle (SDL). Microsoft's published SDL describes the security activities Microsoft applies during product development — threat modeling, security requirements, security design review, attack-surface analysis, security testing, security response. The SDL is one of the most extensively documented industrial security programs and is the model many other organizations have adapted. The SDL's threat-modeling guidance is, in particular, the primary published reference for STRIDE as practiced at scale. See our secure SDLC pillar for how SDL practices integrate into the broader development lifecycle.

SLSA (Supply-chain Levels for Software Artifacts). A framework, originally developed at Google and now maintained under the OpenSSF, for the security properties of software supply chains, specifying levels of assurance for build provenance, source integrity, and artifact tamper-resistance. SLSA is more narrowly scoped than ASVS or SDL; it addresses one specific category of insecure design, supply chain trust, and provides a graduated path for hardening it. Adopting SLSA Build Level 3 as a design requirement substantially reduces a class of supply-chain attacks, such as tampering with the build process, that are otherwise structurally difficult to prevent.

Cloud-provider security architectures. AWS Well-Architected (Security pillar), Azure security baselines, Google Cloud security blueprints. Each cloud provider publishes opinionated reference architectures for common workloads — multi-tenant SaaS, regulated workloads, public-facing APIs — with the security controls and design patterns each architecture includes. Starting from a published reference architecture and customizing from there is materially less risky than designing from a blank page; the reference architecture has been reviewed by security teams who specialize in the cloud provider's primitives.

How A04 Findings Look in Pentests

The pentest signature of insecure design is distinctive once you know what to look for. Implementation findings, such as A03 injection or A05 misconfiguration in the 2021 numbering, are surgical. They specify a parameter, an endpoint, a payload, and an expected fix, and they typically remediate in a few lines of code. Design findings are sprawling. They describe a class of issues that recurs across endpoints and call for an architectural change. They remediate through a coordinated cross-team effort, and they typically occupy the longest sections of the pentest report.

A phrase auditors often use for A04 findings is "the code is secure but the design is broken." The implementation of any individual function may be unobjectionable; the issue sits at a higher level of abstraction. The login endpoint correctly verifies passwords, but the system has no rate limit on login attempts because the design did not specify one. The transfer endpoint correctly authorizes the user, but the system has no row-level locking on concurrent transfers because the design did not specify it. The recovery endpoint correctly sends emails, but the recovery flow trusts email-only verification because the design did not specify a second factor.
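The login scenario above is a missing design requirement, not a coding error. Below is a minimal sketch of the control the design should have specified. It assumes a per-account sliding window of failed attempts. The class and function names, the default of five failures, and the five-minute window are hypothetical choices, not values taken from any standard:

```python
import time
from collections import defaultdict, deque


class LoginAttemptLimiter:
    """Sliding-window limit on failed login attempts, keyed per account.

    Hypothetical parameters: at most `max_failures` failures within
    `window_seconds`; further attempts are refused until old failures age out.
    """

    def __init__(self, max_failures=5, window_seconds=300.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the window can be tested deterministically
        self._failures = defaultdict(deque)  # account -> timestamps of recent failures

    def _recent(self, account):
        # Drop failures that have fallen out of the window, return the rest.
        cutoff = self.clock() - self.window_seconds
        q = self._failures[account]
        while q and q[0] <= cutoff:
            q.popleft()
        return q

    def allowed(self, account):
        return len(self._recent(account)) < self.max_failures

    def record_failure(self, account):
        self._recent(account).append(self.clock())

    def record_success(self, account):
        self._failures.pop(account, None)


def login(limiter, account, password, verify_password):
    """Consult the limiter before the password is checked at all."""
    if not limiter.allowed(account):
        return "locked"
    if verify_password(account, password):
        limiter.record_success(account)
        return "ok"
    limiter.record_failure(account)
    return "denied"
```

Injecting the clock keeps the window testable. A production design would also need state shared across application instances and limits keyed by source. Per-account limits alone do not stop an attacker who tries one password against many accounts.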

Pentest findings in the A04 category typically include three things the implementation findings do not. First, they describe a threat scenario — "an attacker who can submit concurrent requests can overdraw an account" — rather than a specific vulnerable line of code. Second, they describe an architectural recommendation — "transfers must serialize through a row-level lock" — rather than a specific code change. Third, they describe a class of related issues — "this race-condition pattern likely applies to other balance-changing endpoints" — rather than a single isolated bug. The remediation timeline reflects the architectural scope: A04 findings often remain open across multiple release cycles while the team executes the architectural change.
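The transfer recommendation can be sketched as follows. On PostgreSQL or MySQL the row lock would typically come from `SELECT ... FOR UPDATE`. SQLite has no row-level locks, so this sketch swaps in a different but common technique: it folds the balance check and the debit into one conditional `UPDATE`. No concurrent request can then act between the check and the write. The schema and function names are hypothetical:

```python
import sqlite3


def create_schema(conn):
    # Hypothetical schema; the CHECK constraint is a second line of defense.
    conn.execute(
        "CREATE TABLE accounts ("
        " id TEXT PRIMARY KEY,"
        " balance INTEGER NOT NULL CHECK (balance >= 0))"
    )


def transfer(conn, src, dst, amount):
    """Move `amount` from src to dst; return False if funds are insufficient.

    The balance check and the debit are a single UPDATE, so there is no
    window between reading the balance and writing it (the check-then-act race).
    """
    if amount <= 0:
        raise ValueError("amount must be positive")
    with conn:  # one transaction: commit on normal exit, roll back on exception
        cur = conn.execute(
            "UPDATE accounts SET balance = balance - ? WHERE id = ? AND balance >= ?",
            (amount, src, amount),
        )
        if cur.rowcount != 1:
            return False  # insufficient funds or unknown source: nothing debited
        cur = conn.execute(
            "UPDATE accounts SET balance = balance + ? WHERE id = ?",
            (amount, dst),
        )
        if cur.rowcount != 1:
            raise LookupError("unknown destination account")  # debit is rolled back
    return True
```

With a starting balance of 100, two debits of 80 cannot both succeed, because the second conditional update matches no row. The same property holds whichever of two concurrent requests reaches the database first.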

An adjacent observation: A04 findings tend to be the highest-impact findings in the pentest report. The architecture-level vulnerabilities are the ones that translate into real-world losses, account takeovers, fraud, and public incident reports. These include race conditions, missing rate limits, insecure recovery flows, weak idempotency, and broken business logic. Implementation bugs produce the most numerous findings; design bugs produce the most expensive ones. A program that has invested in implementation hardening but not in design hardening will see this asymmetry in its own pentest reports: many low- and medium-severity findings, with the criticals concentrated in the design category.

Several architectural failures dominate the expensive end of the report. They include the OAuth confused deputy pattern and multi-tenant isolation failures in SaaS architectures that share a database without strong tenant scoping. They also include capability leakage between microservices in poorly segmented networks and credential reuse across environments. Each is an A04 finding by category. Each has a code-level expression somewhere, but the remediation is at the architectural layer, not in the code.
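Multi-tenant isolation failures usually come from a data layer where each query must remember to add tenant scoping. One structural alternative binds the tenant from the authenticated session once and applies it to every query the repository can issue. It is sketched here under hypothetical names (`TenantContext`, `InvoiceRepository`, an `invoices` table):

```python
import sqlite3
from dataclasses import dataclass


def create_schema(conn):
    # Hypothetical shared-database schema: every row carries its tenant.
    conn.execute(
        "CREATE TABLE invoices ("
        " id INTEGER PRIMARY KEY,"
        " tenant_id TEXT NOT NULL,"
        " amount INTEGER NOT NULL)"
    )


@dataclass(frozen=True)
class TenantContext:
    tenant_id: str  # derived from the authenticated session, never from request input


class InvoiceRepository:
    """Data access that cannot be constructed or queried without a tenant scope."""

    def __init__(self, conn, ctx):
        self._conn = conn
        self._tenant = ctx.tenant_id

    def get(self, invoice_id):
        # Returns None for an invoice owned by another tenant, the same
        # response as a nonexistent id, so callers cannot probe other tenants.
        return self._conn.execute(
            "SELECT id, amount FROM invoices WHERE id = ? AND tenant_id = ?",
            (invoice_id, self._tenant),
        ).fetchone()

    def list_all(self):
        return self._conn.execute(
            "SELECT id, amount FROM invoices WHERE tenant_id = ? ORDER BY id",
            (self._tenant,),
        ).fetchall()
```

The design choice is that callers have no code path that omits the tenant filter. A database-level mechanism such as PostgreSQL row-level security can enforce the same invariant beneath the application.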

The Mitigation Playbook

The remediation discipline for A04 is structurally different from the remediation discipline for the implementation-focused categories. The disciplines below are the canonical playbook — applying them consistently is what distinguishes programs that close their A04 surface from programs that ship the same architectural failures release after release.

Push security left to the design phase. The leverage point for A04 is design review, not code review. By the time code is written, the design has already specified, or failed to specify, the controls the code must enforce. An hour of design-stage security review typically buys far more risk reduction than an hour of code-stage review, because a design change is cheapest before any code depends on it. The shift requires three things: security partners attend design discussions, design documents include a security section, and design reviews include a threat-modeling step. The investment is real and the return is real.

Threat-model every story above a complexity threshold. Not every story needs threat modeling — a copy-update PR does not need a STRIDE walkthrough. Every story that introduces a new endpoint, modifies a trust boundary, adds a new external integration, changes the data model, or affects authorization does need threat modeling. The team agrees on the threshold and applies the discipline consistently above it. The cadence catches A04 findings during design rather than discovering them in pentest.
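The threshold works best as an explicit, reviewable rule rather than a judgment call each sprint. Here is a minimal sketch, assuming the team tags each story with the five triggers listed above. The flag names, the wording of the reasons, and the `StoryChange` type are hypothetical:

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class StoryChange:
    """Hypothetical flags set on a story during refinement."""
    adds_endpoint: bool = False
    modifies_trust_boundary: bool = False
    adds_external_integration: bool = False
    changes_data_model: bool = False
    affects_authorization: bool = False


# Human-readable reason for each trigger flag.
TRIGGER_REASONS = {
    "adds_endpoint": "introduces a new endpoint",
    "modifies_trust_boundary": "modifies a trust boundary",
    "adds_external_integration": "adds an external integration",
    "changes_data_model": "changes the data model",
    "affects_authorization": "affects authorization",
}


def threat_model_reasons(change):
    """Return why the story needs threat modeling; [] means below the threshold."""
    return [TRIGGER_REASONS[f.name] for f in fields(change) if getattr(change, f.name)]
```

Returning the reasons rather than a bare boolean gives the threat-modeling session a starting agenda.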

Ship secure-by-default architecture patterns. The framework, the platform, and the libraries the team builds on should make the secure path the easy path. New endpoints inherit authorization by default and require explicit opt-out. The data-access layer issues parameterized queries by default. New external integrations require explicit security configuration. The pattern shifts the burden: developers no longer have to remember to add security; they have to deliberately remove it when they truly need to. The shift substantially reduces the rate of insecure-by-omission designs.
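Access control by default with explicit opt-out can be sketched as a router that refuses unauthenticated calls unless a handler carries a visible `@public` marker. This is a hypothetical toy, not any framework's API. It only checks authentication; a real platform would apply the same default to per-resource authorization:

```python
class Forbidden(Exception):
    """Raised when a non-public route is called without an authenticated user."""


def public(handler):
    """Explicit, greppable opt-out from the default authentication requirement."""
    handler.is_public = True
    return handler


class Router:
    def __init__(self):
        self._routes = {}

    def route(self, path):
        def register(handler):
            self._routes[path] = handler
            return handler
        return register

    def dispatch(self, path, user=None):
        handler = self._routes[path]
        # Secure default: every route requires a user unless explicitly marked public.
        if user is None and not getattr(handler, "is_public", False):
            raise Forbidden(f"authentication required for {path}")
        return handler(user)


router = Router()


@router.route("/health")
@public
def health(user):
    return "up"


@router.route("/account")
def account(user):
    return f"account of {user}"
```

A developer who forgets to think about access gets a locked endpoint, not an open one. Every exception is a `@public` line a reviewer can search for.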

Build a misuse-case library. The team maintains a living catalog of business-logic abuse patterns observed in the industry, in the team's own pentest history, and in the threat-modeling backlog. Every new feature is evaluated against the library: does this feature have an analog in the library, what abuse patterns apply, what controls address them. The library is the institutional memory that prevents the team from rediscovering the same A04 findings every year.
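The library can start as plain structured data that feature reviews query by tag. Here is a minimal sketch with hypothetical field names. Its three entries are drawn from scenarios this guide already describes:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MisuseCase:
    name: str
    abuse: str            # how the feature is turned against its purpose
    controls: tuple       # design-level controls that address it
    tags: frozenset       # feature traits that make the case applicable


LIBRARY = [
    MisuseCase(
        "Concurrent overdraw",
        "Parallel requests race a balance check",
        ("atomic conditional update or row-level lock",),
        frozenset({"balance", "concurrency"}),
    ),
    MisuseCase(
        "Credential stuffing",
        "Automated login attempts with leaked passwords",
        ("per-account and per-source rate limits", "second factor"),
        frozenset({"login"}),
    ),
    MisuseCase(
        "Email-only account recovery",
        "Taking over the mailbox takes over the account",
        ("second factor on recovery",),
        frozenset({"recovery"}),
    ),
]


def applicable(feature_tags, library=LIBRARY):
    """Return the misuse cases whose tags overlap the new feature's tags."""
    tags = set(feature_tags)
    return [case for case in library if case.tags & tags]
```

New pentest findings and threat-model outcomes become new entries. That keeps the institutional memory queryable rather than buried in old reports.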

Train designers, not just developers. The category of A04 implies a category of practitioner — the architect or staff engineer who makes design decisions. Security training that targets implementation patterns trains code authors; the gap is in training the people who make architectural decisions. Design-phase security training, threat-modeling training, secure-by-design pattern training are all qualitatively different from secure-coding training and require their own curriculum. Design-phase security training is the investment that addresses the A04 root cause.

Validate with TARA, PASTA, or equivalent risk-based methodologies. Threat-modeling methodologies vary in weight, and several have their place. These include MITRE's TARA (Threat Assessment and Remediation Analysis), PASTA (Process for Attack Simulation and Threat Analysis), and the lightweight rapid-threat-modeling approaches. The choice of methodology matters less than applying one consistently. Mature programs select a methodology, train the team on it, integrate it into the design-review process, and iterate on its effectiveness.

Connect design findings back to implementation discipline. A04 and the implementation-focused categories are not in opposition; they are complementary. A team that has closed its A04 surface but not its A03 surface still ships injection. A team that has closed its A03 surface but not its A04 surface still ships business-logic abuse. The mature program invests in both, and the design-stage investment produces leverage that code-stage investment alone cannot reach.

Design Decisions Produce the Most Expensive Incidents.

A SAST tool can find a concatenated SQL string, and a DAST tool can find a missing security header. No tool will tell you that your money-transfer endpoint has a race condition or that your account-recovery flow trusts email when it should not. Those are design decisions, made before the code was written, and only design-phase training and design-phase review can change them. SecureCodingHub builds design-phase security training alongside our secure-coding curriculum: threat modeling, secure design patterns, and misuse-case fluency. That way your architects ship the right design and your developers implement what the design specifies. If insecure design has been filling the longest sections of your pentest reports, we'd be glad to walk through how the program addresses it.

Closing: Secure by Design, or Not Secure at All

The introduction of insecure design as a top-level OWASP category in 2021 did more than add another row to a list. It made visible a category of failure the industry had treated as out of scope for security tooling. That category produces the largest, most expensive, and hardest-to-remediate incidents in modern application security. The 2025 revision keeps Insecure Design on the list, and the industry's emphasis on design-stage security keeps growing. Both reflect a recognition that secure coding alone is insufficient. A team that writes flawless code on top of a broken design ships a broken system. The corollary is that secure design without secure coding also fails. The design specifies controls the code must enforce, and the code must actually enforce them. Even so, the order of investment matters. Design-stage failures are the multipliers; addressing them produces leverage that code-stage discipline cannot reach.

The patterns this guide describes — threat modeling as the cure, secure design patterns as the menu, anti-patterns to avoid, reference architectures to start from — are not novel. They have been in the industry literature for decades. The reason A04 remains in the OWASP Top 10 is not that the disciplines are unknown; it is that the disciplines are unevenly applied. Programs that have institutionalized threat modeling, that train architects on secure design, that ship secure-by-default platforms, that maintain misuse-case libraries — those programs ship secure designs. Programs that have not made those investments ship insecure designs no matter how much they invest in code-level security.

The engineering investment design-stage security requires is real. It is not free, it is not fast, and it is not the kind of investment that produces visible artifacts in the same way a SAST tool's dashboard produces visible findings. The return on the investment is measured in the absence of incidents — the breach that did not happen because the threat model identified the vector at design time, the business-logic abuse that was caught in misuse-case review before it reached production, the architectural failure mode that the secure-by-default framework prevented from being introduced. The teams that have made the investment know it; the teams that have not are reading their next pentest report and seeing where the longest sections concentrate.

OWASP A04 — insecure design — is the category that asks whether security was a property of the system from the beginning. The answer, for most systems shipping in 2026, is "partially." Closing the gap is the work of the next several years, and it is, dollar for dollar, the highest-leverage security investment a development organization can make.